In the news | ZDNet

SONIC Featured in ZDNet

What bhasha do you want to talk in?

Many of us who speak multiple languages switch seamlessly between them in conversations and even mix multiple languages in one sentence. For us humans, this is something we do naturally, but it’s a nightmare for computing systems to understand mixed…

As COVID-19 reshapes large sections of the workforce, many employees have found themselves struggling to establish a work-life balance. A survey conducted by the Browser User Researcher team looked at how Information Workers (IWs) are adapting to their new environments…

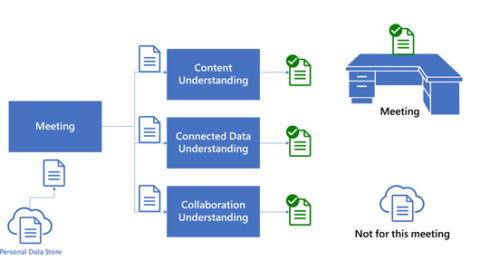

Meeting Insights: Contextual assistance for everyone

Abhishek Arun, Ramakrishna Bairi, Paul Bennett, Kevin Moynihan, and Adam D. Troy

It’s a big day for you. Back-to-back meetings are scheduled with critical customers and partners, and a parent-teacher conference is sandwiched in there as well. As you’re headed toward the last meeting, suddenly you cannot remember the key talking points.…

He was the first Chinese scholar to become a “complete” fellow at all the following organizations: ACM, AAAI, IEEE, AAAS and IAPR. He was the first Chinese scholar to serve as the program chair of AAAI. He was elected as…

Old tools, new tricks: Improving the computational notebook experience for data scientists

Titus Barik, Ishita Prasad, Rob DeLine, and Sumit Gulwani

As technology has advanced, the way we accomplish things in our lives has shifted. While new tech is influencing such basic human activities as communication, for instance, encouraging us to reconsider what it means to connect with one another, technological…

We are announcing Hummingbird, a library for accelerating inference (scoring/prediction) in traditional machine learning models. Internally, Hummingbird compiles traditional ML pipelines into tensor computations to take advantage of the optimizations that are being implemented for neural network systems.
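To make the idea of compiling a traditional model into tensor computations concrete, here is a minimal sketch that evaluates a small hand-built decision tree using only matrix multiplications and element-wise comparisons. It is an illustration of the general technique, not Hummingbird's API or implementation: the tree, the matrices `A` through `E`, and the helpers `matmul` and `predict` are all hypothetical names made up for this example, and plain Python lists stand in for tensors.

```python
# Illustrative only: a hand-built decision tree evaluated with matrix
# operations. All names are hypothetical and are NOT Hummingbird's API.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

# Assumed example tree over two features (x0, x1):
#   node0: x0 < 5.0 ? leaf0 (class 0)
#          otherwise node1: x1 < 2.0 ? leaf1 (class 1) : leaf2 (class 0)

# A[f][i] = 1 if internal node i tests feature f.
A = [[1, 0],
     [0, 1]]
# B[i] = threshold of internal node i.
B = [5.0, 2.0]
# C[i][l] = 1 if leaf l lies in the left subtree of node i,
#          -1 if it lies in the right subtree, 0 if node i is not an ancestor.
C = [[1, -1, -1],
     [0,  1, -1]]
# D[l] = number of ancestors of leaf l that reach it through their left branch.
D = [1, 1, 0]
# E[l][c] = 1 if leaf l predicts class c.
E = [[1, 0],
     [0, 1],
     [1, 0]]

def predict(X):
    """Return the predicted class for each row of X."""
    tested = matmul(X, A)  # feature value each internal node looks at
    went_left = [[1 if v < t else 0 for v, t in zip(row, B)] for row in tested]
    path_score = matmul(went_left, C)  # agreement with each leaf's path
    leaf_hit = [[1 if s == d else 0 for s, d in zip(row, D)]
                for row in path_score]  # one-hot over leaves
    class_onehot = matmul(leaf_hit, E)  # one-hot over classes
    return [row.index(1) for row in class_onehot]
```

Every input row goes through the same fixed sequence of multiplications and comparisons, with no data-dependent branching, which is the property that lets this style of computation run on runtimes built for neural networks. For example, `predict([[1.0, 9.0], [7.0, 1.0]])` evaluates both rows in one pass.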

Harvesting randomness, HAIbrid algorithms and safe AI with Dr. Siddhartha Sen

Dr. Siddhartha Sen is a Principal Researcher in MSR’s New York City lab, and his research interests are, if not impossible, at least impossible sounding: optimal decision making, universal data structures, and verifiably safe AI. Today, he tells us how…

In the news | DeepSpeed.ai

Microsoft DeepSpeed achieves the fastest BERT training time

Good news! DeepSpeed obtains the fastest BERT training record: 44 minutes on 1024 NVIDIA V100 GPUs. This is a 30% improvement over the best published result of 67 minutes in end-to-end training time to achieve the same accuracy on the…