Innovating in India with Dr. Sriram Rajamani

Dr. Sriram Rajamani is a Distinguished Scientist and the Managing Director of the Microsoft Research lab in Bangalore. He’s dedicated his career to advancing globally applicable science in the testbed that is India. He is, by any measure, a world-class…

In the news | WinBuzzer

Microsoft Open Sources BERT for ONNX Runtime

In December, Microsoft open sourced its ONNX Runtime inference engine. Now, the company says it also open-sourced an optimized version of BERT, a natural language model from Google, for ONNX.

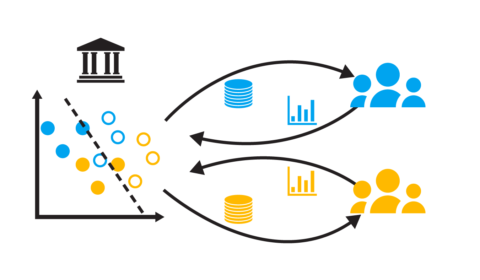

When bias begets bias: A source of negative feedback loops in AI systems

Lydia T. Liu

Is bias in AI self-reinforcing? Decision-making systems that impact criminal justice, financial institutions, human resources, and many other areas often have bias. This is especially true of algorithmic systems that learn from historical data, which tends to reflect existing societal…

In the news | VentureBeat

Microsoft open-sources ONNX Runtime model to speed up Google’s BERT

Microsoft Research AI today said it plans to open-source an optimized version of Google’s popular BERT natural language model designed to work with the ONNX Runtime inference engine. Microsoft uses the same model to lower latency for BERT when…

In the news | AmstatNews

Summary of JSM 2019 Session on Formal Privacy: Making an Impact at Large Organizations

With the growing amount of data collected every day, data confidentiality is increasingly at risk. Many of the traditional approaches to statistical disclosure control are no longer deemed sufficient to protect the confidentiality of the data. Formal privacy guarantees are…

In the news | ZDNet

Microsoft makes performance, speed optimizations to ONNX machine-learning runtime available to developers

Microsoft is open sourcing and integrating some updates it has made in deep-learning models used for natural-language processing. On January 21, the company announced it is making these optimizations available to developers by integrating them into the ONNX Runtime.

Awards | IEEE Computer Society

Nachi Nagappan to receive 2020 Harlan D. Mills Award

Nachi Nagappan has been selected to receive the 2020 Harlan D. Mills Award for his “outstanding contributions to empirical software engineering and data-driven software development.” The Harlan D. Mills Award recognizes researchers and practitioners who have demonstrated…

In the news | Microsoft Open Source Blog

Microsoft open sources breakthrough optimizations for transformer inference on GPU and CPU

One of the most popular deep learning models used for natural language processing is BERT (Bidirectional Encoder Representations from Transformers). Due to the significant computation required, inferencing BERT at high scale can be extremely costly and may…

In the news | Linux.com

Microsoft Opens Up Rust-Inspired Project Verona Programming Language On GitHub