Microsoft's 3D generative diffusion model RODIN: custom 3D digital avatars in seconds

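Editor's note: The Roll-out Diffusion Network (RODIN) model, recently proposed by Microsoft Research Asia, is the first to use a generative diffusion model trained on 3D data to automatically generate 3D digital avatars. Given only a single image, or even a single sentence of text, the RODIN diffusion model can generate a 3D avatar in seconds, making low-cost custom 3D avatars possible and opening up more room for imagination in 3D content creation. The related paper, “RODIN: A Genera...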

In the news | The Economic Times

Microsoft Research India is creating tools to help preserve fast disappearing languages

In 2010, Bo, a language of the Andaman Islands that is at least 65,000 years old, became extinct when the only person who spoke this pre-Neolithic tongue died. This isn’t an isolated case. Every two weeks, a language is lost…

Introducing CliffordLayers: Neural Network layers inspired by Clifford / Geometric Algebras.

We are open-sourcing CliffordLayers, a repo for building neural network layers inspired by Clifford / Geometric Algebras. This repo contains the source code of our ICLR 2023 paper Clifford Neural Layers for PDE Modeling…

Research Focus: Week of March 6, 2023

Welcome to Research Focus, a series of blog posts that highlights notable publications, events, code/datasets, new hires and other milestones from across the research community at Microsoft. Attack methods like Spectre exploit speculative execution, one of…

Completing the puzzle of the computing academic ecosystem takes these “slash women”

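In the Academic Collaboration department of Microsoft Research Asia, women from different countries, with different academic backgrounds and life experiences, are building bridges of communication and collaboration for cross-disciplinary research, each in the way she does best. These “slash women” bring unconventional workplace philosophies and approaches to life, and they thrive in an open, diverse, and inclusive atmosphere, connecting the lab with its many partners and using their passion and wisdom to fill in indispensable pieces of the wider computing academic ecosystem. Miran Lee, Director of Academic Collaboration, Microsoft Research Asia. Nationality: South Korea. Relationship-management expert / autonomous...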

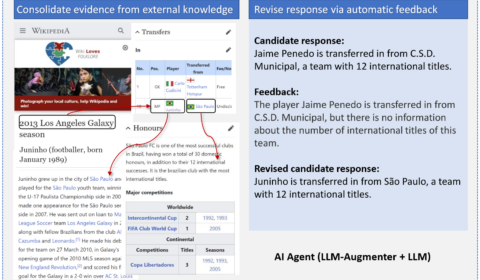

Large language models (LLMs), such as ChatGPT, are able to generate human-like, fluent responses for many downstream tasks, e.g., task-oriented dialog and question answering. However, applying LLMs to real-world, mission-critical applications remains challenging mainly due to their tendency to generate…

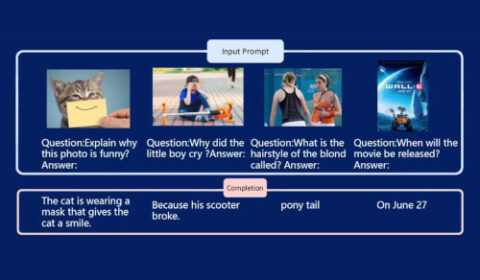

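By Synced (机器之心). In NLP, large language models (LLMs) have successfully served as a general-purpose interface across a variety of natural language tasks. As long as the input and output can be converted to text, an LLM-based interface can complete a task. For summarization, for example, we can feed a document into the language model and it generates a summary. Despite the successful application of LLMs to NLP tasks, researchers still struggle to use them natively for multimodal data such as images and audio. As a basic component of intelligence, multimodal perception is...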

In the news | Julia Chatterly - CNN

Eyes in the sky – Planet Labs satellites track a changing globe

“It’s helping to lift the fog of war. No longer can anyone hide.” @planet Labs CEO @Will4Planet on how their satellite data and AI is transforming damage assessment in #Ukraine & #Turkey, tackling food security & deforestation.

We are announcing SMART, a generalized pretraining framework for a wide variety of control tasks. Self-supervised pretraining of large neural networks (BERT, GPT, MoCo, and CLIP…