News & features

Research Focus: Week of February 5, 2024

Research Focus: New Research Forum series explores bold ideas in the era of AI; LASER improves reasoning in language models; Cache-Efficient Top-k Aggregation over High Cardinality Large Datasets; Six Microsoft researchers named 2023 ACM Fellows.

Jianfeng Gao, Sumit Gulwani, Nicole Immorlica, Stefan Saroiu, Manik Varma, and Xing Xie are among the new class of 68 Association for Computing Machinery (ACM) Fellows, recognized for their transformative contributions to computing science and technology.

Data Formulator: A concept-driven, AI-powered approach to data visualization

Chenglong Wang, Bongshin Lee, John Thompson, Steven Drucker, and Jianfeng Gao

Visualization is vital for understanding complex data, but existing tools require “tidy data,” which adds extra data-preparation steps. Learn how Data Formulator transforms concepts into visuals, promoting collaboration between analysts and AI agents.

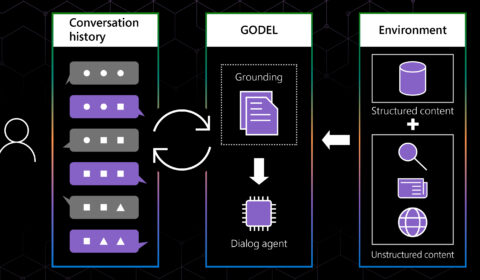

GODEL: Combining goal-oriented dialog with real-world conversations

Baolin Peng, Michel Galley, Lars Liden, Chris Brockett, Zhou Yu, and Jianfeng Gao

They make restaurant recommendations, help us pay bills, and remind us of appointments. Many people have come to rely on virtual assistants and chatbots to perform a wide range of routine tasks. But what if a single dialog agent, the…

In the news | Analytics India

Interview with the team behind Microsoft’s µTransfer

Recently, Microsoft researchers Edward Hu, Greg Yang, and Jianfeng Gao introduced µ-Parametrization (µP), which enables maximal feature learning even in the infinite-width limit.

µTransfer: A technique for hyperparameter tuning of enormous neural networks

Edward Hu, Greg Yang, and Jianfeng Gao

Great scientific achievements cannot be made by trial and error alone. Every launch in the space program is underpinned by centuries of fundamental research in aerodynamics, propulsion, and celestial bodies. In the same way, when it comes to building large-scale…

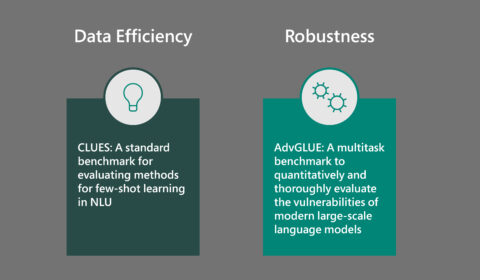

You get what you measure: New NLU benchmarks for few-shot learning and robustness evaluation

Jianfeng Gao and Ahmed Awadallah

Recent progress in natural language understanding (NLU) has been driven in part by the availability of large-scale benchmarks that provide an environment for researchers to test and measure the performance of AI models. Most of these benchmarks are designed for…

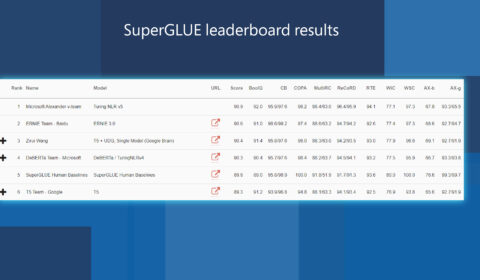

Efficiently and effectively scaling up language model pretraining for best language representation model on GLUE and SuperGLUE

Jianfeng Gao and Saurabh Tiwary

As part of Microsoft AI at Scale, the Turing family of NLP models is being used at scale across Microsoft to enable the next generation of AI experiences. Today, we are happy to announce that the…

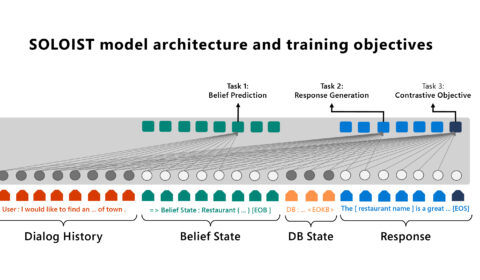

SOLOIST: Pairing transfer learning and machine teaching to advance task bots at scale

Baolin Peng, Chunyuan Li, Jinchao Li, Lars Liden, and Jianfeng Gao

The increasing use of personal assistants and messaging applications has spurred interest in building task-oriented dialog systems (or task bots) that can communicate with users through natural language to accomplish a wide range of tasks, such as restaurant booking, weather…