In the news | Possible

Peter Lee on the Future of Health and Medicine

A conversation with Peter Lee on the POSSIBLE Podcast. "At some point, it just makes sense that, of course, you would never practice medicine without washing your hands first. And we will definitively get to a point—probably sooner than we…

In the news | Communications of the ACM

What Would the Chatbot Say?

Sebastien Bubeck is a senior principal research manager in the Machine Learning Foundations Group at Microsoft Research. Bubeck often generates stories about unicorns for his young daughter using a chatbot powered by GPT-4, the latest large language model (LLM) by…

In the news | Scientific American

When It Comes to AI Models, Bigger Isn’t Always Better

Artificial intelligence models are getting bigger, along with the data sets used to train them. But scaling down could solve some big AI problems. Artificial intelligence has been growing in size. The large language models (LLMs) that power prominent chatbots,…

Orca 2: Teaching Small Language Models How to Reason

| Ahmed Awadallah, Andres Codas, Luciano Del Corro, Hamed Khanpour, Shweti Mahajan, Arindam Mitra, Hamid Palangi, Corby Rosset, Clarisse Simoes Ribeiro, and Guoqing Zheng

At Microsoft, we’re expanding AI capabilities by training small language models to achieve the kind of enhanced reasoning and comprehension typically found only in much larger models.

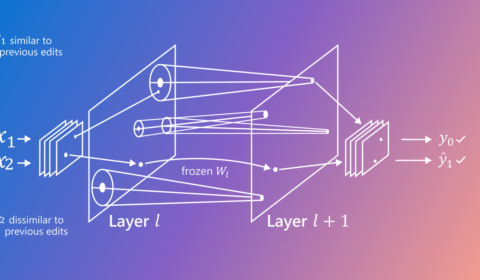

Lifelong model editing in large language models: Balancing low-cost targeted edits and catastrophic forgetting

| Tom Hartvigsen and Hamid Palangi

Lifelong model editing fixes mistakes discovered after model deployment. This work could expand sequential editing to model properties like fairness and privacy and enable a new class of solutions for adapting LLMs over long deployment lifetimes.

Abstracts: November 20, 2023

| Gretchen Huizinga and Shrey Jain

Today I'm talking to Shrey Jain, an applied scientist at Microsoft Research, and Dr. Zoë Hitzig, a junior fellow at the Harvard Society of Fellows.

In the news | VentureBeat

Microsoft releases Orca 2, a pair of small language models that outperform larger counterparts

Even as the world bears witness to the power struggle and mass resignation at OpenAI, Microsoft, the long-time backer of the AI major, is not slowing down its own AI efforts. Today, the research arm of the Satya Nadella-led company…

StarTrack Shines | Zhang Fusang: Doing human-centered research and meeting like-minded partners

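Editor's note: The Microsoft Research Asia StarTrack Scholars Program invites outstanding young scholars from around the world to spend three months as research visitors at Microsoft Research Asia. The program offers the chance to collaborate with top researchers in the field, rich datasets and strong support resources, and real-world application scenarios that only industry can provide. These draw talented young scholars to Microsoft Research Asia to explore the frontiers of their fields. This article tells the StarTrack story of Zhang Fusang, a 2022 StarTrack visiting scholar and associate research professor at the Institute of Software, Chinese Academy of Sciences. Wherever there are people, there are scenarios, and wireless…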

StarTrack Shines | Zheng Weilong: Through brain waves, I want to see the world as people with depression see it

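Editor's note: The Microsoft Research Asia StarTrack Scholars Program invites outstanding young scholars from around the world to spend three months as research visitors at Microsoft Research Asia. The program offers the chance to collaborate with top researchers in the field, rich datasets and strong support resources, and real-world application scenarios that only industry can provide. These draw talented young scholars to Microsoft Research Asia to explore the frontiers of their fields. This article tells the StarTrack story of Zheng Weilong, a 2022 StarTrack visiting scholar and associate professor at Shanghai Jiao Tong University. If we could know how the world seen through the eyes of people with depression differs from that of ordinary…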