In the news | Microsoft Translator Blog

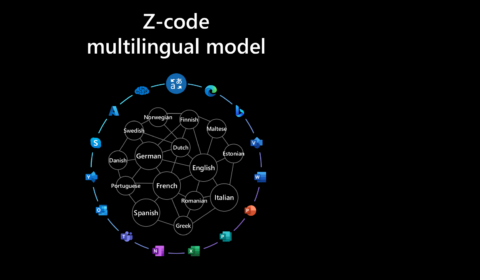

Multilingual translation at scale: 10000 language pairs and beyond

Microsoft is on a quest for AI at Scale with high ambition to enable the next generation of AI experiences. The Microsoft Translator ZCode team is working together with Microsoft Project Turing and Microsoft Research Asia to advance language and…

Microsoft Translator: Now translating 100 languages and counting!

| Krishna Doss Mohan and Jann Skotdal

Today, we’re excited to announce that Microsoft Translator has added 12 new languages and dialects to the growing repertoire of Microsoft Azure Cognitive Services Translator, bringing us to a total of 103 languages! The new languages, which are natively spoken…

In the news | VentureBeat

Microsoft taps AI techniques to bring Translator to 100 languages

Today, Microsoft announced that Microsoft Translator, its AI-powered text translation service, now supports more than 100 different languages and dialects. With the addition of 12 new languages including Georgian, Macedonian, Tibetan, and Uyghur, Microsoft claims that Translator can now make…

In the news | Microsoft AI Blog

Azure AI empowers organizations to serve users in more than 100 languages

Microsoft announced today that 12 new languages and dialects have been added to Translator. These additions mean that the service can now translate between more than 100 languages and dialects, making information in text and documents accessible to 5.66 billion…

DeepSpeed powers 8x larger MoE model training with high performance

| DeepSpeed Team and Z-code Team

Today, we are proud to announce DeepSpeed MoE, a high-performance system that supports massive scale mixture of experts (MoE) models as part of the DeepSpeed optimization library. MoE models are an emerging class of sparsely activated…

In the news | Microsoft AI - Cognitive Services Blog

Summarize text with Text Analytics API

The extractive summarization feature in Text Analytics uses natural language processing techniques to locate key sentences in an unstructured text document. These sentences collectively convey the main idea of the document. This feature is provided as an API for developers.…

In the news | Microsoft AI - Cognitive Services Blog

Announcing GA of Text Analytics for health, Opinion Mining, PII and Analyze

It has been a year since we released (in GA) our last TA API (v3.0). After five previews adding features, responsible AI, customer feedback, UX feedback, and optimizations, in July 2021 we announced GA (General Availability) of Text…

In the news | customers.microsoft.com

Machine translation speaks Volkswagen – in 40 languages

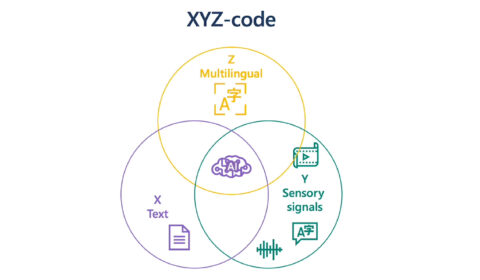

A holistic representation toward integrative AI

| Xuedong Huang

At Microsoft, we have been on a quest to advance AI beyond existing techniques, by taking a more holistic, human-centric approach to learning and understanding. As Chief Technology Officer of Azure AI Cognitive Services, I have been working with a…