“During the sprint development at the Microsoft AI Co-Innovation Lab KOBE, we leveraged Azure OpenAI and Azure AI Speech to confirm the feasibility of intuitive remote robot operation through voice.

With generative AI functioning as a 'translator between people and robots,' we feel confident that a future where anyone can participate in remote robot operation — regardless of IT skills or physical constraints — is within reach.

Going forward, we will continue adopting the latest AI technologies to drive the social implementation of 'human-centered remote robotics' in the era of Physical AI.”

Innovation Challenge

This initiative is positioned not merely as a UI improvement or voice input experiment, but as a practical implementation of Physical AI — where AI makes decisions and moves the real world.

For the flagship platform Remolink, the objectives were:

- Voice-driven application operation

- Generative AI–assisted operation understanding and code generation

- UX validation for robot/edge device control

These were intensively validated within a short timeframe, with the goal of turning the vision of “human-centered remote robotics” into technology that actually works.

Why “Voice × Generative AI”?

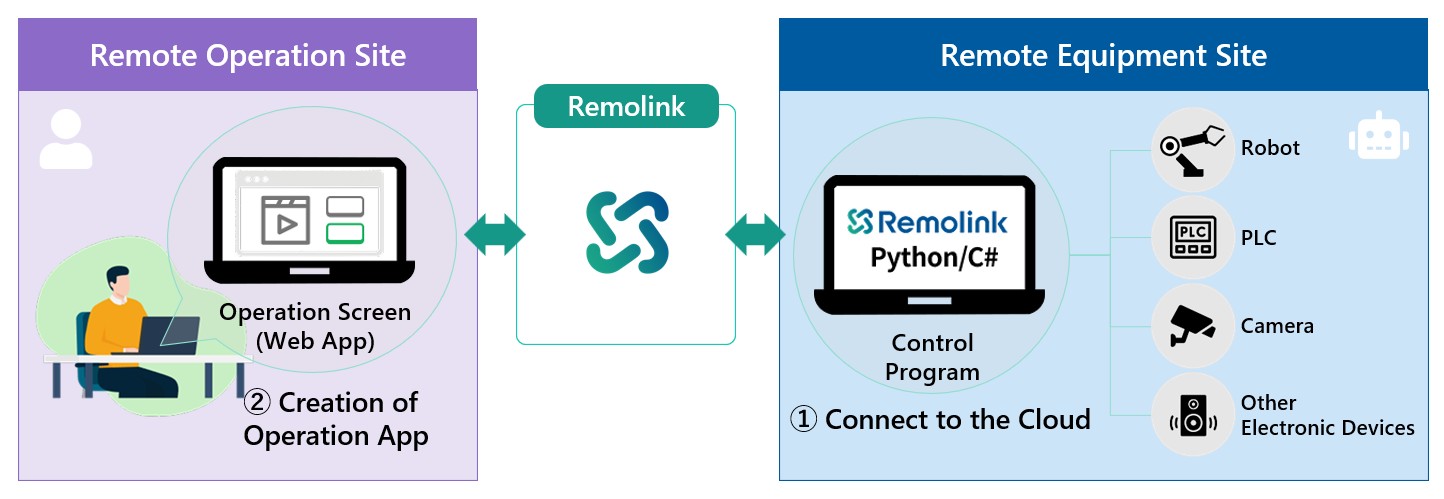

Remote Robotics, Inc., established as a joint venture between Kawasaki Heavy Industries, Ltd. and Sony Group Corporation, provides “Remolink,” a web-based platform for remotely operating and monitoring:

- Autonomous Mobile Robots (AMR)

- Industrial equipment and PLCs

- Edge devices such as network cameras

However, as the platform evolved, the following challenges emerged:

- An increasing number of operation items

- Growing UI complexity and sophistication

As a result, the barrier to operation was rising for:

- Elderly users

- On-site workers unfamiliar with IT

- People with disabilities

To address this dilemma, the company focused on “voice-based operation” and “generative AI–powered understanding and assistance.”

Rather than memorizing the UI, users simply speak, and AI understands their intent, makes decisions, and carries out the operation on their behalf.

This experience was deemed essential for the next generation of remote robotics.

Bridging the Gap Between People and Robots with AI

The essence of this Co-Innovation was not simply the introduction of speech recognition. The system had to:

- Understand “what the user wants to do” through voice

- Correctly map it to the UI/workflow on Remolink

- Reflect it in actual robot/device control

The key was to achieve an end-to-end flow:

Human Intent → Digital Operation → Physical World Action

The critical question was how generative AI could interpret operations that are intuitive for humans but ambiguous for machines, and translate them into safe and accurate control commands. Such operations include:

- Button clicks

- Dropdown selections

- Checkbox changes

- Camera operations (zoom, switching)
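One way to keep the translation from intent to control command safe is to check every operation the model proposes against a description of the UI before anything is executed. The following is a minimal sketch under stated assumptions: the element names, the `UiAction` shape, and the zoom range are all hypothetical and are not taken from Remolink.

```python
from dataclasses import dataclass
from typing import Any

# Hypothetical UI description; a real app would supply its own elements.
UI_ELEMENTS = {
    "button_01": {"type": "button"},
    "mode_select": {"type": "dropdown", "options": ["auto", "manual"]},
    "alarm_enabled": {"type": "checkbox"},
    "camera_1": {"type": "camera", "zoom_range": (1.0, 10.0)},
}


@dataclass(frozen=True)
class UiAction:
    """An operation proposed by the model (assumed shape)."""
    target: str
    action: str
    value: Any = None


def validate(action: UiAction) -> None:
    """Raise ValueError unless the action is allowed for its target element."""
    element = UI_ELEMENTS.get(action.target)
    if element is None:
        raise ValueError(f"unknown element: {action.target!r}")
    kind = element["type"]
    if kind == "button" and action.action == "click":
        return
    if kind == "dropdown" and action.action == "select":
        if action.value not in element["options"]:
            raise ValueError(f"invalid option: {action.value!r}")
        return
    if kind == "checkbox" and action.action == "set":
        if not isinstance(action.value, bool):
            raise ValueError("checkbox value must be True or False")
        return
    if kind == "camera" and action.action == "zoom":
        low, high = element["zoom_range"]
        is_number = isinstance(action.value, (int, float)) and not isinstance(
            action.value, bool
        )
        if not is_number or not low <= action.value <= high:
            raise ValueError(f"zoom must be between {low} and {high}")
        return
    raise ValueError(f"{action.action!r} is not allowed on a {kind}")
```

In this sketch, anything the model invents (an unknown element, an out-of-range zoom, a click on a dropdown) is rejected before it can reach a device.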

This architecture is the core of Physical AI, and the prototype served as a well-developed, concrete example of “AI that goes beyond the screen.”

In the Lab: Prototyping (Sprint Development)

At the Microsoft AI Co-Innovation Lab KOBE, sprint development was conducted following onboarding that included tech calls and design thinking sessions.

Goal Setting

- Voice operation of no-code apps created with Remolink

- Validation of recognition and operation accuracy for Japanese voice input

- Technology selection with future product integration in mind

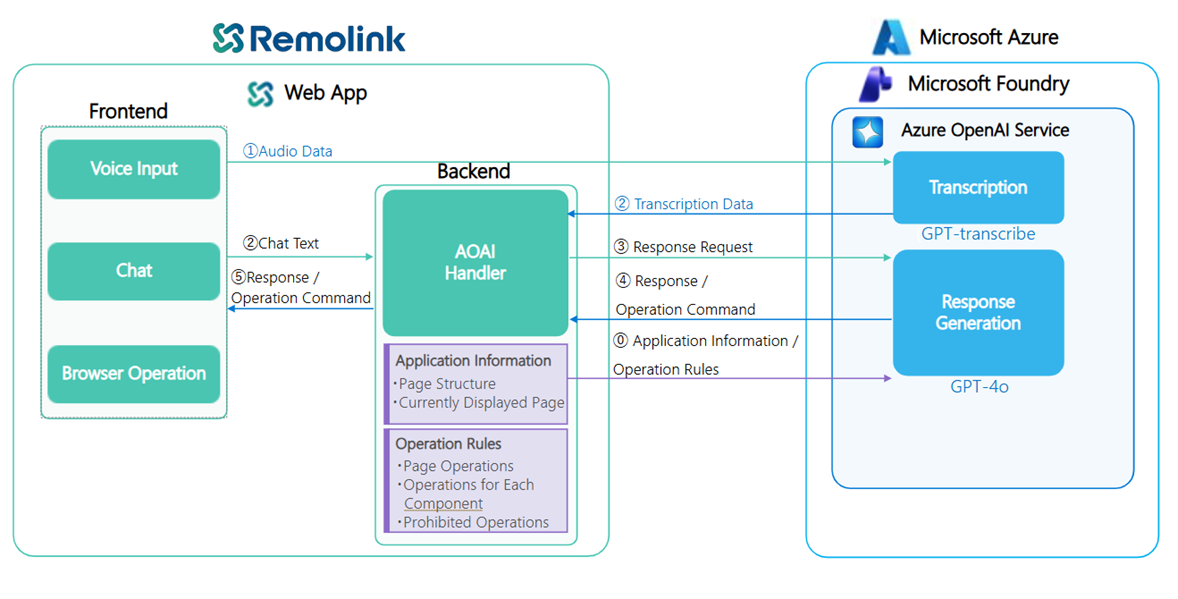

Architecture

Speech Recognition

- Azure AI Speech

- GPT-4o / GPT-5 transcribe

- Validation focused on accuracy for numeric expressions and Japanese context understanding

Generative AI (Azure OpenAI)

- Converts voice instructions into UI operations and control logic

- GPT-4o (low latency, conversational) and GPT-5 (advanced reasoning) used for different tasks

Remolink SDK

- Connects generated operation logic to actual robot/device control
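The three components above can be read as stages of a single pipeline: audio goes in, a transcript comes out, the transcript becomes a structured operation, and the operation is executed. The sketch below only illustrates that shape. The stage functions are stand-ins written for this example and do not call Azure AI Speech, Azure OpenAI, or the Remolink SDK.

```python
from typing import Any, Callable, Dict

Transcriber = Callable[[bytes], str]            # speech recognition stage
Planner = Callable[[str], Dict[str, Any]]       # generative AI stage
Executor = Callable[[Dict[str, Any]], str]      # SDK / device control stage


def run_voice_command(
    audio: bytes, transcribe: Transcriber, plan: Planner, execute: Executor
) -> str:
    """Run one utterance through transcription, planning, and execution."""
    text = transcribe(audio)
    operation = plan(text)
    return execute(operation)


# Stand-in stages for illustration only (all hypothetical).
def fake_transcribe(audio: bytes) -> str:
    return "press button 01"


def fake_plan(text: str) -> Dict[str, Any]:
    return {"target": "button_01", "action": "click", "source_text": text}


def fake_execute(operation: Dict[str, Any]) -> str:
    return f"did {operation['action']} on {operation['target']}"
```

Keeping each stage behind a plain callable makes it straightforward to swap a dummy app for a near-production environment, or one speech model for another, without touching the rest of the flow.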

In this sprint, the priority was “making it work” over perfection, with repeated testing on both dummy apps and a Remolink environment close to production.

Technical Insights: Intuitive Operation Through Voice × LLM

Voice instructions containing numerical values, such as:

- “Press button 01”

- “Move the 3rd arm”

were found to be prone to misrecognition by traditional STT (Speech-to-Text).

LLM-based speech recognition (GPT-4o-transcribe) takes the meaning of the entire sentence into account when transcribing. This produced highly accurate transcripts and demonstrated that the recognition accuracy required for practical remote operation is achievable.
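Even with an accurate transcript, a number can be written several ways (“1”, “01”, or full-width “０１” in Japanese text). As an illustration of the kind of post-processing that can help, and not a description of what was built in the sprint, the sketch below normalizes digits and matches them against known control labels. The function name and the label format are assumptions.

```python
import re
import unicodedata
from typing import List, Optional


def resolve_numbered_label(transcript: str, labels: List[str]) -> Optional[str]:
    """Return the one label whose number appears in the transcript, else None."""
    # NFKC folds full-width digits such as "０１" into ASCII "01".
    text = unicodedata.normalize("NFKC", transcript)
    spoken = {int(n) for n in re.findall(r"\d+", text)}
    matches = [
        label
        for label in labels
        if any(int(n) in spoken for n in re.findall(r"\d+", label))
    ]
    # Refuse to guess when nothing matches or the instruction is ambiguous.
    return matches[0] if len(matches) == 1 else None
```

Returning `None` for ambiguous input lets the application ask the user to repeat the instruction instead of pressing the wrong button.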

“Understanding AI” and Agent-Based Architecture

Instructions obtained from voice are interpreted by Azure OpenAI Service's GPT-4o/GPT-5.

- GPT-4o: Optimal for low-latency, conversational operations

- GPT-5: Capable of understanding complex metadata such as JSON exceeding 1,000 lines and performing advanced reasoning

Based on these characteristics, a design balancing UX and reasoning performance was adopted.
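A simple way to picture this balance is a router that sends short, conversational requests to the low-latency model and large or multi-step requests to the reasoning model. The sketch below is an assumption about how such a rule could look. The deployment names, the `needs_multi_step_plan` flag, and the use of a 1,000-line threshold as a cutoff are illustrative and not taken from the project.

```python
import json

FAST_MODEL = "gpt-4o"            # assumed deployment name
REASONING_MODEL = "gpt-5"        # assumed deployment name
MAX_FAST_METADATA_LINES = 1000   # illustrative cutoff


def choose_model(ui_metadata: dict, needs_multi_step_plan: bool = False) -> str:
    """Pick a model from the size of the UI metadata and the task type."""
    # Count lines of the pretty-printed JSON as a rough size measure.
    line_count = json.dumps(ui_metadata, indent=2).count("\n") + 1
    if needs_multi_step_plan or line_count > MAX_FAST_METADATA_LINES:
        return REASONING_MODEL
    return FAST_MODEL
```

A rule like this keeps everyday commands responsive while reserving the slower model for requests that need it.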

Furthermore, a multi-agent configuration using Azure AI Foundry Agent Service was trialed:

- Agents retain UI configuration, specifications, and constraints

- Agents convert natural language instructions into function calls and SDK operations

- Agents provide end-to-end support, from Q&A on SDK usage to code generation

The result is an architecture in which a “thinking AI” mediates between people and robots.

This represents a quintessential implementation pattern of Physical AI, where AI makes decisions and triggers physical actions.
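To make the step from natural language to function calls concrete, the sketch below declares one tool in the JSON Schema style commonly used for chat-model function calling and dispatches a tool call to an SDK object. Everything specific here is hypothetical: the Remolink SDK API is not described in this article, so `FakeSdk` and `set_camera_zoom` are stand-ins, and the model call that would produce the tool name and arguments is omitted.

```python
import json

# Tool declaration that would be sent to the model (hypothetical tool).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "set_camera_zoom",
            "description": "Set the zoom level of a network camera.",
            "parameters": {
                "type": "object",
                "properties": {
                    "camera_id": {"type": "string"},
                    "zoom": {"type": "number", "minimum": 1, "maximum": 10},
                },
                "required": ["camera_id", "zoom"],
            },
        },
    }
]


class FakeSdk:
    """Stand-in for a device SDK; records calls instead of moving hardware."""

    def __init__(self) -> None:
        self.log = []

    def set_camera_zoom(self, camera_id: str, zoom: float) -> str:
        self.log.append(("set_camera_zoom", camera_id, zoom))
        return f"{camera_id} zoom set to {zoom}"


def dispatch(tool_name: str, arguments_json: str, sdk: FakeSdk) -> str:
    """Execute a model-issued tool call, but only for declared tools."""
    allowed = {tool["function"]["name"] for tool in TOOLS}
    if tool_name not in allowed:
        raise ValueError(f"tool not declared: {tool_name!r}")
    arguments = json.loads(arguments_json)
    return getattr(sdk, tool_name)(**arguments)
```

For example, a tool call named `set_camera_zoom` with the arguments `{"camera_id": "cam-1", "zoom": 2.5}` would be routed to `FakeSdk.set_camera_zoom`, while any undeclared tool name is rejected.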

Results: The Value Revealed Through Prototyping

Voice-Based UI Operation Proven at a Practical Level

- Confirmed camera operation, form input, status changes, and more

Effectiveness of Generative AI for Code Generation and Operation Assistance

- Reduced SDK learning costs

- Significantly accelerated development speed

Strong Potential for Improved Accessibility

- The possibility of an operating experience that transcends differences in IT skills and physical constraints

Internal feedback included the assessment that “verification that would have previously taken 3 months was accomplished in just 5 days,” providing important insights for future product strategy.

From Kobe to Society — Future Outlook

This initiative goes beyond a mere proof of concept. Amid the major trends of:

- Labor shortages

- Growing demand for remote operations

- Social implementation of Physical AI (AI × the real world)

it clearly demonstrated the direction of “human-centered robot operation.”

By positioning generative AI not as “technology to make robots smarter” but as “a translator and assistant connecting people and robots,” a remote working environment where anyone can participate is becoming a realistic option.

Going forward, through:

- Product integration of voice × generative AI

- Collaboration with robot cells and digital twins

- Exhibition and external communication at the Kobe Lab

expansion into broader industrial domains is expected as a Physical AI use case originating from Japan.