
Fast Data Transfer

a high-speed data loader for Azure

Experiment complete

Thanks for the feedback!

This project is no longer supported by Microsoft. We recommend everyone use the latest version of AzCopy. Refer to the following text for more information.

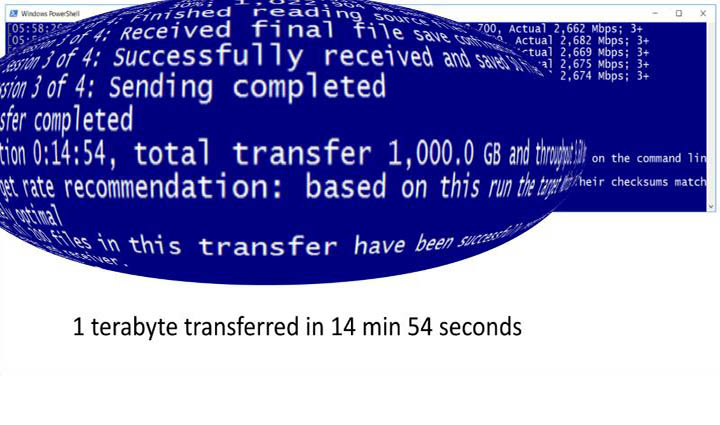

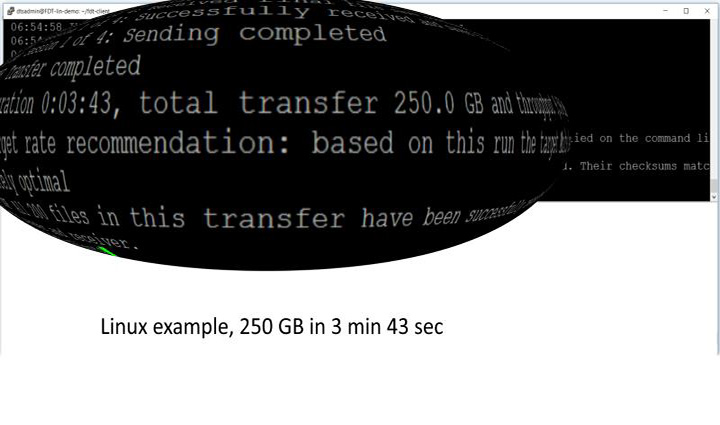

Fast Data Transfer is a tool for fast upload of data into Azure – up to 4 terabytes per hour from a single client machine. It moves data from your premises to Blob Storage, to a clustered file system, or direct to an Azure VM. It can also move data between Azure regions.

The tool works by maximizing utilization of the network link. It efficiently uses all available bandwidth, even over long-distance links. On a 10 Gbps link, it reaches around 4 TB per hour, which makes it about 3 to 10 times faster than competing tools we’ve tested. On slower links, Fast Data Transfer typically achieves over 90% of the link’s theoretical maximum, while other tools may achieve substantially less.

For example, on a 250 Mbps link, the theoretical maximum throughput is about 100 GB per hour. Even with no other traffic on the link, other tools may achieve substantially less than that. In the same conditions (250 Mbps, with no competing traffic) Fast Data Transfer can be expected to transfer at least 90 GB per hour. (If there is competing traffic on the link, Fast Data Transfer will reduce its own throughput accordingly, in order to avoid disrupting your existing traffic.)
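The arithmetic behind these figures can be sketched as a simple unit conversion. The function name `max_gb_per_hour` is hypothetical, and the sketch assumes decimal units (1 GB = 10^9 bytes) and ignores protocol overhead, so the raw ceiling it computes sits slightly above the "about 100 GB per hour" quoted above.

```python
def max_gb_per_hour(link_mbps: float) -> float:
    """Raw ceiling in decimal gigabytes per hour for a link rated in megabits per second.

    Assumption: no protocol overhead and no competing traffic.
    """
    bits_per_hour = link_mbps * 1_000_000 * 3600  # megabits/s -> bits/hour
    bytes_per_hour = bits_per_hour / 8            # bits -> bytes
    return bytes_per_hour / 1_000_000_000         # bytes -> decimal GB


if __name__ == "__main__":
    for mbps in (250, 10_000):
        ceiling = max_gb_per_hour(mbps)
        # 90% is the utilization figure the text quotes for Fast Data Transfer.
        print(f"{mbps} Mbps: ceiling {ceiling:.1f} GB/h, 90% of ceiling {0.9 * ceiling:.1f} GB/h")
```

For a 250 Mbps link this gives a raw ceiling of 112.5 GB per hour, and for a 10 Gbps link 4,500 GB per hour, which is in line with the "around 4 TB per hour" figure once real-world overhead is taken into account.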

Fast Data Transfer runs on Windows and Linux. Its client-side portion is a command-line application that runs on-premises, on your own machine. A single client-side instance supports up to 10 Gbps. Its server-side portion runs on Azure VM(s) in your own subscription. Depending on the target speed, between 1 and 4 Azure VMs are required. An Azure Resource Manager template is supplied to automatically create the necessary VM(s).

Update: May 2019

Following the recent release of AzCopy v10, we recommend that customers transferring data to Azure Storage try AzCopy v10 first. In most cases, AzCopy v10 is just as fast as Fast Data Transfer but easier to set up. As of May 2019, we recommend using Fast Data Transfer only if one of the following applies:

- Your files are very small (e.g. each file is only tens of KB).

- You have an ExpressRoute with private peering.

- You want to throttle your transfers to use only a set amount of network bandwidth.

- You want to load directly to the disk of a destination VM (or to a clustered file system). Most Azure data loading tools can’t send data directly to VMs. Tools such as Robocopy can, but they’re not designed for long-distance links. We have reports of Fast Data Transfer being over 10 times faster than such tools.

- You are reading from spinning hard disks and want to minimize the overhead of seek times. In our testing, we were able to double disk read performance by following the tuning tips in Fast Data Transfer’s instructions.

Meet the team

George Pollard, John Rusk, Dave Fellows

Azure Batch

New Zealand