Real-Time Operational Analytics Using In-Memory Technology

Operational workloads refer to the business transactions that are critical to running a business. For example, a retail store has a transactional system to create or modify orders, and a credit card company tracks all charges made by vendors on behalf of its customers. These transactional systems are critical to businesses, as any downtime or slowdown will have a direct impact on the business’s bottom line. Therefore, these systems are designed for performance and scalability and are configured for high availability. Equally important are the analytics that businesses use to answer questions such as, “What is the average time to fulfill an order?”

Most customers implement analytics by setting up a Data Warehouse on a different machine, similar to the configuration described in my recent post on using In-Memory technology, with a periodic flow of data through ETL (Extract, Transform and Load) from the operational system to the Data Warehouse. This approach of optimizing and isolating operational and analytics workloads has served well, but there are some drawbacks:

- Data Latency: At best, the data for analytics is only as current as the last ETL job. If the ETL job moves data once a day, your analytic queries are running on data up to 24 hours behind. More businesses are demanding analytics on live data to better support operations. For example, a retail chain could run analytics on transactional data to track real-time product sales and use this knowledge to offer discounts or replenish inventory in a timely fashion.

- Complexity: Migrating data from the operational system can be complex. For example, identifying what has changed since the last ETL operation is often difficult.

- Cost: You need to set up a separate server to host the Data Warehouse, which means another license for a database server and the overhead of creating and maintaining the ETL application.

Solution

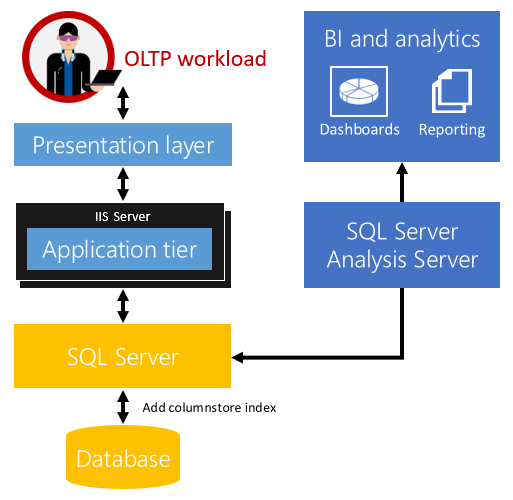

Real-Time Operational Analytics in SQL Server 2016 enables running analytics queries directly on your operational workload using columnstore indexes. The image shows one possible configuration using Analysis Server in Direct Query mode, but you can use any analytics tool or custom solution at your disposal. This solution addresses the drawbacks mentioned above since the analysis happens directly on the operational data.

For real-time operational analytics, take these steps:

- Identify the operational table(s) and the columns you need to run analytics on. This can be either a disk-based or memory-optimized table or both.

- Create the columnstore index on the tables identified. Note, starting with SQL Server 2016, the nonclustered columnstore index is now updateable. Your operational workload continues to work as before without requiring any application changes.

- Set up your analytics framework to source data directly from the operational store. The SQL Server query optimizer will automatically choose the columnstore index as needed for analytics queries.
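As a minimal sketch of the steps above on a disk-based table, the following T-SQL creates an operational table, adds an updatable nonclustered columnstore index, and runs the "average time to fulfill an order" query from the introduction. The table `dbo.SalesOrders`, its columns, and the index name are hypothetical and chosen only for illustration; your own schema will differ.

```sql
-- Step 1: a hypothetical operational table (all names here are assumptions).
CREATE TABLE dbo.SalesOrders (
    order_id      INT IDENTITY(1,1) NOT NULL PRIMARY KEY,
    customer_id   INT               NOT NULL,
    order_status  VARCHAR(20)       NOT NULL,
    order_date    DATETIME2         NOT NULL,
    shipped_date  DATETIME2         NULL,
    total_amount  DECIMAL(12,2)     NOT NULL
);

-- Step 2: add a nonclustered columnstore index on the columns analytics needs.
-- The OLTP application keeps inserting and updating rows as before.
CREATE NONCLUSTERED COLUMNSTORE INDEX SalesOrders_ncci
    ON dbo.SalesOrders (order_status, order_date, shipped_date, total_amount);

-- Step 3: an analytics query issued directly against the operational table.
-- The optimizer decides whether to use the columnstore index.
SELECT AVG(CAST(DATEDIFF(HOUR, order_date, shipped_date) AS FLOAT))
           AS avg_hours_to_fulfill
FROM dbo.SalesOrders
WHERE shipped_date IS NOT NULL;
```

Listing only the columns that analytics queries read keeps the index smaller; which columns those are depends on your workload.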

Not all workloads will fit this model. For example, a separate Data Warehouse is still needed if data needs to be aggregated from multiple sources for analytics.

While real-time operational analytics is promising, one may question its impact on the operational workload while delivering high performance analysis.

Minimizing impact on Operational Workload

SQL Server 2016 provides options to minimize the impact on operational workload as follows:

- Filtered Columnstore Index: For many operational workloads, the data is ‘hot’ (i.e., the target of DML operations) only for a short time, and then it transitions to a ‘warm’ or ‘cold’ state. For example, consider an online retail vendor where customers browse a catalog and place orders, and then these orders are shipped. An order is ‘hot’ while it is going through the steps of being processed, but once it is shipped it transitions into a ‘cold’ state since it may never get updated again. SQL Server allows you to create a columnstore index with a filter condition so that it covers only the ‘cold’ rows, which eliminates the overhead of maintaining the columnstore index on the ‘hot’ data. When an analytics query is run, SQL Server generates a query plan that accesses rows both from the columnstore index and from the rows that have been filtered out. An example is shown below:

CREATE NONCLUSTERED COLUMNSTORE INDEX <index-name> ON Orders (<list of columns>) WHERE order_status = 'Shipped'

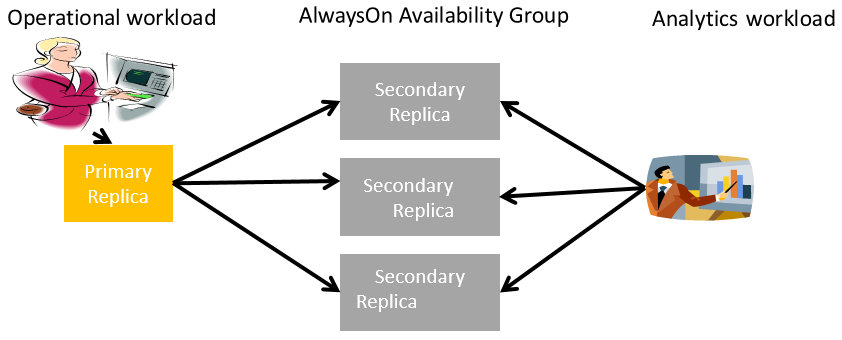

- Offloading Analytics Queries to Readable Secondary: As mentioned earlier, most operational workloads are mission critical and are likely to have high availability configured using AlwaysOn. You can choose to offload the analytics workload to a readable secondary to minimize the impact on the primary replica, as shown in the picture.
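To make the filtered columnstore index option above more concrete, here is a small sketch under stated assumptions: the `dbo.Orders` table definition, its columns, the index name, and the `'Packed'` status value are all hypothetical. It shows that updates to in-flight orders do not touch the columnstore index, while an analytics query still sees every row.

```sql
-- Hypothetical operational table (names and columns are assumptions).
CREATE TABLE dbo.Orders (
    order_id      INT           NOT NULL,
    order_status  VARCHAR(20)   NOT NULL,
    order_date    DATETIME2     NOT NULL,
    total_amount  DECIMAL(12,2) NOT NULL,
    CONSTRAINT PK_Orders PRIMARY KEY CLUSTERED (order_id)
);

-- The columnstore index covers only 'cold' rows, i.e. shipped orders.
CREATE NONCLUSTERED COLUMNSTORE INDEX Orders_ncci_shipped
    ON dbo.Orders (order_status, order_date, total_amount)
    WHERE order_status = 'Shipped';

-- A 'hot' order changes state; the row does not satisfy the filter,
-- so no columnstore maintenance is needed for this update.
UPDATE dbo.Orders
SET order_status = 'Packed'
WHERE order_id = 42;

-- The analytics query is written against the table as usual; SQL Server
-- combines rows from the columnstore index with the filtered-out rows.
SELECT order_status,
       COUNT(*)          AS order_count,
       SUM(total_amount) AS revenue
FROM dbo.Orders
GROUP BY order_status;
```

The filter predicate should match the point at which rows stop changing in your workload; `order_status = 'Shipped'` is simply the condition used in the example above.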

Analytics query performance

Running analytics on an operational schema, which is highly normalized, will not perform as well as running it on a schema optimized for analytics (such as a star schema). However, a columnstore index speeds up analytics queries significantly, which helps compensate for the extra complexity (more joins) in the query.

Microsoft Dynamics AX, currently in public preview, leverages SQL Server 2016 with real-time operational analytics.

Summary

SQL Server 2016 supports real-time operational analytics on both disk-based and memory-optimized tables, so you can leverage it without any changes to your applications. With the combination of memory-optimized tables and columnstore indexes, you get the best of OLTP performance and analytics query performance. Columnstore Indexes for Real-Time Operational Analytics provides more detail about this solution.