Microsoft is committed to empowering our users

At Microsoft, our aim is to empower our users by advancing digital safety and addressing illegal and harmful content and conduct, while respecting rights like privacy, freedom of expression, and access to information. Digital safety is a whole-of-society responsibility, so we work with industry partners, civil society, and other stakeholders to address complex online harms.

How do we keep people safe?

We take a multi-pronged approach to fostering digital safety. We set rules and policies for the use of Microsoft products and services and then remove user content or take action against user conduct that breaks our policies. We also need you to tell us if you have concerns about something you saw or experienced on a Microsoft service.

- Microsoft has a Service Agreement that includes a Code of Conduct. This Code of Conduct tells you what you can and can’t do when using a Microsoft service.

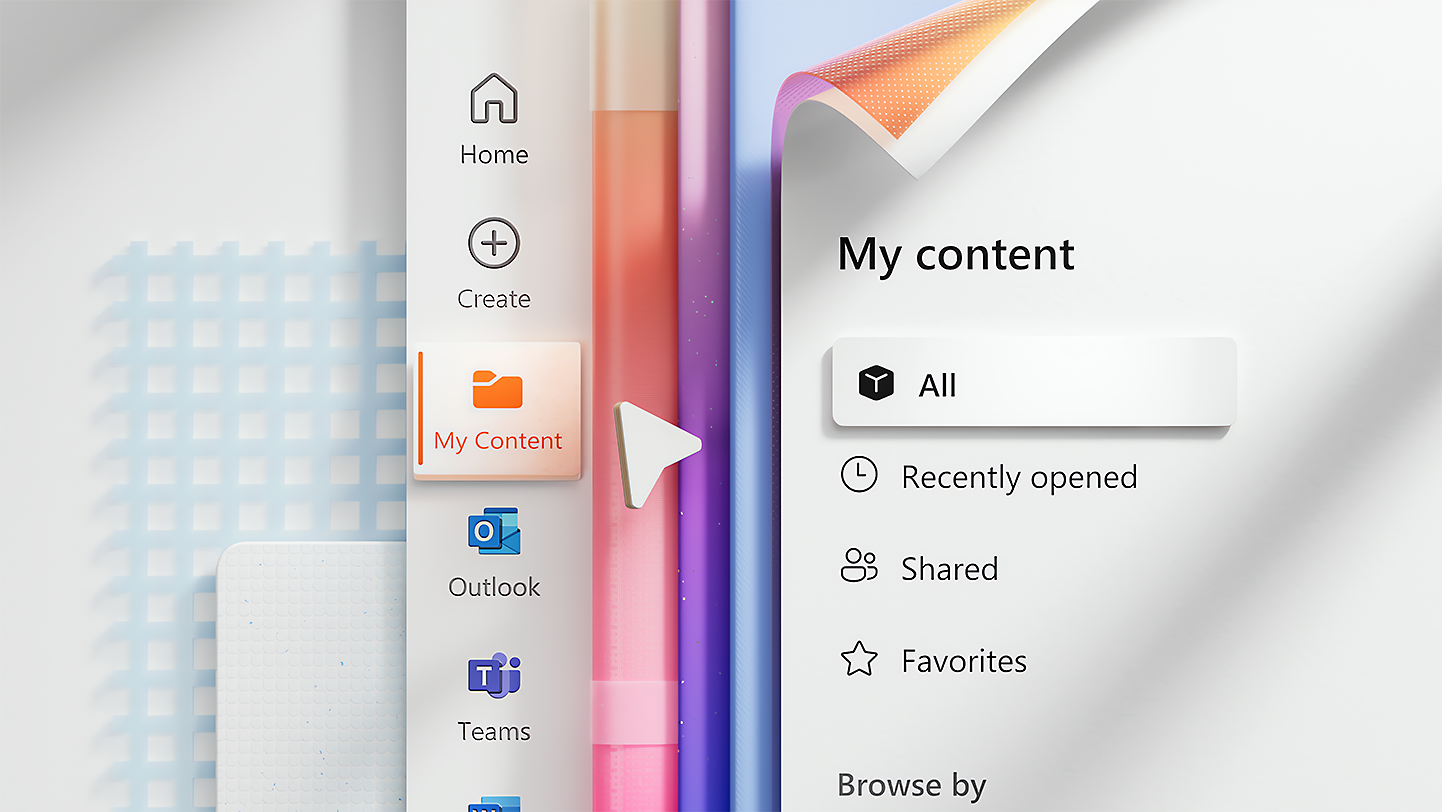

- You create a Microsoft account to access Microsoft services like Xbox and Teams.

- Our guidelines give more detail about the rules and how we apply them.

- Different Microsoft services may have additional policies and community standards.

Staying safe online

Empowering our users includes helping people understand the risks they may face online and ways in which they can protect themselves and their families.

- Check out our annual research on online safety risks and experiences in the Global Online Safety Survey results.

- Review our resources for users, which include material to help you and your family stay safe online.

- Introduce your child to our approach to safety by visiting digital safety for young people.

Policies

Microsoft content and conduct policies explain what is not allowed on our services.

Moderation & enforcement

We use automated technology and trained human reviewers to flag and act on content that may violate our policies.

Transparency reports

Microsoft regularly publishes transparency reports to provide visibility into our approach and the actions we have taken.