How to build and deliver an effective data strategy: part 3

In part one of this three-part blog series, we took a detailed look at the importance of building a data strategy. We started laying out the steps to build a successful strategy that fosters a data-driven culture and aligns with business outcomes. In part two we covered capability and maturity models. In this final blog we’re focussing on how you can execute a strategy by taking a principled approach to the end-to-end data lifecycle and aligning technology choices accordingly. We’ll also look at the importance of ethics and responsibility in data and analytics.

Take a principled approach to your data strategy

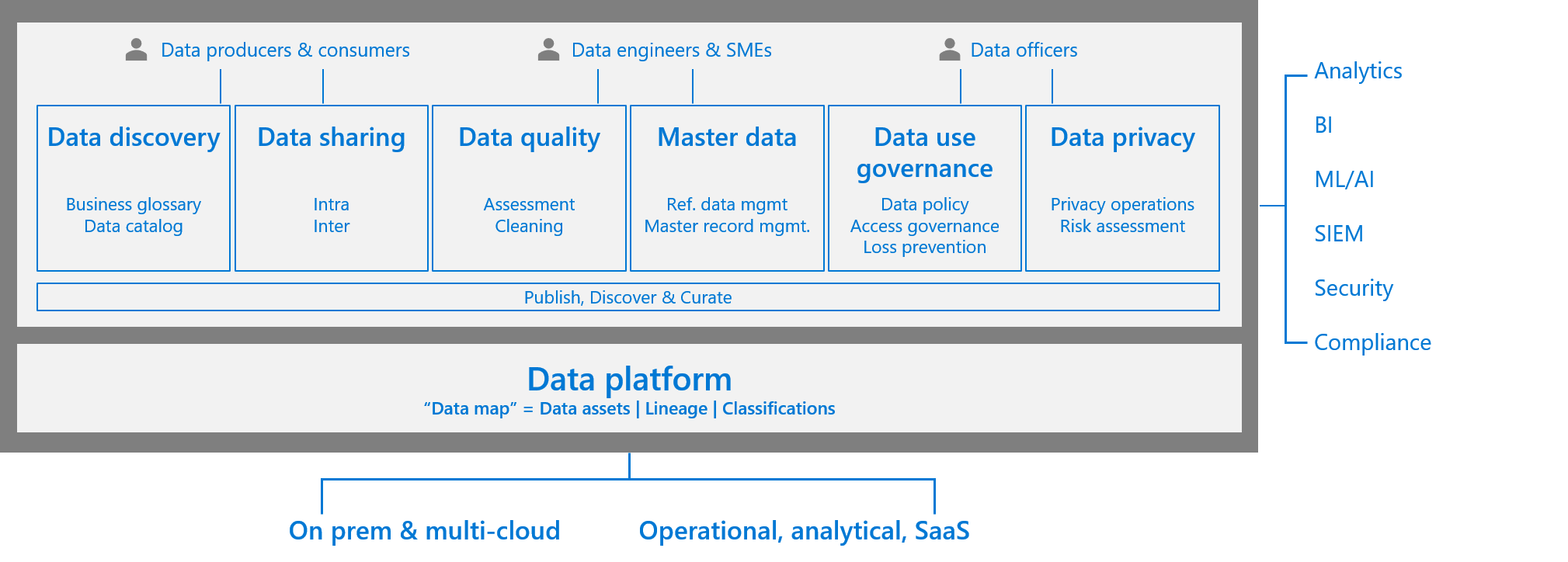

With data governance in mind, the accompanying diagram shows the essential stages of the data lifecycle. For more detail, please consult the attached document, “A Guide to Data Governance”. An effective data strategy must make provision for data governance: the two are mutually inclusive, but they are not the same thing.

For the purpose of this blog, let’s take a closer look at the considerations when building design principles for the four layers that are key to your data strategy, with a focus on delivering business outcomes and value from data.

1. Data ingestion

A key consideration for data ingestion is the ability to build a data pipeline extremely fast, from requirements to production, in a secure and compliant manner. Metadata-driven, self-service, low-code technologies for hydrating your data lake are key. When building a pipeline, consider its design, the ability to do data wrangling and to scale compute, and its data distribution capabilities. In addition, having the right DevOps support for the continuous integration and delivery of your pipeline is critical. Tools such as Azure Data Factory support a plethora of data sources: on-premises, Software as a Service (SaaS) and other public clouds. Having such agility from the get-go is always helpful.
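To make the metadata-driven idea concrete, here is a minimal Python sketch. It is not tied to Azure Data Factory or any specific tool: the `SourceSpec` fields, the `PARSERS` registry and the in-memory `lake` dictionary are all hypothetical names chosen for illustration. The point it tries to show is that onboarding a new source means adding a metadata entry rather than writing new pipeline code.

```python
import csv
import io
import json
from dataclasses import dataclass
from typing import Callable, Dict, List

Record = Dict[str, object]


@dataclass(frozen=True)
class SourceSpec:
    """Metadata describing one source (hypothetical fields, for illustration)."""
    name: str    # key used to look up the raw payload
    fmt: str     # payload format: "csv" or "jsonl"
    target: str  # destination dataset name in the lake


def parse_csv(text: str) -> List[Record]:
    # DictReader keeps every value as a string; typing is a later step.
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


def parse_jsonl(text: str) -> List[Record]:
    return [json.loads(line) for line in text.splitlines() if line.strip()]


# Registry of parsers keyed by format name; new formats are added here once.
PARSERS: Dict[str, Callable[[str], List[Record]]] = {
    "csv": parse_csv,
    "jsonl": parse_jsonl,
}


def ingest(specs: List[SourceSpec], raw: Dict[str, str]) -> Dict[str, List[Record]]:
    """Load every source described by the metadata into an in-memory 'lake'."""
    lake: Dict[str, List[Record]] = {}
    for spec in specs:
        parser = PARSERS[spec.fmt]  # a KeyError here flags an unsupported format
        lake[spec.target] = parser(raw[spec.name])
    return lake


specs = [
    SourceSpec(name="orders", fmt="csv", target="raw/orders"),
    SourceSpec(name="clicks", fmt="jsonl", target="raw/clicks"),
]
raw_payloads = {
    "orders": "order_id,amount\n1,9.99\n2,24.50\n",
    "clicks": '{"user": "a", "page": "/home"}\n{"user": "b", "page": "/cart"}\n',
}
lake = ingest(specs, raw_payloads)
```

In a real platform the metadata would typically live in a configuration store and the payloads would come from connectors, but the shape of the loop stays the same.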

2. Storage

Data needs to be tagged and organised in physical and logical layers. Data lakes are key in all modern data analytics architectures. The Hitchhiker’s Guide to the Data Lake is an excellent resource for understanding the different considerations that companies need to make. Organisations need to apply appropriate data privacy, security and compliance requirements based on data classification and the compliance requirements of the industry they operate in. The other key considerations are cataloguing and self-service, which aid organisation-level data democratisation to fuel innovation, guided by appropriate access control.
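As a rough illustration of tagging data by layer and classification, and of letting access control follow from the tags, consider the following Python sketch. The classification levels, the `CatalogEntry` fields and the example paths are assumptions made up for this example; they do not represent any particular catalogue product or compliance scheme.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Classification(IntEnum):
    """Hypothetical sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class CatalogEntry:
    path: str                       # physical location in the lake
    layer: str                      # logical layer, e.g. "raw" or "curated"
    classification: Classification  # tag that drives access decisions


def readable_paths(catalog: List[CatalogEntry], clearance: Classification) -> List[str]:
    """Return the paths a user with the given clearance is allowed to read."""
    return [entry.path for entry in catalog if entry.classification <= clearance]


catalog = [
    CatalogEntry("raw/web_clicks", "raw", Classification.INTERNAL),
    CatalogEntry("curated/product_list", "curated", Classification.PUBLIC),
    CatalogEntry("curated/customer_pii", "curated", Classification.RESTRICTED),
]

analyst_view = readable_paths(catalog, Classification.INTERNAL)
steward_view = readable_paths(catalog, Classification.RESTRICTED)
```

Here an analyst with `INTERNAL` clearance can discover and read the first two datasets, while the restricted dataset stays visible only to users with the highest clearance.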

Choosing the right storage based on the workload is key, but even if you don’t get it right the first time, the cloud gives you options to fail over quickly and to restart the journey reasonably easily. You can choose the right database based on your application requirements. When choosing an analytics platform, make sure you consider its ability to process both batch and streaming data.

3. Data processing

Your data processing needs will vary according to workload. For example, most big data processing has elements of both real-time and batch processing. Most enterprises also have time series processing requirements and need to process free-form text for enterprise search capabilities.
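The difference between batch and real-time processing can be sketched in a few lines of Python. This is a toy illustration only: the event shape (a key and an amount) and the function names are assumptions, and a production system would use a dedicated batch or streaming engine. The batch function sees the whole dataset at once, while the streaming function emits an updated result after each event.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple

Event = Tuple[str, float]  # (key, value), e.g. (region, sale_amount)


def batch_totals(events: Iterable[Event]) -> Dict[str, float]:
    """Process the complete dataset and return one final result."""
    totals: Dict[str, float] = defaultdict(float)
    for key, value in events:
        totals[key] += value
    return dict(totals)


def streaming_totals(events: Iterable[Event]) -> Iterator[Dict[str, float]]:
    """Process events one at a time, yielding a snapshot after each event."""
    totals: Dict[str, float] = defaultdict(float)
    for key, value in events:
        totals[key] += value
        yield dict(totals)  # copy, so earlier snapshots are not mutated


events = [("north", 10.0), ("south", 5.0), ("north", 2.5)]

batch_result = batch_totals(events)
snapshots = list(streaming_totals(events))
```

Both paths apply the same aggregation logic, so the last streaming snapshot matches the batch result; what differs is when answers become available.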

The most common organisational processing requirements come from Online Transaction Processing (OLTP). Certain workloads need specialised processing such as High Performance Computing (HPC), also called ‘Big Compute’, which uses many CPU or GPU-based computers to solve complex mathematical tasks.

For certain specialised workloads, customers can use secure execution environments such as Azure confidential computing, which helps users secure data while it’s ‘in use’ on public cloud platforms (a state required for efficient processing). The data is protected inside a Trusted Execution Environment (TEE), also known as an enclave. This protects the code and data against viewing and modification from outside the TEE. It can also make it possible to train AI models using data sources from different organisations without sacrificing data confidentiality.

4. Analytics

The extract, transform, load (ETL) construct, or ELT depending on where the transform happens, relates to online analytical processing (OLAP) and data warehousing needs. One of the useful capabilities here is the ability to automatically detect schema drift. Consider end-to-end architectures like automated enterprise BI with Azure Synapse Analytics and Azure Data Factory. To support advanced analytics, including machine learning and AI capabilities, it is key to consider reusing platform technologies already in use for other processing requirements of a similar nature. Here is a quick start guide with a working example for end-to-end processing.
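To illustrate what detecting schema drift involves, here is a small Python sketch that compares an incoming record against an expected schema. It is a hand-written illustration, not how Azure Data Factory or Azure Synapse Analytics implement the feature; the `SchemaDrift` type, the function name and the example fields are all assumptions.

```python
from typing import Dict, List, NamedTuple


class SchemaDrift(NamedTuple):
    added: List[str]    # fields present in the record but not in the schema
    removed: List[str]  # fields the schema expects but the record lacks
    retyped: List[str]  # fields whose value has an unexpected type


def detect_schema_drift(expected: Dict[str, type], record: Dict[str, object]) -> SchemaDrift:
    """Compare one record with the expected field names and types."""
    expected_names = set(expected)
    record_names = set(record)
    retyped = sorted(
        name
        for name in expected_names & record_names
        if not isinstance(record[name], expected[name])
    )
    return SchemaDrift(
        added=sorted(record_names - expected_names),
        removed=sorted(expected_names - record_names),
        retyped=retyped,
    )


expected_schema = {"order_id": int, "amount": float, "currency": str}
incoming = {"order_id": "A-17", "amount": 9.99, "channel": "web"}

drift = detect_schema_drift(expected_schema, incoming)
has_drift = any(drift)  # True if any of the three lists is non-empty
```

A pipeline could use a report like this to decide whether to pass new columns through, quarantine the record or alert the data owner.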

Common examples include batch processing on Databricks, with R or Python, or for deep learning models. Compute, storage, networking, orchestration and DevOps/MLOps are key considerations here. For super large models, look at distributed training of deep learning models on Azure or the Turing Project. You also need to consider the ability to deal with data and model drift.
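One way to quantify data drift is the Population Stability Index (PSI), which compares how a feature is distributed in a reference sample and in current data. The sketch below is a plain Python illustration of that idea under simple assumptions (equal-width bins taken from the reference data, a small `eps` to avoid dividing by zero); it is one common technique, not necessarily the one used by any specific Azure service, and the function names are hypothetical.

```python
import math
from typing import List, Sequence


def proportions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values per bin; out-of-range values fall into the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        for i in range(len(edges) - 1):
            if v >= edges[i]:
                idx = i
        counts[idx] += 1
    return [c / len(values) for c in counts]


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 4,
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (cur - ref) * ln(cur / ref)."""
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference data must contain more than one distinct value")
    width = (hi - lo) / bins
    edges = [lo + i * width for i in range(bins + 1)]
    ref = proportions(reference, edges)
    cur = proportions(current, edges)
    # eps keeps the logarithm defined when a bin is empty
    return sum((c - r) * math.log((c + eps) / (r + eps)) for r, c in zip(ref, cur))


reference = [1, 2, 3, 4, 5, 6, 7, 8]
shifted = [6, 7, 7, 8, 8, 8, 9, 9]

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
```

Identical distributions give a PSI of zero, and the value grows as the current data moves away from the reference. The threshold at which to retrain or investigate is a judgement call for each model.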

Azure Enterprise Ready Analytics Architecture helps bring all four layers together with people, process, security, and compliance. We also suggest using the recommended architectures from Azure Landing Zones to get started. It uses the Microsoft Cloud Adoption Framework and is the culmination of our experience working through thousands of large-scale enterprise deployments.

Now that we have covered the four stages, the following representation shows the key capabilities needed on top of your data platform to provide end-to-end data governance capability.

Figure 1: End-to-end unified data governance strategy.

Here are some resources to help you build a data strategy that accounts for governance and also allows for seamless innovation.

- A proactive approach to privacy includes capabilities such as data catalog and stewardship, and data lineage.

- Map business glossary and industry models to fuel data pipelines.

- A common data model (CDM) is often the key to success.

- Industry accelerators contribute to agility in an organisation’s ability to deliver transformations and sustain innovations.

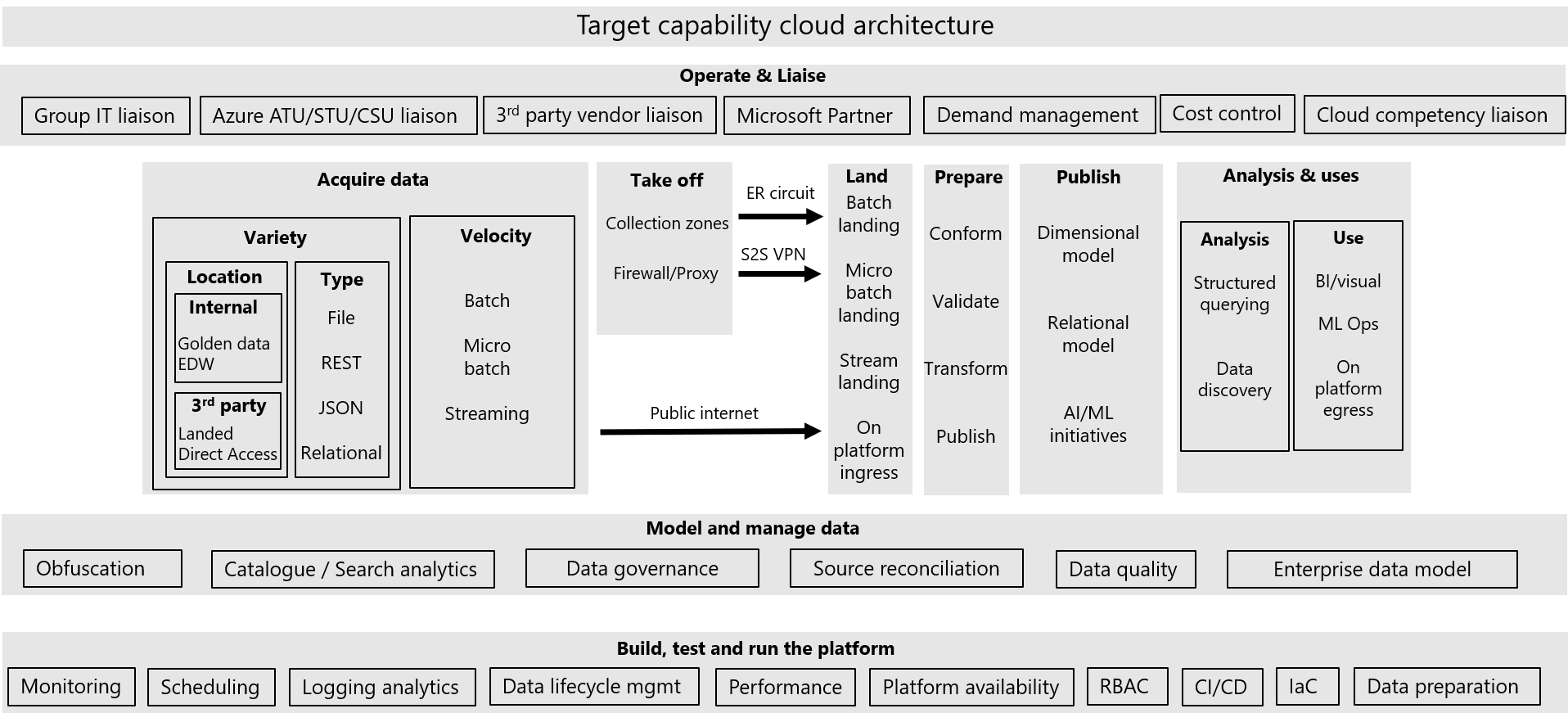

After making all the capability provisions, and taking a principled architectural view as discussed in this section, you will most likely end up with the building blocks required for your cloud strategy journey, which may look something like this:

Figure 2: Target capability cloud architecture.

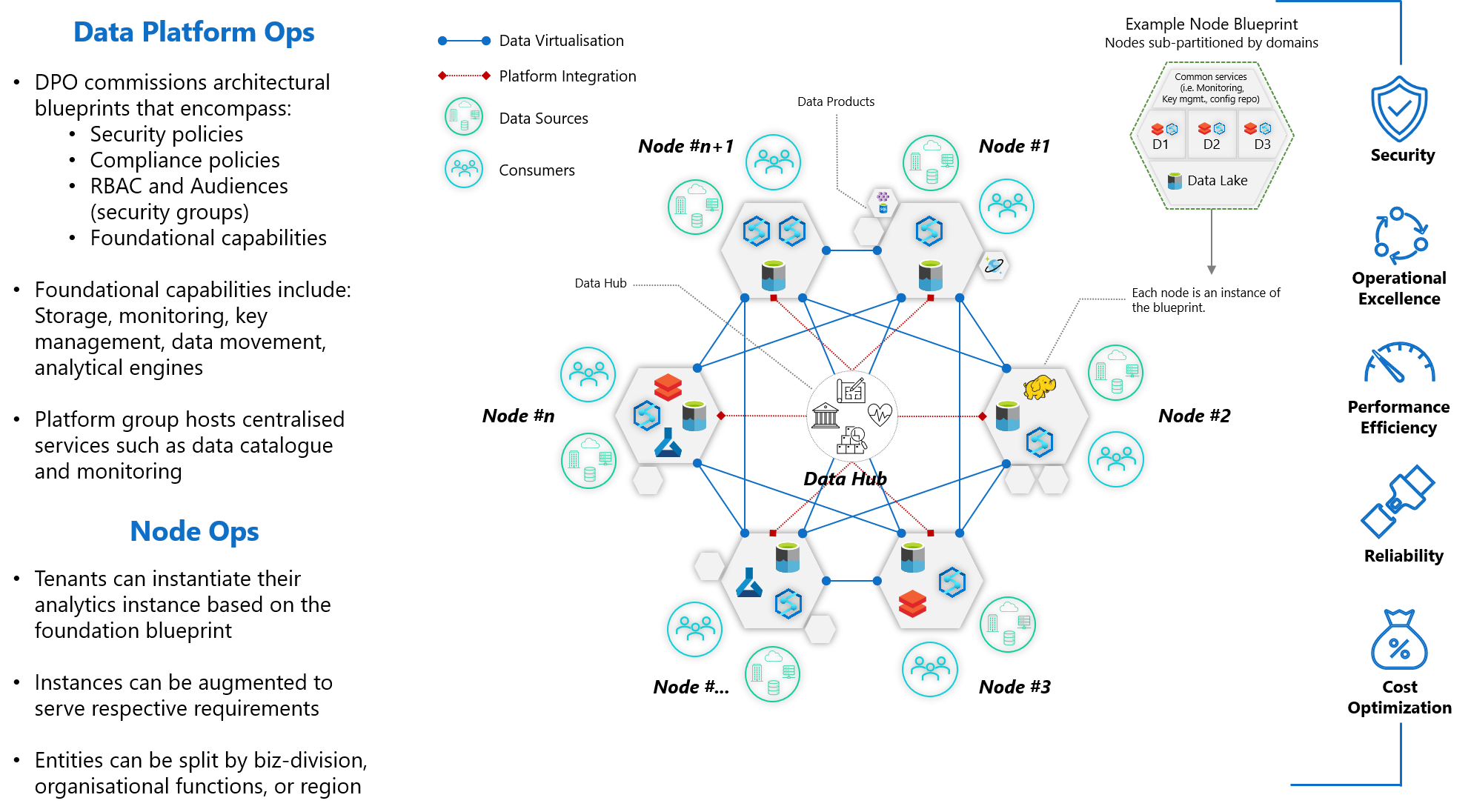

An equivalent architectural representation on Azure may look like this:

Figure 3: Enterprise Harmonised Mesh Data Architecture to foster innovation through analytics and insights in a secure and compliant manner.

A good data strategy values ethics and responsibility

Taking a principled approach to additional but very relevant considerations, such as data governance and responsible AI, will pay dividends later.

At Microsoft, we use four core principles: fairness, reliability and safety, privacy and security, and inclusiveness. Underpinning these are two foundational principles: transparency and accountability. As we move from principle to practice, we’re sharing our learnings to help you on your journey. We put responsible AI and our principles into practice through the development of resources and a system of governance. Some of our guidelines focus on human-AI interaction, conversational AI, inclusive design, an AI fairness checklist, and a datasheet for datasets.

In addition to practices, we’ve developed a set of tools to help others understand, protect, and control AI at every stage of innovation. Our tools are the result of collaboration across various disciplines to strengthen and accelerate responsible AI, spanning software engineering and development, social sciences, user research, law, and policy.

To further collaboration, we have also open-sourced many tools, such as InterpretML and Fairlearn, that others can contribute to and build upon, alongside democratising tools through Azure Machine Learning.
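To give a flavour of what a fairness assessment measures, the following Python sketch computes per-group selection rates and the gap between them, often called the demographic parity difference. It is written by hand for illustration and does not use the Fairlearn API; the function names and the toy data are assumptions, and this is only one of many possible fairness metrics.

```python
from collections import defaultdict
from typing import Dict, Sequence


def selection_rates(predictions: Sequence[int], groups: Sequence[str]) -> Dict[str, float]:
    """Fraction of positive (1) predictions within each group."""
    positives: Dict[str, int] = defaultdict(int)
    counts: Dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        counts[group] += 1
        positives[group] += pred
    return {group: positives[group] / counts[group] for group in counts}


def demographic_parity_difference(predictions: Sequence[int], groups: Sequence[str]) -> float:
    """Gap between the highest and lowest group selection rate (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Toy data: 1 = model approves, 0 = model declines.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)
```

In this toy data the model approves three in four cases for group `a` but only one in four for group `b`, a gap that would prompt a closer look at the model and its training data.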

Capture the data opportunity

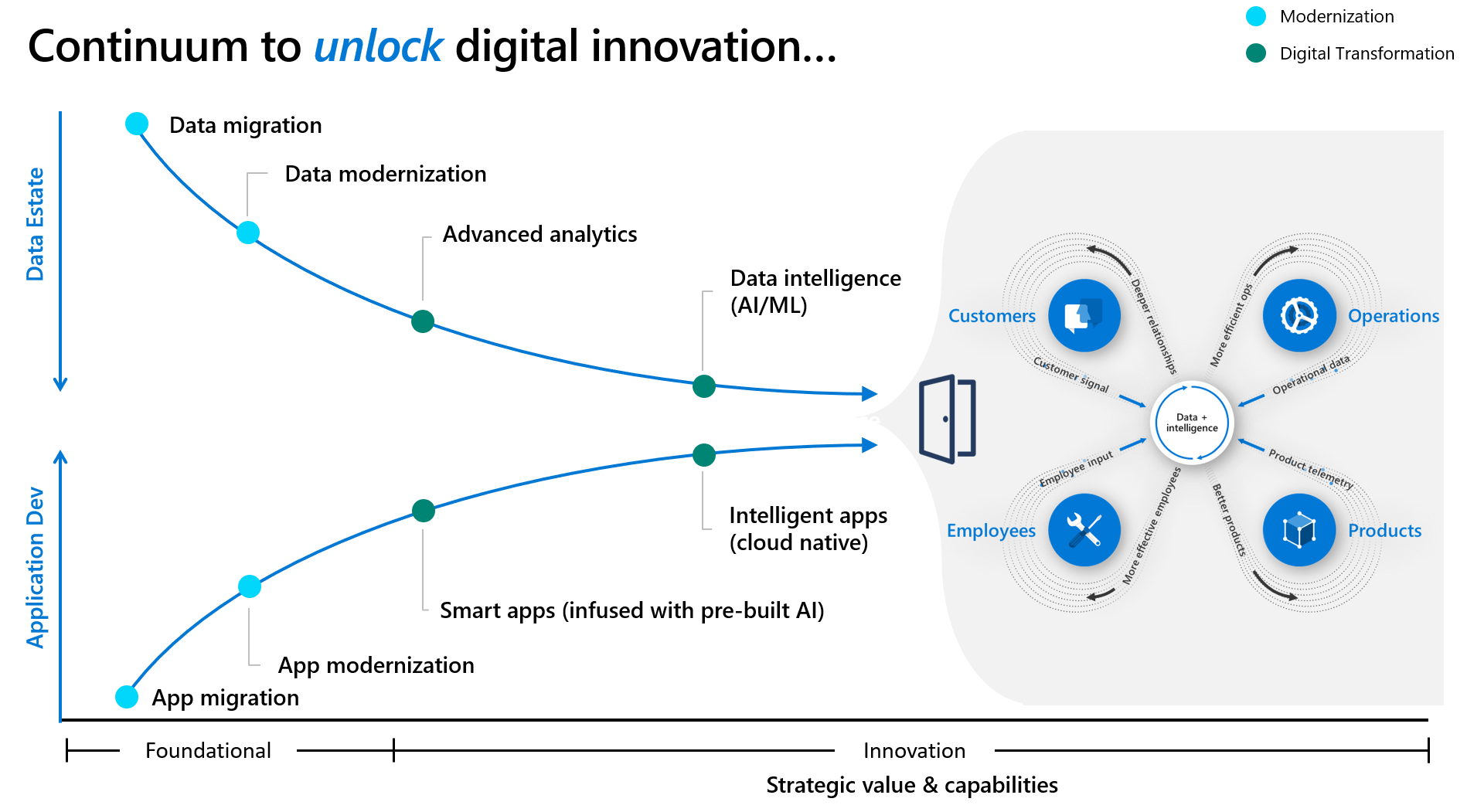

The pivot to becoming a data-driven organisation is fundamental to delivering competitive advantage in the new normal. We want to help our customers shift from an application-only approach to an application and data-led approach, helping to create an end-to-end data strategy that can ensure repeatability and scalability across current and future use cases that impact business outcomes.

Figure 4: Data Strategy Continuum to unlock digital innovation.

Having worked with thousands of customers across different industries and of varying complexity, we can pick out optimal patterns and tailor transformation plans to accelerate time to value. Be it retail, financial services, manufacturing, health care or public sector, we have the industry knowledge and deep domain expertise to help you build a resilient data strategy and culture.

Let’s get started. Contact your account team at Microsoft or connect with a Microsoft Partner.

Find out more

Download: A Guide to Data Governance

How to build and deliver an effective data strategy: part 1

How to build and deliver an effective data strategy: part 2

Driving effective data governance for improved quality and analytics

Powering digital transformation at Microsoft with Modern Data Foundations

Designing a modern data catalog at Microsoft to enable business insights

Resources for your development team

Learn how to manage identities and governance in Azure

About the author

Pratim Das is the Director of Data & AI Architecture and CDO Advisory at Microsoft UK. Pratim and his team’s mission is to work alongside their customers in delivering insights and, most importantly, value from data to achieve great business outcomes. Be it retail, financial services, manufacturing, health care or public sector, they have the industry knowledge and deep domain expertise to build a resilient data culture and customer capability. Pratim’s special interests are operational excellence for petabyte-scale analytics and design patterns covering “good data architecture”, including governance, catalogue, privacy and data democratisation in a secure and compliant manner. Pratim brings over 20 years of experience, both as a customer and as a technology vendor building Data & AI services, with a key focus on building capability, products and solutions that foster a data-driven culture.
