Anyone who uses AI systems knows the frustration: a prompt is given, the response misses the mark, and the cycle repeats. This trial-and-error loop can feel unpredictable and discouraging. To address this, we are excited to introduce Promptions (prompt + options), a UI framework that helps developers build AI interfaces with more precise user control.

Its simple design makes it easy to integrate into any setting that relies on added context, including customer support, education, and medicine. Promptions is available under the MIT license on Microsoft Foundry Labs and GitHub.

Background

Promptions builds on our research, “Dynamic Prompt Middleware: Contextual Prompt Refinement Controls for Comprehension Tasks.” This project examined how knowledge workers use generative AI when their goal is to understand rather than create. While much public discussion centers on AI producing text or images, understanding involves asking AI to explain, clarify, or teach—a task that can quickly become complex. Consider a spreadsheet formula: one user may want a simple syntax breakdown, another a debugging guide, and another an explanation suitable for teaching colleagues. The same formula can require entirely different explanations depending on the user’s role, expertise, and goals.

A great deal of complexity sits beneath these seemingly simple requests. Users often find that the way they phrase a question doesn’t match the level of detail the AI needs. Clarifying what they really want can require long, carefully worded prompts that are tiring to produce. And because the connection between natural language and system behavior isn’t always transparent, it can be difficult to predict how the AI will interpret a given request. In the end, users spend more time managing the interaction itself than understanding the material they hoped to learn.

Identifying how users want to guide AI outputs

To explore why these challenges persist and how people can better steer AI toward customized results, we conducted two studies with knowledge workers across technical and nontechnical roles. Their experiences highlighted important gaps that guided Promptions’ design.

Our first study involved 38 professionals across engineering, research, marketing, and program management. Participants reviewed design mock-ups that provided static prompt-refinement options—such as length, tone, or start with—for shaping AI responses.

Although these static options were helpful, they couldn’t adapt to the specific formula, code snippets, or text the participant was trying to understand. Participants also wanted direct ways to customize the tone, detail, or format of the response without having to type instructions.

Why dynamic refinement matters

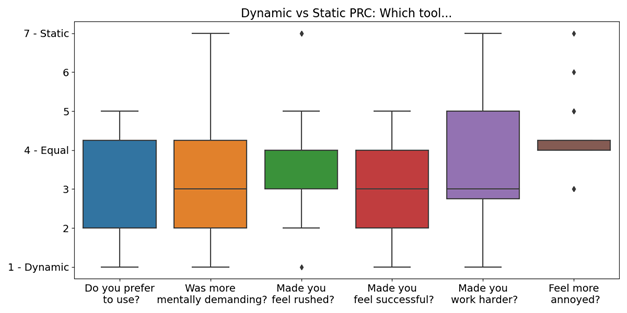

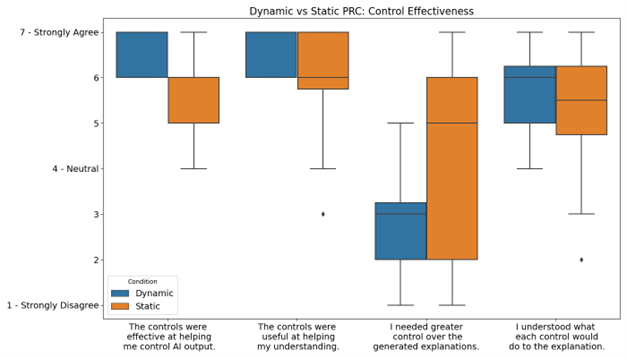

The second study tested prototypes in a controlled experiment. We compared the static design from the first study, called the “Static Prompt Refinement Control” (Static PRC), against a “Dynamic Prompt Refinement Control” (Dynamic PRC) with features that responded to participants’ feedback. Sixteen technical professionals familiar with generative AI completed six tasks, spanning code explanation, understanding a complex topic, and learning a new skill. Each participant tested both systems, with task assignments balanced to ensure fair comparison.

Comparing Dynamic PRC to Static PRC revealed key insights into how dynamic prompt-refinement options change users’ sense of control and exploration and how those options help them reflect on their understanding.

Static prompt refinement

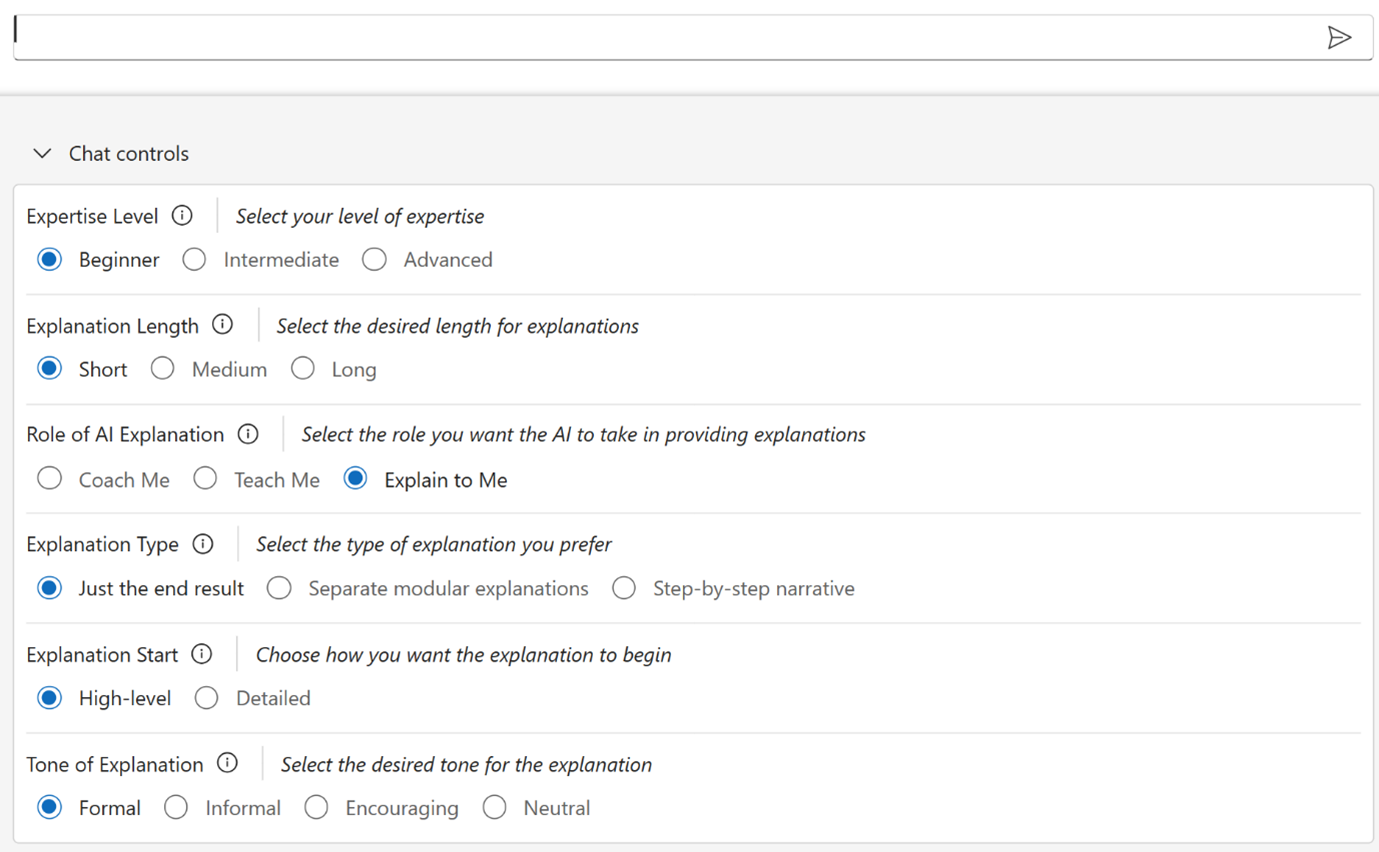

Static PRC offered a set of pre‑selected controls (Figure 1) identified in the initial study. We expected these options to be useful across many types of explanation-seeking prompts.

Dynamic prompt refinement

We built the Dynamic PRC system to automatically produce prompt options and refinements based on the user’s input, presenting them in real time so that users could adjust these controls and guide the AI’s responses more precisely (Figure 2).

![Alt text: How users interacted with the Dynamic PRC system. (1) shows a user input prompt of “Explain the formula” [with a long Excel formula] (2) Three rows of options relating to this prompt, Explanation Detail Level, Focus Areas, and Learning Objectives, with several options for each, preselected (3) User has modified the preselected options by clicking Troubleshooting under Learning Objectives (4) AI response of an explanation for the formula based on the selected options (5) Session chat control panel with text box that the user adds](https://www.microsoft.com/en-us/research/wp-content/uploads/2025/12/blog-fig03-dynamicprc-1.png)
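To make the idea of generated controls more concrete, here is a minimal sketch of how an application might validate a model-produced option list before rendering it. The JSON shape, the field names (`label`, `kind`, `choices`, `default`), and the function `parse_option_spec` are all assumptions made for illustration. They are not taken from the Dynamic PRC prototype or the Promptions codebase.

```python
import json

# Hypothetical control kinds; an actual system may support others.
ALLOWED_KINDS = {"radio", "checkbox", "text"}


def parse_option_spec(raw: str) -> list[dict]:
    """Parse a model-produced option list and drop malformed entries."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(data, list):
        return []

    controls = []
    for item in data:
        if not isinstance(item, dict):
            continue
        label = item.get("label")
        kind = item.get("kind")
        if not isinstance(label, str):
            continue
        if not isinstance(kind, str) or kind not in ALLOWED_KINDS:
            continue

        raw_choices = item.get("choices")
        if not isinstance(raw_choices, list):
            raw_choices = []
        choices = [c for c in raw_choices if isinstance(c, str)]
        # Radio and checkbox controls are useless without choices.
        if kind != "text" and not choices:
            continue

        raw_default = item.get("default")
        if not isinstance(raw_default, list):
            raw_default = []
        # Keep only preselected values that are real choices.
        default = [d for d in raw_default if d in choices]

        controls.append(
            {"label": label, "kind": kind, "choices": choices, "default": default}
        )
    return controls
```

Validating before rendering means an unexpected model response degrades to fewer controls rather than a broken interface.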


Findings

Participants consistently reported that dynamic controls made it easier to express the nuances of their tasks without repeatedly rephrasing their prompts. This reduced the effort of prompt engineering and allowed users to focus more on understanding content than managing the mechanics of phrasing.

Contextual options prompted users to try refinements they might not have considered on their own. This behavior suggests that Dynamic PRC can broaden how users engage with AI explanations, helping them uncover new ways to approach tasks beyond their initial intent. Beyond exploration, the dynamic controls prompted participants to think more deliberately about their goals. Options like “Learning Objective” and “Response Format” helped them clarify what they needed, whether guidance on applying a concept or step-by-step troubleshooting help.

While participants valued Dynamic PRC’s adaptability, they also found it more difficult to interpret. Some struggled to anticipate how a selected option would influence the response, noting that the controls seemed opaque because the effect became clear only after the output appeared.

However, the overall positive response to Dynamic PRC showed us that Promptions could be broadly useful, leading us to share it with the developer community.

Technical design

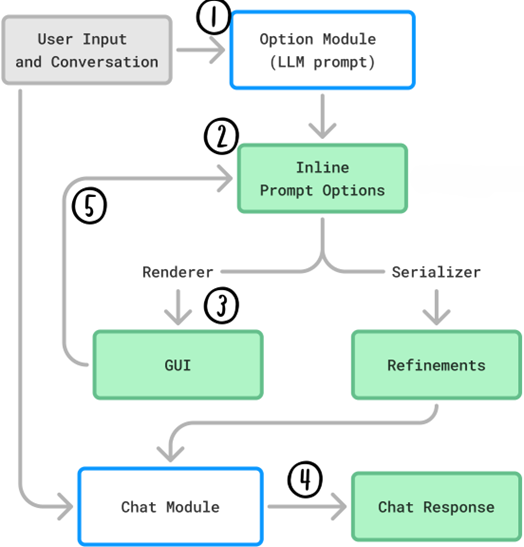

Promptions works as a lightweight middleware layer that sits between the user and the underlying language model (Figure 5). It has two main components:

Option Module. This module reviews the user’s prompt and conversation history, then generates a set of refinement options. These are presented as interactive UI elements (radio buttons, checkboxes, text fields) that directly shape how the AI interprets the prompt.

Chat Module. This module produces the AI’s response based on the refined prompt. When a user changes an option, the response immediately updates, making the interaction feel more like an evolving conversation than a cycle of repeated prompts.
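The two-module flow can be sketched roughly as follows. This is an illustrative outline under stated assumptions, not the Promptions implementation. The names `Option`, `option_module`, `refine_prompt`, and `chat_module` are hypothetical, the option generator is a fixed stub standing in for a language-model call, and `llm` is any function that maps a prompt string to a response string. The control labels echo the ones shown in the Dynamic PRC figure, while the individual choices are invented.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Option:
    """One refinement control, such as a radio group or a checkbox set."""
    label: str
    kind: str                      # hypothetical values: "radio", "checkbox", "text"
    choices: list[str]
    selected: list[str] = field(default_factory=list)


def option_module(prompt: str, history: list[str]) -> list[Option]:
    """Stand-in for the Option Module.

    A real version would ask a language model to propose options from the
    prompt and history. This stub returns fixed options so it runs offline.
    """
    return [
        Option("Explanation Detail Level", "radio",
               ["Brief", "Step-by-step", "In-depth"], ["Step-by-step"]),
        Option("Learning Objectives", "checkbox",
               ["Understand syntax", "Troubleshooting", "Teach others"],
               ["Understand syntax"]),
    ]


def refine_prompt(prompt: str, options: list[Option]) -> str:
    """Append the selected options to the user's prompt as plain text."""
    prefs = [f"- {o.label}: {', '.join(o.selected)}" for o in options if o.selected]
    if not prefs:
        return prompt
    return "\n".join([prompt, "", "Preferences:", *prefs])


def chat_module(prompt: str, options: list[Option],
                llm: Callable[[str], str]) -> str:
    """Stand-in for the Chat Module: send the refined prompt to the model."""
    return llm(refine_prompt(prompt, options))
```

In this sketch, changing a selection and calling `chat_module` again yields a response for the updated preferences, which is one simple way to get the update-on-change behavior described above.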

Adding Promptions to an application

Promptions integrates easily into any conversational chat interface. Developers only need to add a component to display the options and connect it to the AI system. There's no need to store data between sessions, which keeps implementation simple. The Microsoft Foundry Labs repository includes two sample applications, a generic chatbot and an image generator, that demonstrate this design in practice.
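One way to picture an integration that keeps nothing between sessions is a turn handler that works only from what each request carries. The sketch below is an assumption-laden illustration, not code from the sample applications. The request fields (`prompt`, `history`, `selections`), the function `handle_turn`, and the `llm` callable are hypothetical.

```python
import json
from typing import Callable


def handle_turn(request_json: str, llm: Callable[[str], str]) -> str:
    """Process one chat turn using only the data in the request.

    The client sends the prompt, prior messages, and current option
    selections every time, so the server holds no session state.
    """
    req = json.loads(request_json)
    prompt = req["prompt"]
    history = req.get("history", [])
    selections = req.get("selections", {})  # e.g. {"Tone": ["Formal"]}

    parts = [*history, prompt]
    prefs = [
        f"{label}: {', '.join(values)}"
        for label, values in selections.items()
        if values
    ]
    if prefs:
        parts.append("Preferences: " + "; ".join(prefs))

    reply = llm("\n".join(parts))
    return json.dumps({"reply": reply})
```

Because the handler depends only on its inputs, the same request produces the same model prompt, and the interface can resend the request whenever the user changes a control.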

Promptions is well-suited for interfaces where users need to provide context but don’t want to write it all out. Instead of typing lengthy explanations, they can adjust the controls that guide the AI’s response to match their preferences.

Questions for further exploration

Promptions raises important questions for future research. Key usability challenges include clarifying how dynamic options affect AI output and managing the complexity of multiple controls. Other questions involve balancing immediate adjustments with persistent settings and enabling users to share options collaboratively.

On the technical side, questions focus on generating more effective options, validating and customizing dynamic interfaces, gathering relevant context automatically, and supporting the ability to save and share option sets across sessions.

These questions, along with broader considerations of collaboration, ethics, security, and scalability, are guiding our ongoing work on Promptions and related systems.

By making Promptions open source, we hope to help developers create smarter, more responsive AI experiences.

Explore Promptions on Microsoft Foundry Labs