One of the great things about being on the Excel team is the opportunity to meet with a broad set of customers. In talking with Excel users, it’s obvious that significant confusion exists about what exactly “big data” is. Many customers are left on their own to make sense of a cacophony of acronyms, technologies, architectures, business models and vertical scenarios.

It is therefore unsurprising that some folks have come up with wildly different ways to define what “big data” means. We’ve heard from some folks who thought big data was working with two thousand rows of data. And we’ve heard from vendors who claim to have been doing big data for decades and don’t see it as something new. The wide range of interpretations sometimes reminds us of the old parable of the blind men and the elephant, where a group of men touch an elephant to learn what it is. Each man feels a different part, but only one part, such as the tail or the tusk. They then compare notes and learn that they are in complete disagreement.

Defining big data

On the Excel team, we’ve taken pointers from analysts to define big data as data that includes any of the following:

- High volume—Both in terms of data items and dimensionality.

- High velocity—Arriving at a very high rate, usually with an assumption of low latency between data arrival and deriving value.

- High variety—Embraces the ability for data shape and meaning to evolve over time.

And which requires:

- Cost-effective processing—As we mentioned, many vendors claim they’ve been doing big data for decades. Technically this is accurate; however, many of these solutions rely on expensive scale-up machines with custom hardware and SAN storage underneath to get enough horsepower. The most promising aspect of big data is the innovation that lets you trade off some aspects of a solution to build and deploy it at a dramatically lower cost.

- Innovative types of analysis—Doing the same old analysis on more data is generally a good sign you’re doing scale-up and not big data.

- Novel business value—Between this principle and the previous one, if a data set doesn’t really change how you do analysis or what you do with your analytic result, then it’s likely not big data.

At the same time, savvy technologists also realize that sometimes their needs are best met with tried and trusted technologies. When they need to build a mission-critical system that requires ACID transactions, a robust query language and enterprise-grade security, relational databases usually fit the bill quite well, especially as relational vendors advance their offerings to bring some of the benefits of new technologies to their existing customers. This calls for a more mature understanding of both the needs and the technologies in order to find the best fit.

Excel’s role in big data

There are a variety of different technology demands for dealing with big data: storage and infrastructure, capture and processing of data, ad-hoc and exploratory analysis, pre-built vertical solutions, and operational analytics baked into custom applications.

The sweet spot for Excel among the big data scenario categories is exploratory/ad hoc analysis. Here, business analysts want to use their favorite analysis tool against new data stores to get unprecedented richness of insight. They expect the tools to go beyond embracing the “volume, velocity and variety” aspects of big data by also allowing them to ask new types of questions they weren’t able to ask earlier. That includes more predictive and prescriptive experiences and the ability to include more unstructured data (like social feeds) as first-class input into their analytic workflow.

Broadly speaking, there are three patterns of using Excel with external data: importing data into Excel, querying an external source live, and exporting from an application to Excel. Each has its own set of dependencies and use cases, and they can be combined in a single workbook as needed.

When working with big data, there are a number of technologies and techniques that can be applied to make these three patterns successful.

Import data into Excel

Many customers use a connection to bring external data into Excel as a refreshable snapshot. The advantage here is that it creates a self-contained document that can be used for working offline, but refreshed with new data when online. Since the data is contained in Excel, customers can also transform it to reflect their own personal context or analytics needs.

When importing big data into Excel, there are a few key challenges that need to be accounted for:

- Querying big data—Data sources designed for big data, such as SaaS, HDFS and large relational sources, can sometimes require specialized tools. Thankfully, Excel has a solution: Power Query, which is built into Excel 2016 and available separately as a download for earlier versions. Power Query provides a modern set of connectors for Excel customers, including connectors for relational, HDFS and SaaS (Dynamics CRM, Salesforce) sources.

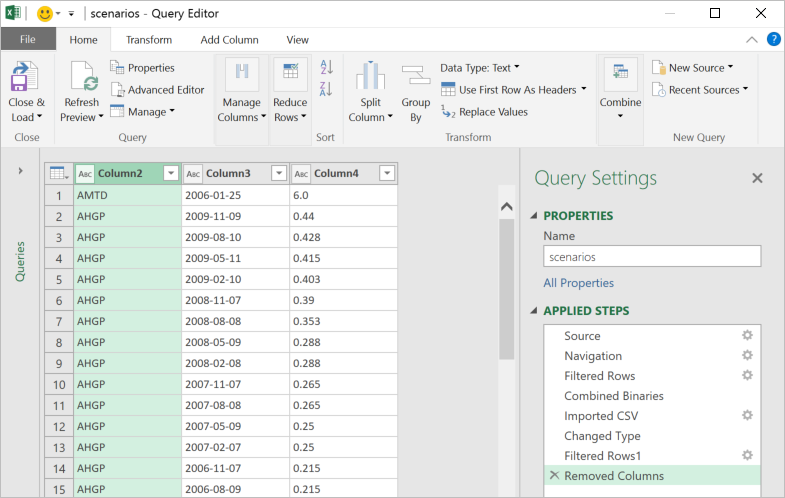

- Transforming data—Big data, like all data, is rarely perfectly clean. Power Query provides the ability to create a coherent, repeatable and auditable set of data transformation steps. By combining simple actions into a series of applied steps, you can create a reliably clean and transformed set of data to work with.

- Handling large data sources—Power Query is designed to only pull down the “head” of the data set to give you a live preview of the data that is fast and fluid, without requiring the entire set to be loaded into memory. Then you can work with the queries, filter down to just the subset of data you wish to work with, and import that.

- Handling semi-structured data—A frequent need we see, especially in big data cases, is reading data that’s not as cleanly structured as traditional relational database data. It may be spread out across several files in a folder or very hierarchical in nature. Power Query provides elegant ways of treating both of these cases. All files in a folder can be processed as a unit in Power Query so you can write powerful transforms that work on groups (even filtered groups!) of files in a folder. In addition, several data stores as well as SaaS offerings embrace the JSON data format as a way of dealing with complex, nested and hierarchical data. Power Query has built-in support for extracting structure out of JSON-formatted data, making it much easier to take advantage of this complex data within Excel.

- Handling large volumes of data in Excel—Since Excel 2013, the “Data Model” feature in Excel has supported larger volumes of data than the worksheet limit of 1,048,576 rows. Data Model also treats tables, columns and relationships as first-class objects, and it delivers pre-built support for commonly used business scenarios like year-over-year growth or working with organizational hierarchies. For several customers, the headroom Data Model provides is sufficient for dealing with their own large data volumes. In addition to the product documentation, several of our MVPs have provided great content on Power Pivot and the Data Model. Here are a couple of articles from Rob Collie and Chandoo.
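
To make the ideas in the list above a little more concrete, here is a minimal sketch in Python. It is only an analogy: Power Query expresses its steps in its own formula language, and the names used here (`preview`, `flatten`, `APPLIED_STEPS`, `run`) and the sample records are hypothetical, made up purely for illustration. The sketch shows three things: an ordered, named list of transformation steps that can be re-run and audited, nested JSON records flattened into rows, and a preview that reads only the head of a lazy source.

```python
import json
from itertools import islice


def preview(rows, n=5):
    """Return only the first n rows of a (possibly huge) lazy row source."""
    return list(islice(rows, n))


def flatten(record, prefix=""):
    """Turn nested JSON objects into one flat row with dotted column names."""
    flat = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=name + "."))
        else:
            flat[name] = value
    return flat


# Hypothetical "applied steps": an ordered, named list that is easy to audit.
# Every step is lazy, so nothing is read until rows are actually requested.
APPLIED_STEPS = [
    ("Parsed JSON", lambda lines: (json.loads(line) for line in lines)),
    ("Expanded records", lambda recs: (flatten(r) for r in recs)),
    ("Filtered rows", lambda rows: (r for r in rows if r["order.total"] >= 100)),
]


def run(source, steps=APPLIED_STEPS):
    """Apply each step, in order, to the output of the previous one."""
    data = source
    for _name, step in steps:
        data = step(data)
    return data


# Made-up sample input: one JSON document per line.
SAMPLE_LINES = [
    '{"customer": "A", "order": {"id": 1, "total": 250}}',
    '{"customer": "B", "order": {"id": 2, "total": 40}}',
    '{"customer": "C", "order": {"id": 3, "total": 120}}',
]

first_rows = preview(run(iter(SAMPLE_LINES)), n=2)
print(first_rows)
```

Because every step is a generator, asking for a two-row preview reads only as many input lines as it takes to produce two matching rows, which is the same spirit as previewing the head of a large source before deciding what to import.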

Live query of an external source

Sometimes the sheer volume of data or the pattern of the analysis means that importing all of the source data into Excel is prohibitive or problematic (e.g., by creating data disclosure concerns).

Customers using OLAP PivotTables are already intimately familiar with the power of combining lightweight client side experiences in PivotTables and PivotCharts with scalable external engines. Interactively querying external sources with a business-friendly metadata layer in PivotTables allows users to explore and find useful aggregations and slices of data easily and rapidly.
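
As a rough illustration of the pattern described above, the Python sketch below sends a small aggregate query to an engine and gets back only the summarized slice, rather than importing the raw rows. It makes several assumptions: an in-memory SQLite database stands in for the scalable external engine, and the `sales` table, its columns, the sample figures and the `slice_totals` helper are all hypothetical. It is not how PivotTables talk to an OLAP source; it only shows the division of labor between a lightweight client and an engine that does the heavy lifting.

```python
import sqlite3

# Assumption: an in-memory SQLite database stands in for an external engine.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, year INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("East", 2015, 100.0),
        ("East", 2015, 50.0),
        ("West", 2015, 75.0),
        ("East", 2016, 20.0),
        ("West", 2016, 30.0),
    ],
)


def slice_totals(connection, year):
    """Ask the engine for one aggregated slice instead of the raw rows."""
    query = (
        "SELECT region, SUM(amount) FROM sales "
        "WHERE year = ? GROUP BY region ORDER BY region"
    )
    return connection.execute(query, (year,)).fetchall()


totals_2015 = slice_totals(conn, 2015)
print(totals_2015)
```

Only one row per region crosses the wire for each question the analyst asks, no matter how many detail rows sit in the source.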

One very simple way to create such an interactively queryable external source with a large volume of data is to “upsize” a data model into a standalone SQL Server Analysis Services database. Once a user has created a data model, turning it into a SQL Server Analysis Services cube is relatively straightforward for a BI professional. The result is a centrally managed and controlled asset that can provide sophisticated security and data partitioning support.

As new technologies become available, look for more connectors that provide this level of interactivity with those external sources.

Export from an application to Excel

Because users are so familiar with Excel, “Export to Excel” is a commonly requested feature in various applications. It typically creates a static export of a subset of the data in the source application, usually for reporting purposes and free from the underlying business rules. As more applications are hosted in the browser, we’re adding new APIs that extend integration options with Excel Online.

Summary

We hope we were able to give you a set of patterns to help make discussions on big data more productive within your own teams. We’re constantly looking for better ways to help our customers make sense of the technology landscape and welcome your feedback!

—Ashvini Sharma and Charlie Ellis, program managers for the Excel team