News & features

Research Focus: Week of November 7, 2022

Welcome to Research Focus, a new series …

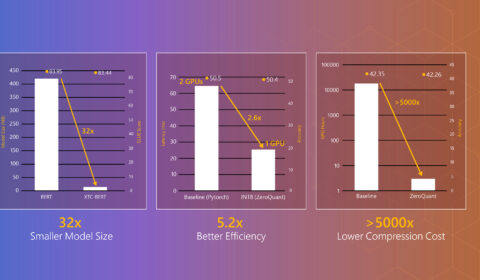

DeepSpeed Compression: A composable library for extreme compression and zero-cost quantization

| DeepSpeed Team and Andrey Proskurin

Large-scale models are revolutionizing d…

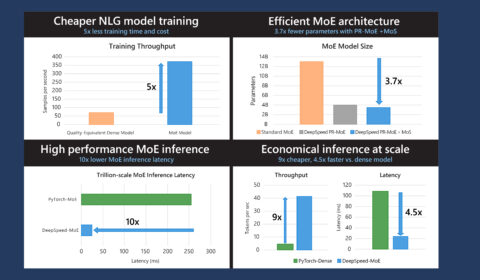

DeepSpeed: Advancing MoE inference and training to power next-generation AI scale

| DeepSpeed Team and Andrey Proskurin

In the last three years, the largest tra…

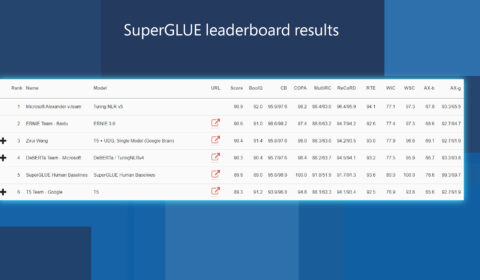

Efficiently and effectively scaling up language model pretraining for best language representation model on GLUE and SuperGLUE

| Jianfeng Gao and Saurabh Tiwary

As part of Microsoft AI at Scale (opens …

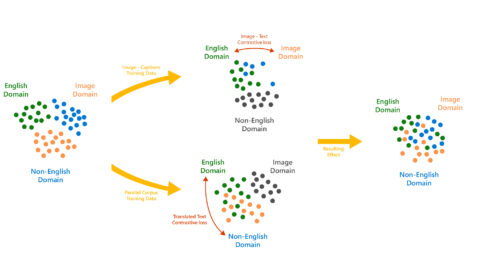

Turing Bletchley: A Universal Image Language Representation model by Microsoft

| Saurabh Tiwary

Today, the Microsoft Turing team (opens …

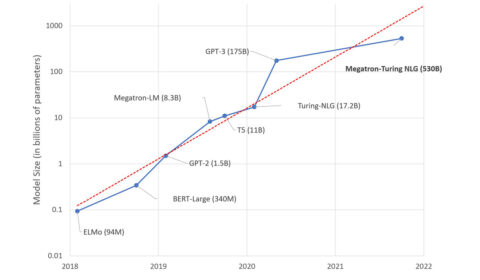

Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, the World’s Largest and Most Powerful Generative Language Model

| Ali Alvi and Paresh Kharya

We are excited to introduce the DeepSpee…

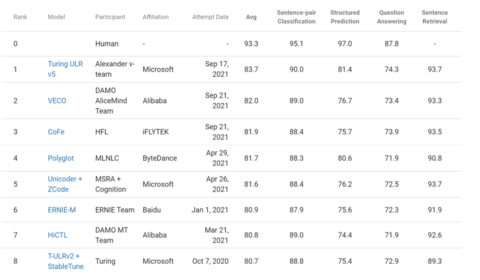

Microsoft Turing Universal Language Representation model, T-ULRv5, tops XTREME leaderboard and trains 100x faster

| Saurabh Tiwary and Lidong Zhou

Today, we are excited to announce that w…

DeepSpeed powers 8x larger MoE model training with high performance

| DeepSpeed Team and Z-code Team

Today, we are proud to announce DeepSpee…

Make Every feature Binary: A 135B parameter sparse neural network for massively improved search relevance

| Junyan Chen, Frédéric Dubut, Jason (Zengzhong) Li, and Rangan Majumder

Recently, Transformer-based deep learnin…