News & features

Given a language model, can we tell whether it is truly reasoning, or whether its performance stems only from pattern recognition and memorization?

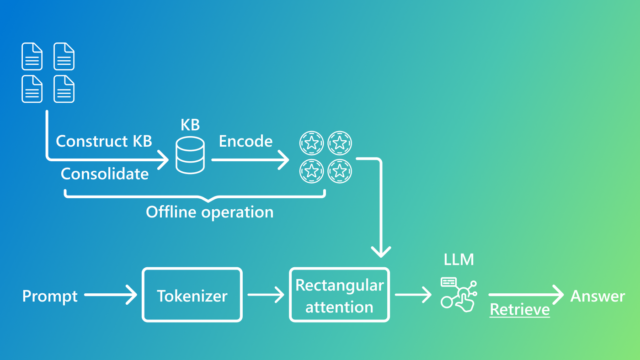

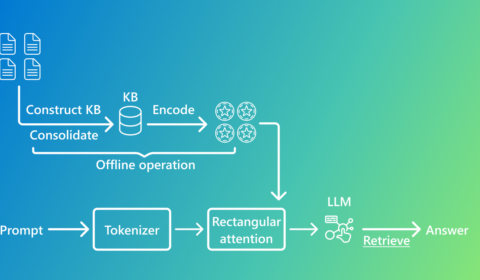

Introducing KBLaM: Bringing plug-and-play external knowledge to LLMs

Taketomo Isazawa, Xi Wang, Liana Mikaelyan, Mathew Salvaris, and James Hensman

Introducing KBLaM, an approach that encodes and stores structured knowledge within an LLM itself. By integrating knowledge without retraining, it offers a scalable alternative to traditional methods.

News | Windows Experience Blog

Phi Silica, small but mighty on-device SLM

Today we share how the Applied Sciences team used a multidisciplinary approach to achieve a breakthrough in power efficiency, inference speed, and memory efficiency for a state-of-the-art small language model (SLM), Phi Silica.