Autonomous Soaring: an Open-World Challenge for AI

Techniques for automatic decision making under uncertainty have been making great strides in their ability to learn complex policies from streams of observations. However, this progress is happening mostly in — and has a bias towards — settings with abundant data or readily available high-fidelity simulators, such as games. Learning algorithms in these environments enjoy luxuries unavailable to AI agents in the open world, including resettable training episodes, many attempts at completing a task, and negligible costs of making mistakes.

Enabling fixed-wing small uninhabited aerial vehicles (sUAVs) to travel hundreds of miles without using a motor, by taking advantage of regions of rising air the way some bird species and sailplane pilots do, challenges modern reinforcement learning and related approaches at all their weak spots. It requires building an AI agent that, given limited onboard computational resources, can plan far in advance for a wide variety of highly uncertain atmospheric conditions that existing simulators model only crudely, deal with these conditions’ non-stationarity, and learn literally on the fly, with mistakes potentially resulting in drone loss.

A frigatebird and one of its sUAV counterparts from our fleet, an F3J Shadow.

Frigatebird photo credit: Benjamint444, Wikimedia Commons. Licensed under GFDL 1.2.

Project Goals

We aim to push the state of the art in AI decision making under uncertainty by enabling sailplane (a.k.a. “glider”) sUAVs to do what the most adept soarers, frigatebirds, can: autonomously fly long distances using energy extracted from the atmosphere. The problems we are interested in solving with AI include:

- Thermal, ridge, and wave soaring. Air can move upwards, carrying a sailplane with it, for a number of reasons. It may be heated up by the Earth’s surface beneath it, giving rise to thermals. It may be pushed up by the wind blowing against an obstacle such as a cliff, creating ridge soaring opportunities. Or, its flow over a mountain top can create standing waves that can take a sUAV as high as the stratosphere. In all cases, the sUAV’s AI needs to identify the rising air pattern and exploit it immediately, while ensuring that the sUAV remains at a safe distance from ground structures and within a safe operating envelope.

- Long-range motorless navigation with atmospheric energy harvesting. Navigating from A to B for a sailplane sUAV, which is at best equipped with a motor that can run for a few minutes, is very much unlike path-finding for aircraft with stable active propulsion. Even when equipped with a soaring capability, the sUAV’s AI needs to route the aircraft through areas where opportunities for soaring exist in the first place. Their locations are never known with certainty, and the AI must constantly update its belief about them.

- Dynamic soaring. Frigatebirds spend much of their time flying over seas only meters from wave crests while hardly producing a wing flap. They extract energy from a wind gradient, an activity that requires immaculate situational awareness and control. Presently, it is beyond the capabilities of robotic flying machines.

A Radian Pro sUAV (left) and a hawk sharing a thermal.

- Incorporating visual cues into decision making. Although our sUAVs have sensors to detect a rising air mass once they are in it, they can only guess where to find it. Visual cues such as circling birds or certain cloud types can greatly help pinpoint the location of a thermal. Incorporating them into path planning and balancing energy demands of onboard video processing against its benefits present interesting research problems.
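The belief updating mentioned in the navigation item, and the use of visual cues as evidence, can be illustrated with a single application of Bayes' rule. This is a minimal sketch, not the project's method: the function name `update_lift_belief` and the detection rates are hypothetical, and it treats one map cell and one binary cue (for example, circling birds) in isolation.

```python
def update_lift_belief(prior, cue_seen, hit_rate, false_alarm_rate):
    """Posterior probability that a map cell contains a thermal.

    prior            -- P(thermal) before the observation
    cue_seen         -- True if the visual cue was observed over the cell
    hit_rate         -- P(cue | thermal), an assumed detector property
    false_alarm_rate -- P(cue | no thermal), an assumed detector property
    """
    for name, value in (("prior", prior), ("hit_rate", hit_rate),
                        ("false_alarm_rate", false_alarm_rate)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be a probability in [0, 1]")

    if cue_seen:
        like_thermal, like_empty = hit_rate, false_alarm_rate
    else:
        like_thermal, like_empty = 1.0 - hit_rate, 1.0 - false_alarm_rate

    evidence = prior * like_thermal + (1.0 - prior) * like_empty
    if evidence == 0.0:
        # The observation is impossible under both hypotheses; keep the prior.
        return prior
    return prior * like_thermal / evidence


# Hypothetical numbers: a 10% prior, with the cue present over 60% of
# thermals and over 5% of thermal-free cells.
after_cue = update_lift_belief(0.10, True, 0.60, 0.05)      # about 0.57
after_no_cue = update_lift_belief(0.10, False, 0.60, 0.05)  # about 0.045
```

A route planner could apply such an update to every cell it observes and prefer paths through cells with a high posterior. How to trade that preference against the energy cost of processing video is the open question raised above.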

Methods and First Steps

Building an AI for soaring differs from designing controllers for other drones and ground vehicles in a fundamental way: transition dynamics crucial for continuing flight are nonstationary and a priori unknown in many operating regions. Indeed, a sailplane sUAV is usually very uncertain where to find areas of rising air, and even when it flies through a thermal, it initially doesn’t know how strong an updraft the thermal provides, where this updraft begins and ends, and how turbulent it is. Other kinds of autonomous vehicles also occasionally face situations with uncertain or unexpected dynamics (imagine a car driving into an oil slick). However, for them these situations are usually undesirable, and they try to escape them using reactive controllers. In contrast, AI for soaring aims to exploit these uncertain dynamics to extend flight time, and needs to learn them and plan for them deliberatively.

Following this intuition, in the first stage of this project we focused on approaches for using thermals autonomously to gain altitude.

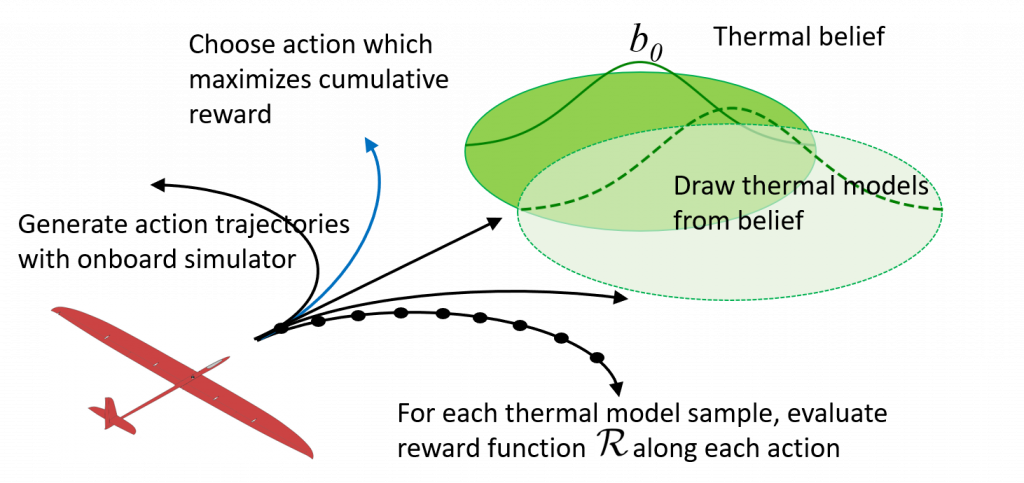

POMDSoar illustration

- In the RSS-2018 paper, we modeled thermalling as a Bayesian reinforcement learning problem and proposed a thermalling algorithm, POMDSoar, that deliberatively plans thermal exploration and exploitation. In live experiments in adverse thermalling conditions, POMDSoar outperformed another strong thermalling controller, ArduSoar, which does not do explicit exploration.

- In the IROS-2018 paper, we studied ArduSoar in more detail to better understand its strengths and limitations. We found that it performs very well given its approach: it plans based on the current most likely dynamics model and regularly re-estimates this model, but does not take deliberate steps to gather information for it.
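To make the contrast between the two planning styles concrete, the sketch below keeps a discrete Bayesian belief over a thermal's center along one axis. It is not POMDSoar or ArduSoar code, and it shows estimation only, with no planning or reinforcement learning. It assumes a Gaussian-shaped updraft with known peak strength and radius and Gaussian sensor noise, and every name and number in it is hypothetical. The most likely center is what a replanning controller of the kind described above would act on, while the spread of the belief is the uncertainty that explicit exploration would try to reduce.

```python
import math


def updraft(x, center, strength=3.0, radius=30.0):
    """Assumed Gaussian updraft profile: vertical air speed (m/s) at x (m)."""
    return strength * math.exp(-((x - center) / radius) ** 2)


def update_belief(belief, centers, x, measured_lift, noise_std=0.5):
    """One Bayes step: reweight each candidate center by the measurement likelihood."""
    weights = []
    for p, c in zip(belief, centers):
        err = measured_lift - updraft(x, c)
        weights.append(p * math.exp(-0.5 * (err / noise_std) ** 2))
    total = sum(weights)
    if total == 0.0:
        # Measurement is inconsistent with every candidate; keep the old belief.
        return list(belief)
    return [w / total for w in weights]


def most_likely_center(belief, centers):
    """Candidate center with the highest posterior probability."""
    return max(zip(belief, centers))[1]


def belief_std(belief, centers):
    """Standard deviation of the belief, a simple measure of remaining uncertainty."""
    mean = sum(p * c for p, c in zip(belief, centers))
    var = sum(p * (c - mean) ** 2 for p, c in zip(belief, centers))
    return math.sqrt(var)


# Candidate centers every 10 m, uniform prior, and a hypothetical true center.
centers = [float(c) for c in range(-100, 101, 10)]
prior = [1.0 / len(centers)] * len(centers)
true_center = 20.0

# Noise-free measurements at four positions keep the example deterministic.
belief = prior
for x in (-40.0, 0.0, 40.0, 10.0):
    belief = update_belief(belief, centers, x, updraft(x, true_center))

print(most_likely_center(belief, centers), belief_std(belief, centers))
```

In this toy setting a single measurement cannot tell a center from its mirror image about the measurement position, so where the aircraft samples next determines how quickly the belief sharpens. That dependence is the kind of information-gathering value that a controller planning only on the most likely model leaves unused.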

3D replay of a simultaneous live test flight of two identical Radian Pros running ArduSoar and POMDSoar. Zoom into the scene and rotate it with the mouse to get different views. The Radians flew the same default waypoint sequence, repeatedly climbing to a specified altitude using motors, turning off the motors, and gliding down. Whenever they encountered a thermal in a glide, they would interrupt waypoint following and try to soar. Thus, their thermalling ability extended the sUAVs’ flight duration. Replays of many more of our test flights are viewable online.
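The flight pattern described above can be summarized as a small state machine. The sketch below is a hypothetical illustration rather than the ArduPlane implementation: the mode names, the altitude thresholds, the rule that restarts the motor at a glide floor, and the rule for leaving a thermal are all assumptions.

```python
from enum import Enum


class Mode(Enum):
    MOTOR_CLIMB = "motor_climb"  # motor on, climbing to the target altitude
    GLIDE = "glide"              # motor off, following waypoints
    THERMAL = "thermal"          # motor off, waypoint following interrupted


def next_mode(mode, altitude_m, air_rising,
              climb_top_m=300.0, glide_floor_m=100.0):
    """Return the next flight mode.

    air_rising stands in for whatever onboard estimate signals a usable
    updraft; the altitude thresholds are placeholders, not tuned values.
    """
    if mode is Mode.MOTOR_CLIMB:
        # Keep the motor running until the target altitude is reached.
        return Mode.GLIDE if altitude_m >= climb_top_m else Mode.MOTOR_CLIMB
    if altitude_m <= glide_floor_m:
        # Assumed safety rule: too low to keep gliding or soaring.
        return Mode.MOTOR_CLIMB
    # From GLIDE or THERMAL: soar while the air is rising, otherwise glide.
    return Mode.THERMAL if air_rising else Mode.GLIDE


# Hypothetical trace of (altitude in meters, rising air detected) samples.
mode = Mode.MOTOR_CLIMB
trace = []
for altitude_m, air_rising in [(150.0, False), (300.0, False), (260.0, True),
                               (320.0, False), (100.0, False)]:
    mode = next_mode(mode, altitude_m, air_rising)
    trace.append(mode)
```

Every step spent in the thermal mode without losing altitude postpones the next motor climb, which is how thermalling ability translates into longer flights in these tests.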


Research literature describes special-purpose controllers partly based on human intuitions, such as ArduSoar, for several aspects of soaring. Despite their lack of explicit information gathering, some of them prove challenging for more general algorithms to outperform. Our current work focuses on (a) theoretically understanding when these pure control/replanning-based approaches are near-optimal, (b) exploring reinforcement and imitation learning-based approaches that relax existing baselines’ assumptions, and (c) researching long-range navigation algorithms that produce robust routing policies in spite of high uncertainty about the locations of lift sources.

Equipment

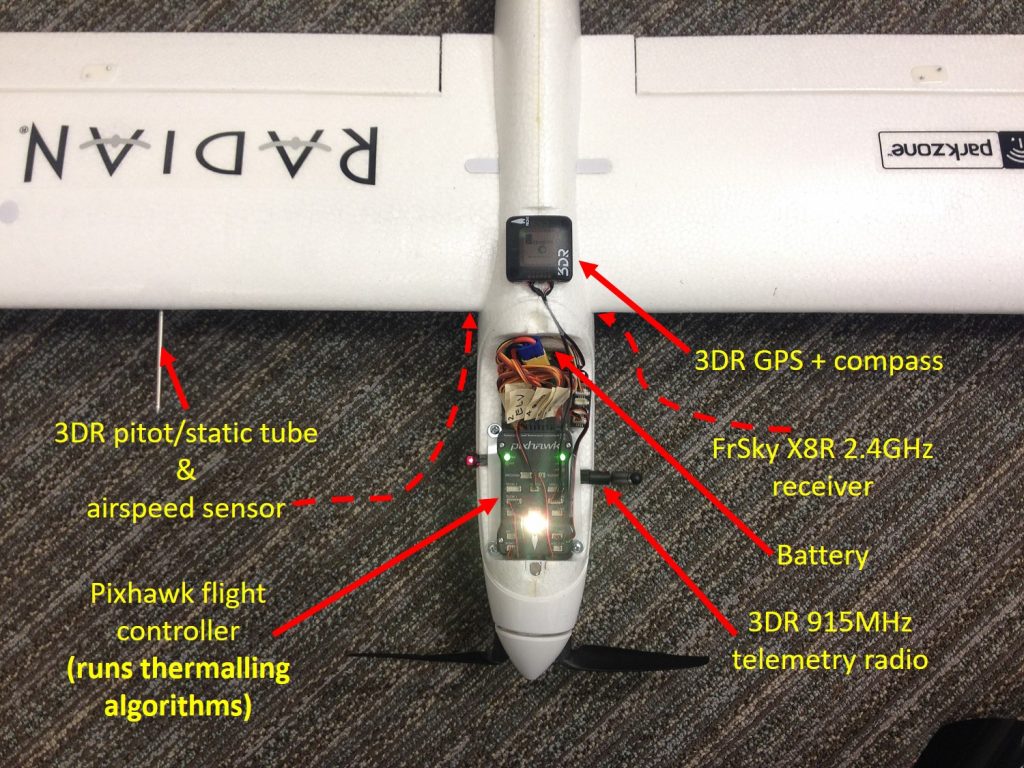

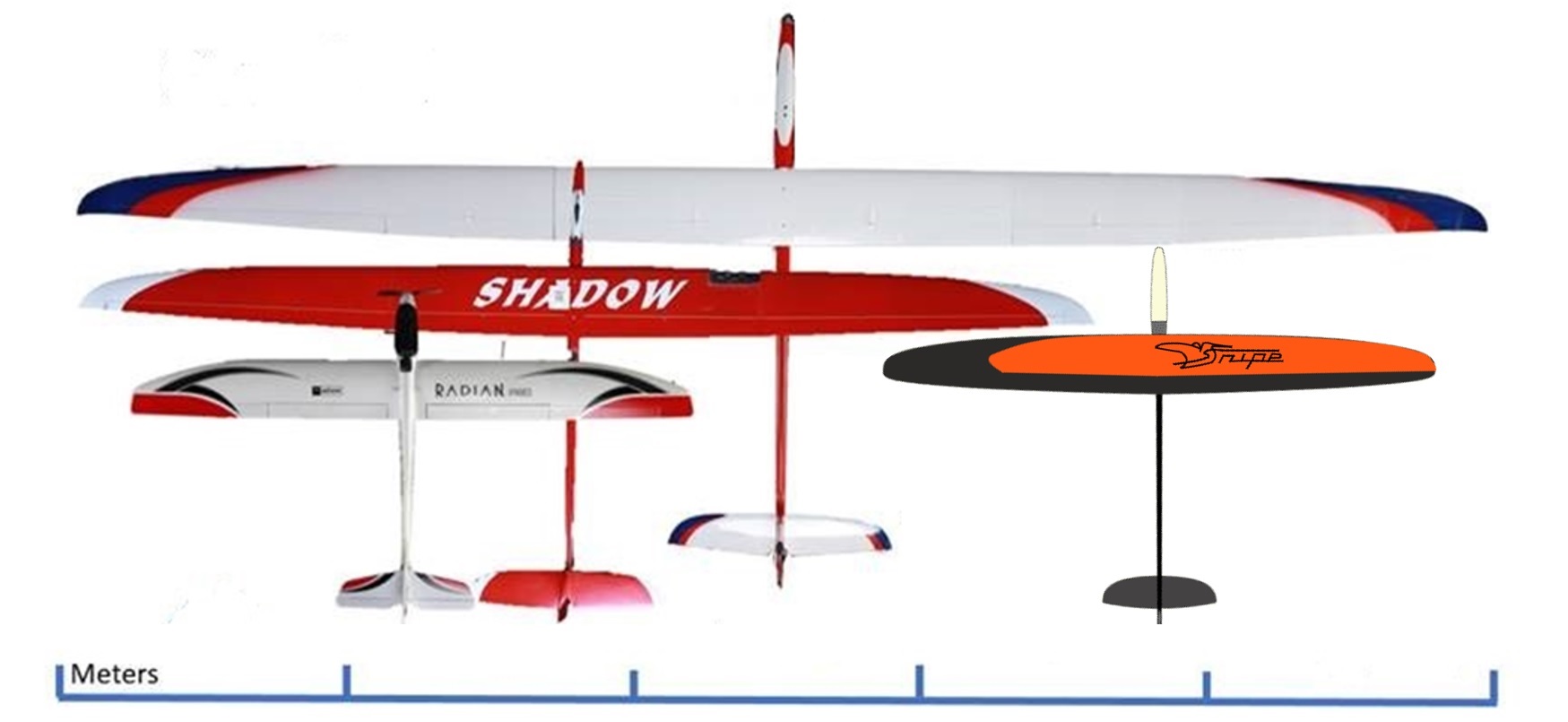

Our sUAV fleet currently consists of one Thermik XXXL (5 m wingspan), one F3J Shadow (3.5 m wingspan), several Radian Pros (2 m wingspan), and one Snipe-2 (2.15 m wingspan). Onboard equipment varies slightly depending on the airframe type, but always includes a GPS, compass, telemetry radio, RC receiver, pitot/static sensor, barometer, IMU, and a computer for fully autonomous flight.

Equipment onboard a Radian Pro sUAV.

Our fleet: Thermik XXXL, F3J Shadow, a few Radian Pros, and Snipe-2.

Code, Parameters, Data

The project’s code is on GitHub:

Currently, this repository contains an implementation of POMDSoar, the POMDP-based thermalling controller from our RSS-2018 paper. We have implemented POMDSoar in a fork of ArduPlane, a flavor of the ArduPilot open-source drone autopilot suite for fixed-wing sUAVs. Please refer to the README.md for build instructions. The parameter files for Radian Pros that we have been using for experiments are in the DATA subdirectory.

We also intend to contribute implementations of existing soaring controllers such as ArduSoar (see the IROS-2018 paper), which serve as benchmarks in our experiments, to ArduPilot directly.

3D replays of GPS traces from many of our live test flights are available online.


People

Andrey Kolobov

Principal Research Manager

Iain Guilliard

PhD Candidate/Former Intern

CSIRO, Australia

Rick Rogahn

Principal Software Engineering Lead