By Rishabh Singh, Jacob Devlin, Abdelrahman Mohamed, and Pushmeet Kohli, Microsoft Research

Despite the many advances in computing over the past decades, the actual process of writing computer software has not fundamentally changed — a programmer must manually code the exact algorithmic logic of a program in a step-by-step manner using a specialized programming language. Although programming languages have become much more user-friendly over the years, learning how to program is still a major endeavor that most computer users have not undertaken.

In a recent paper, we report our latest work in deep learning for program synthesis, where deep neural networks learn how to generate computer programs based on a user’s intent. The user simply provides a few input/output (I/O) examples to specify the desired program behavior, and the system uses these to generate a corresponding program.

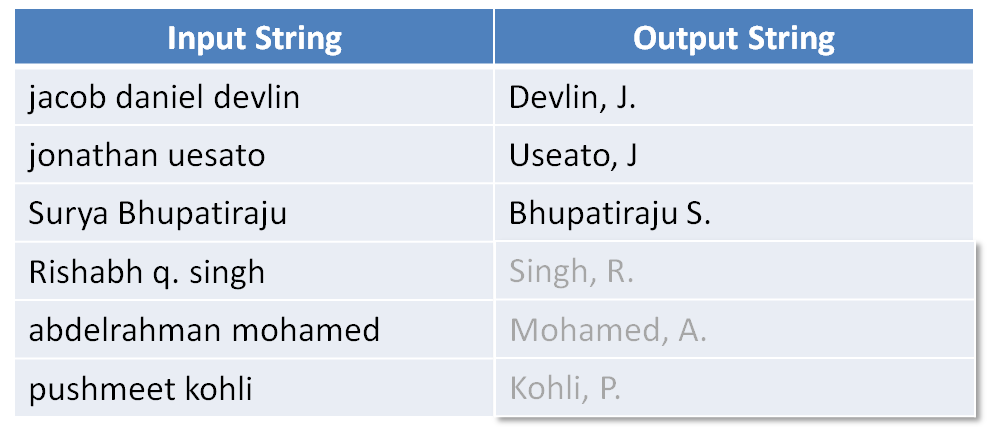

For example, imagine that a user has a list of names that she wishes to format in a specific way, as shown below. She provides a few input-output examples, and the system auto-fills the remaining outputs (shown in light gray). In cases where users have hundreds or thousands of input strings, this can be a major time saver.

The system performs this task by generating a program in a domain-specific language (DSL). The user does not have to understand the details of the DSL, and in fact she generally does not see the program at all. In our DSL, the correct program corresponding to the above example is:

    Concat(
        ToCase(
            GetToken(
                input,
                Type=Word,
                Index=-1),
            Type=Proper),
        Const(", "),
        ToCase(
            SubString(
                GetToken(
                    input,
                    Type=Word,
                    Index=1),
                Start=0,
                End=1),
            Type=Proper),
        Const("."))
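
To make the program above concrete, here is a minimal Python sketch of how its four operators might be evaluated. The operator semantics are assumptions inferred from the listing rather than the actual DSL definition: `Index=1` is taken to mean the first word, `Index=-1` the last word, and `Start=0, End=1` the first character. The helper names (`get_token`, `substring`, `to_proper`, `run_program`) and the sample input are hypothetical.

```python
import re


def get_token(text, index):
    """Return a word from text. Assumed: positive indices are 1-based,
    negative indices count from the end (-1 is the last word)."""
    words = re.findall(r"[A-Za-z]+", text)
    return words[index - 1] if index > 0 else words[index]


def substring(text, start, end):
    """Assumed: 0-based start, exclusive end."""
    return text[start:end]


def to_proper(text):
    """Proper case: first character upper, the rest lower."""
    return text[:1].upper() + text[1:].lower()


def run_program(inp):
    """Hand-translated equivalent of the Concat(...) program shown above."""
    return "".join([
        to_proper(get_token(inp, -1)),                   # last word, proper case
        ", ",                                            # Const(", ")
        to_proper(substring(get_token(inp, 1), 0, 1)),   # initial of first word
        ".",                                             # Const(".")
    ])


print(run_program("jacob devlin"))  # Devlin, J.
```

Under these assumptions, the same program applies unchanged to every remaining input row, which is what lets the system auto-fill the outputs.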

There are two key challenges in program synthesis. First, there are trillions of possible programs in our expressive DSL, and the correct program has likely never been seen before by the system. Second, because the I/O examples are hand-generated by a human, they often contain noise (e.g., typos), as in the example above (notice "Useato" instead of "Uesato" in the second example output).

Previous approaches to solving this problem, most notably the FlashFill system in Microsoft Excel, have relied on hand-crafted rules and heuristics to guide the search for the desired program. However, this approach makes it very difficult to extend the capabilities of the DSL, requires years of manual effort, and is also extremely sensitive to any noise in the I/O examples.
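
The sensitivity to noise can be illustrated with a toy check. The sketch below is not FlashFill's algorithm; it only assumes that a search-based synthesizer accepts a candidate program when it reproduces every example exactly. The example rows are hypothetical, apart from the "Useato" typo mentioned above, and `format_name` stands in for the intended program.

```python
def format_name(full_name):
    """Stand-in for the intended program: 'first last' -> 'Last, F.'"""
    words = full_name.split()
    return f"{words[-1].capitalize()}, {words[0][:1].upper()}."


# Hypothetical user-provided examples; the second output contains a typo.
examples = [
    ("jacob devlin", "Devlin, J."),
    ("jonathan uesato", "Useato, J."),  # noise: should be "Uesato, J."
    ("rishabh singh", "Singh, R."),
]


def is_consistent(program, io_examples):
    """Strict criterion: the program must reproduce every output exactly."""
    return all(program(inp) == out for inp, out in io_examples)


def match_fraction(program, io_examples):
    """Softer measure: the share of examples the program reproduces."""
    hits = sum(program(inp) == out for inp, out in io_examples)
    return hits / len(io_examples)


print(is_consistent(format_name, examples))   # False
print(match_fraction(format_name, examples))  # 2 of 3 examples match
```

A single typo is enough for the strict criterion to reject the intended program, even though it agrees with two of the three examples.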

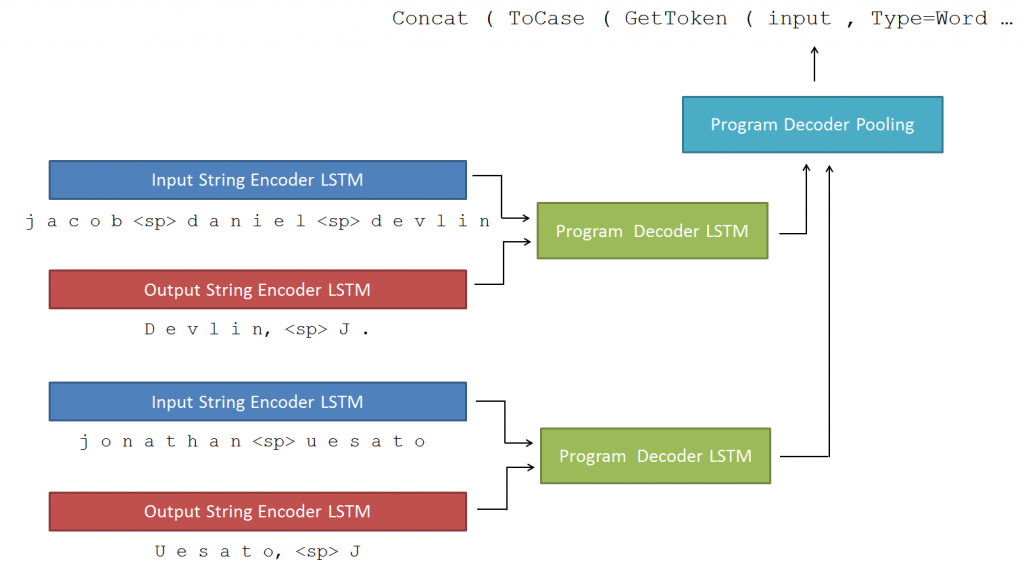

Our system, called RobustFill, leverages recent advances in deep learning to take a data-driven approach to program synthesis without the need for any hand-crafted rules. Instead, it uses an attentional sequence-to-sequence neural network, pioneered for use in language translation, to generate the program based on the I/O examples. A sketch of our network is shown in the accompanying figure, and the details are provided in the paper.
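
For readers unfamiliar with attention, the following is a generic sketch of a single dot-product attention step in plain Python. It is not the RobustFill architecture, whose details are in the paper; the function names and the tiny vectors are illustrative assumptions. The idea is that, at each decoding step, the decoder scores every encoded input position against its current state and takes a weighted average of those positions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, encoder_states):
    """One dot-product attention step.

    query:          decoder state, a list of floats
    encoder_states: one vector per encoded input position
    Returns (weights, context): weights sum to 1, and context is the
    weighted average of the encoder states.
    """
    scores = [sum(q * h for q, h in zip(query, state))
              for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [sum(w * state[d] for w, state in zip(weights, encoder_states))
               for d in range(dim)]
    return weights, context


weights, context = attend([1.0, 0.0], [[2.0, 0.0], [0.0, 2.0]])
# The first position aligns with the query, so it receives the larger weight.
```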

We trained our system on millions of randomly generated I/O + program pairs, but found that it still performs well on real-world data because it learns the semantics of the DSL. Overall, our system achieved 92 percent accuracy on a set of real-world benchmarks. What’s especially encouraging is that the system maintains its high accuracy even when the I/O examples contain significant noise.
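
As a rough illustration of how randomly generated I/O + program pairs can be produced, the sketch below samples a random program from a tiny toy DSL, samples random input strings, and executes the program to obtain the outputs. The toy DSL, the sampling distributions, and the choice of four examples per program are all assumptions made for illustration, not the procedure used to train RobustFill.

```python
import random
import string


def sample_program(rng):
    """Sample a toy program: a list of 1 to 3 concatenated steps."""
    steps = []
    for _ in range(rng.randint(1, 3)):
        kind = rng.choice(["word", "initial", "const"])
        if kind == "const":
            steps.append(("const", rng.choice([", ", ".", " ", "-"])))
        else:
            steps.append((kind, rng.choice([0, -1])))  # first or last word
    return steps


def run(program, text):
    """Execute a toy program on an input string."""
    words = text.split()
    out = []
    for kind, arg in program:
        if kind == "const":
            out.append(arg)
        elif kind == "word":
            out.append(words[arg].capitalize())
        else:  # "initial": upper-cased first letter of the chosen word
            out.append(words[arg][0].upper())
    return "".join(out)


def sample_input(rng):
    """Sample 2 or 3 random lowercase words separated by spaces."""
    def word():
        length = rng.randint(2, 8)
        return "".join(rng.choice(string.ascii_lowercase) for _ in range(length))
    return " ".join(word() for _ in range(rng.randint(2, 3)))


def make_training_pair(rng, num_examples=4):
    """Return (io_examples, program); the outputs come from running the program."""
    program = sample_program(rng)
    inputs = [sample_input(rng) for _ in range(num_examples)]
    return [(x, run(program, x)) for x in inputs], program


rng = random.Random(0)  # fixed seed so the sample is reproducible
io_examples, program = make_training_pair(rng)
```

Because the outputs are computed by executing the sampled program, every generated pair is correct by construction, so no hand labeling is needed.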

Implications for Programming

The ability to successfully train neural architectures to learn programs in a rich functional language (the FlashFill DSL) not only marks an exciting breakthrough in neural program synthesis but is also a small but noteworthy step toward more general artificial intelligence. It addresses the key challenge of incorporating interpretability and touches on the important topic of connecting distributed representations with symbolic representations of knowledge.

We are now working on extensions of these architectures for learning programs in DSLs with stateful variables and control flow to generate richer program classes. We believe work in this direction will require us to study and tackle the fundamental technical issues involved in program synthesis and induction.

More information can be found in the papers below:

“RobustFill: Neural Program Learning under Noisy I/O” by Jacob Devlin, Jonathan Uesato, Surya Bhupatiraju, Rishabh Singh, Abdelrahman Mohamed, and Pushmeet Kohli, in arXiv:1703.07469

“Neuro-Symbolic Program Synthesis” by Emilio Parisotto, Abdelrahman Mohamed, Rishabh Singh, Lihong Li, Denny Zhou, and Pushmeet Kohli, in 5th International Conference on Learning Representations (ICLR 2017)

Contributing interns: Emilio Parisotto (CMU), Jonathan Uesato (MIT), Surya Bhupatiraju (MIT)