Imagine having to narrow down a video of passing vehicles captured on a traffic camera to red SUVs that are exceeding the speed limit. Such queries over images, videos and text are an increasingly common use case for data analytics platforms.

A query to identify in-a-hurry red SUVs has to execute several machine learning modules on the video: for example, to detect the parts of a video frame that contain vehicles, to classify the vehicles by type (SUV, truck, and so on), to track vehicles across frames, and to estimate the speed of every moving vehicle. Finally, a predicate picks the frames that have red SUVs traveling above the speed limit.

Unfortunately, such queries are slow today because the machine learning modules can be computationally intensive.

Executing the ML modules on every video frame wastes resources, because the vast majority of frames will not contain speeding red SUVs. However, it is not apparent at the outset which frames have a speeding red SUV without first executing the ML modules.

To speed up such queries, we train lightweight classifiers that run on the raw inputs and drop inputs that will not satisfy the query predicate; with these classifiers, the computationally expensive machine learning modules need only execute on a small portion of the input.
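The idea can be sketched in a few lines of Python. This is a minimal illustration, not the implementation from the paper: `query_with_pp`, `run_ml_modules`, the `redness` feature and the threshold of 0.5 are all hypothetical stand-ins chosen for the example.

```python
from typing import Callable, Iterable, Iterator, TypeVar

T = TypeVar("T")


def query_with_pp(
    inputs: Iterable[T],
    pp_score: Callable[[T], float],
    threshold: float,
    expensive_predicate: Callable[[T], bool],
) -> Iterator[T]:
    """Yield inputs that pass the cheap filter and then the real predicate."""
    for item in inputs:
        if pp_score(item) < threshold:
            continue  # dropped early: the expensive modules never see it
        if expensive_predicate(item):
            yield item


# Toy stand-ins (hypothetical): a frame is a dict with a cheap "redness"
# feature and a ground-truth flag that only the expensive pipeline can compute.
frames = [
    {"id": 0, "redness": 0.05, "speeding_red_suv": False},
    {"id": 1, "redness": 0.90, "speeding_red_suv": True},
    {"id": 2, "redness": 0.10, "speeding_red_suv": False},
    {"id": 3, "redness": 0.70, "speeding_red_suv": False},
]
expensive_calls = []


def run_ml_modules(frame: dict) -> bool:
    expensive_calls.append(frame["id"])  # record how often the costly path runs
    return frame["speeding_red_suv"]


matches = list(query_with_pp(frames, lambda f: f["redness"], 0.5, run_ml_modules))
```

In this toy run the expensive path executes on only two of the four frames, and the one matching frame is still returned.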

In a recent publication at SIGMOD 2018, we describe how to learn and use such lightweight classifiers which we call probabilistic predicates – PPs for short.

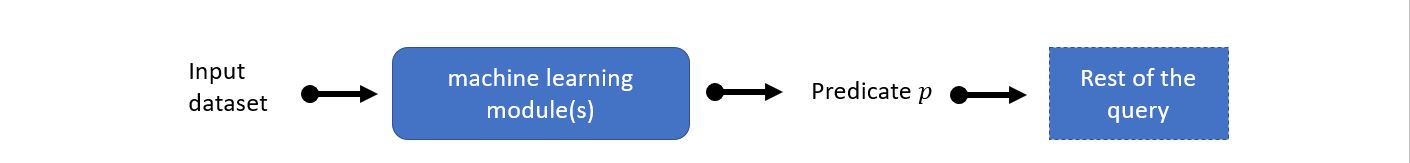

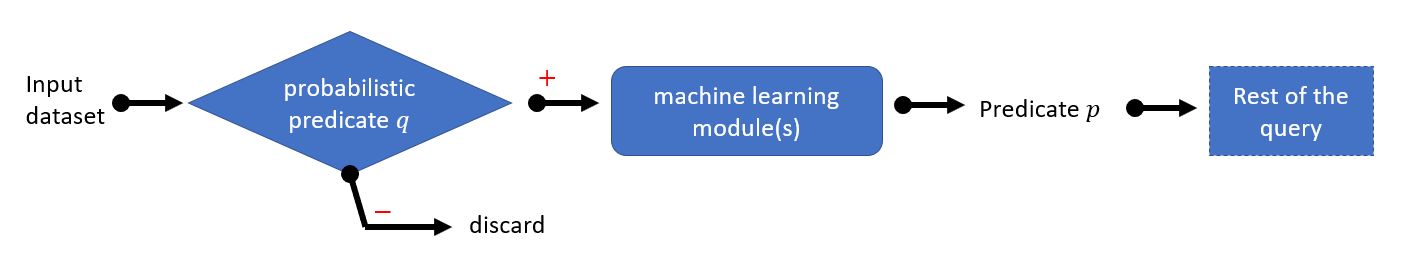

At a high level, this paper matches the pattern shown in Figure 1 below and adds probabilistic predicates as shown in Figure 2.

Figure 1 – Pattern matched in existing queries.

Figure 2 – The query pattern in Figure 1 is replaced with this pattern, where q is any necessary condition of p. Only inputs that match the probabilistic predicate are processed by the rest of the query components.

The paper addresses two key challenges.

First, to be useful, a probabilistic predicate must be much faster than the ML modules that it bypasses. The PP must also drop a large portion of the input while producing few false negatives.

Simultaneously achieving these objectives on different query workloads and ML modules is challenging. We use a diverse collection of machine learning techniques, including support vector machines and shallow neural networks, each of which is appropriate in a different context. Moreover, our PP framework is future-proof in the sense that any classification technique satisfying a simple technical criterion can be used to train a PP (for example, random forests).
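One way to picture the trade-off between dropping input and avoiding false negatives is to tune a score threshold on labeled validation data. The sketch below assumes a PP that emits a numeric score per input and keeps inputs scoring at or above a threshold; `pick_threshold` and the sample data are hypothetical, and the paper's actual training procedure may differ.

```python
import math
from typing import Sequence, Tuple


def pick_threshold(
    scores: Sequence[float], labels: Sequence[bool], target_accuracy: float
) -> Tuple[float, float]:
    """Return (threshold, reduction) for a filter that keeps score >= threshold.

    target_accuracy is the minimum fraction of true positives that must be
    kept; reduction is the fraction of all inputs the filter drops.
    """
    positives = sorted(s for s, y in zip(scores, labels) if y)
    if not positives:
        raise ValueError("need at least one positive validation example")
    # Number of positives we may drop; the epsilon guards against float
    # round-off such as (1 - 0.9) * 10 evaluating to 0.9999999999999998.
    max_dropped = math.floor((1 - target_accuracy) * len(positives) + 1e-9)
    threshold = positives[max_dropped]
    reduction = sum(s < threshold for s in scores) / len(scores)
    return threshold, reduction


# Hypothetical validation set: four positives, six negatives.
val_scores = [0.1, 0.2, 0.3, 0.35, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
val_labels = [False, False, False, True, False, False, False, True, True, True]

strict = pick_threshold(val_scores, val_labels, target_accuracy=1.0)
relaxed = pick_threshold(val_scores, val_labels, target_accuracy=0.75)
```

On this toy data, insisting on no false negatives lets the filter drop 30% of the input, while tolerating one missed positive in four raises that to 70%.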

Next, to generalize to a large family of queries with only a small training budget, we train PPs only for a small number of simple predicates and extend query optimization techniques to construct combinations of PPs that can be used for a much larger space of query predicates. For example, using PPs trained only for column op value predicates where op can be =, <, ≤ and their negations, we can support any query predicate that is a conjunction, disjunction or negation over multiple columns.
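A rough sketch of how PPs for simple predicates could be composed is shown below. It relies on one observation: a filter is safe whenever it tests a necessary condition of the query predicate, so a conjunction can apply every available PP, a disjunction must keep an input if either side keeps it, and a clause with no trained PP simply filters nothing. The classes, the `build_filter` function and the toy PPs are hypothetical; the paper performs this exploration inside a cost-based query optimizer rather than with a fixed rewrite like this one.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple, Union


@dataclass(frozen=True)
class Leaf:
    name: str              # a simple predicate, e.g. "is_suv"
    negated: bool = False


@dataclass(frozen=True)
class Not:
    child: "Pred"


@dataclass(frozen=True)
class And:
    left: "Pred"
    right: "Pred"


@dataclass(frozen=True)
class Or:
    left: "Pred"
    right: "Pred"


Pred = Union[Leaf, Not, And, Or]
Filter = Callable[[dict], bool]          # True means "keep this input"
PPs = Dict[Tuple[str, bool], Filter]     # keyed by (predicate name, negated)


def negate(pred: Pred) -> Pred:
    """Push one negation inward using De Morgan's laws."""
    if isinstance(pred, Leaf):
        return Leaf(pred.name, not pred.negated)
    if isinstance(pred, Not):
        return pred.child
    if isinstance(pred, And):
        return Or(Not(pred.left), Not(pred.right))
    return And(Not(pred.left), Not(pred.right))


def build_filter(pred: Pred, pps: PPs) -> Filter:
    """Combine per-leaf PPs into a filter for a necessary condition of pred."""
    if isinstance(pred, Leaf):
        # No trained PP for this leaf: keep everything (still a safe filter).
        return pps.get((pred.name, pred.negated), lambda x: True)
    if isinstance(pred, Not):
        return build_filter(negate(pred.child), pps)
    left, right = build_filter(pred.left, pps), build_filter(pred.right, pps)
    if isinstance(pred, And):
        return lambda x: left(x) and right(x)
    return lambda x: left(x) or right(x)


# Hypothetical PPs over cheap per-frame scores.
pps: PPs = {
    ("is_red", False): lambda x: x["red_score"] >= 0.3,
    ("is_suv", False): lambda x: x["suv_score"] >= 0.3,
    ("is_suv", True): lambda x: x["suv_score"] <= 0.7,
}

red_not_suv = build_filter(And(Leaf("is_red"), Not(Leaf("is_suv"))), pps)
not_red_and_suv = build_filter(Not(And(Leaf("is_red"), Leaf("is_suv"))), pps)
```

Here `red_not_suv` uses both the "red" PP and the "not SUV" PP. For `not_red_and_suv`, no PP exists for "not red", so the disjunction produced by De Morgan's law cannot safely drop anything and the combined filter keeps every input.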

For an expert in database systems, the key innovation is in extending a cost-based query optimizer to explore many possible necessary conditions of the true query predicate. Moreover, since our probabilistic predicates are tunable to different operating points on the precision-recall curve, the query optimizer extension also chooses the parameters of the individual PPs so that the PP combination meets the desired objective. For example, the PP combination can be tuned to maximize benefit (the ratio of the fraction of input dropped to the cost of the PP combination) while meeting an accuracy threshold.
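The selection step can be illustrated with a brute-force search. The sketch below enumerates candidate plans (which PPs to use and at what threshold), measures each plan's accuracy and reduction on labeled validation rows, and picks the plan with the highest benefit that meets the accuracy threshold. Everything here is an assumption made for illustration: the function names, the per-PP costs, the threshold grid, and the simplification that a plan's cost is the sum of its PPs' costs. A real optimizer would use cost and selectivity estimates rather than exhaustive enumeration.

```python
from itertools import product
from typing import Dict, Iterator, List, Optional, Sequence, Tuple

Step = Tuple[str, float, float]          # (score column, threshold, cost)
Row = Tuple[Dict[str, float], bool]      # (PP scores, true predicate label)


def candidate_plans(costs: Dict[str, float], thresholds: Sequence[float]) -> Iterator[List[Step]]:
    """Yield every non-empty choice of PPs, each with one threshold."""
    options = [[None] + [(col, t, cost) for t in thresholds] for col, cost in costs.items()]
    for combo in product(*options):
        plan = [step for step in combo if step is not None]
        if plan:
            yield plan


def evaluate(plan: List[Step], rows: Sequence[Row]) -> Tuple[float, float, float]:
    """Return (accuracy, reduction, cost) of a conjunction of PP steps."""
    kept = [(s, y) for s, y in rows if all(s[col] >= t for col, t, _ in plan)]
    accuracy = sum(y for _, y in kept) / sum(y for _, y in rows)
    reduction = 1 - len(kept) / len(rows)
    return accuracy, reduction, sum(cost for _, _, cost in plan)


def best_plan(plans, rows: Sequence[Row], min_accuracy: float) -> Tuple[Optional[List[Step]], float]:
    """Maximize benefit = reduction / cost subject to accuracy >= min_accuracy."""
    best, best_benefit = None, 0.0
    for plan in plans:
        accuracy, reduction, cost = evaluate(plan, rows)
        if accuracy >= min_accuracy and reduction / cost > best_benefit:
            best, best_benefit = plan, reduction / cost
    return best, best_benefit


# Hypothetical validation rows for the predicate "red AND SUV".
rows: List[Row] = [
    ({"red": 0.9, "suv": 0.9}, True),
    ({"red": 0.8, "suv": 0.6}, True),
    ({"red": 0.9, "suv": 0.2}, False),
    ({"red": 0.2, "suv": 0.9}, False),
    ({"red": 0.1, "suv": 0.1}, False),
    ({"red": 0.3, "suv": 0.3}, False),
    ({"red": 0.6, "suv": 0.1}, False),
    ({"red": 0.1, "suv": 0.7}, False),
]
costs = {"red": 1.0, "suv": 2.0}         # assumed relative per-input costs
grid = [0.5, 0.85]

exact = best_plan(candidate_plans(costs, grid), rows, min_accuracy=1.0)
lossy = best_plan(candidate_plans(costs, grid), rows, min_accuracy=0.5)
```

On this toy data the cheap "red" PP alone wins in both cases: using both PPs drops more input but costs three times as much, so its benefit is lower. Relaxing the accuracy threshold lets the search pick a more aggressive threshold for the same PP.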

For an expert in machine learning, an interesting aspect is the use of ensembles of lightweight binary classifiers in a Viola-Jones-style model cascade.

Our paper received a best demonstration award at SIGMOD 2018 and is available along with an accompanying demo video. For further information, questions, or comments, you can email us!