Autonomous systems have attracted a lot of attention because they promise to improve efficiency, reduce costs and, most importantly, take on tasks that are too dangerous for humans. However, building a real-world autonomous system that operates safely at scale is very difficult. For example, Carnegie Mellon University's Navigation Laboratory demonstrated the first self-sufficient autonomous cars in the 1980s, and while there has been great progress since, achieving safe and reliable autonomy continues to intrigue the brightest minds.

We are excited to announce that Microsoft Research is teaming up with Carnegie Mellon University to explore and test these ideas and toolchains. Specifically, Microsoft will partner with the Carnegie Mellon team led by Sebastian Scherer and Matt Travers to tackle the DARPA Subterranean Challenge, which requires building robots that can autonomously search tunnels, caves and underground structures. This exacting task requires a host of technologies, including mapping, localization, navigation, detection and planning. Consequently, the collaboration will center on toolchains that enable rapid development of autonomous systems by using simulation, exploiting modular structure and providing robustness through statistical machine learning techniques.

We aim to explore how to enable developers, engineers and researchers to build autonomy across a wide variety of domains without needing to spend additional decades of research and development. We need tools that allow subject matter expertise, specific to the application domain, to be fused with the knowledge we’ve garnered developing various machine learning, AI and robotic systems over the last few decades.

Field robotics is hard because of the effort, expense, and time required to design, build, deploy, test, and validate physical systems. Moreover, recent deep learning and reinforcement learning methods require data at a scale that is impossible to collect in the real world. The ability to run high-fidelity simulations at scale on Azure is an integral part of this process, enabling rapid prototyping, engineering and efficient testing. AI-centric simulations such as AirSim can generate meaningful training data, which greatly helps in tackling the high sample complexity of popular machine learning and reinforcement learning methods.

Autonomous systems need to make a sequence of decisions under many sources of uncertainty, including the environment, other actors, and the system's own perception and dynamics. Consequently, techniques such as imitation learning and reinforcement learning lie at the foundation of building autonomous systems. For example, in a collaboration with Technion, Israel, high-fidelity simulations in AirSim were used to train an autonomous Formula SAE design competition car via imitation learning (see Figure 1). While great success has been achieved in solving arcade games, achieving real-world autonomy is far from trivial. Besides the obvious challenge of requiring a large amount of training data, further enhancements are needed to make ML paradigms such as reinforcement learning and imitation learning accessible to people who are not ML experts.

Figure 1 – Imitation learning for a Formula SAE design competition car. Video provided by the Technion Formula Student team.

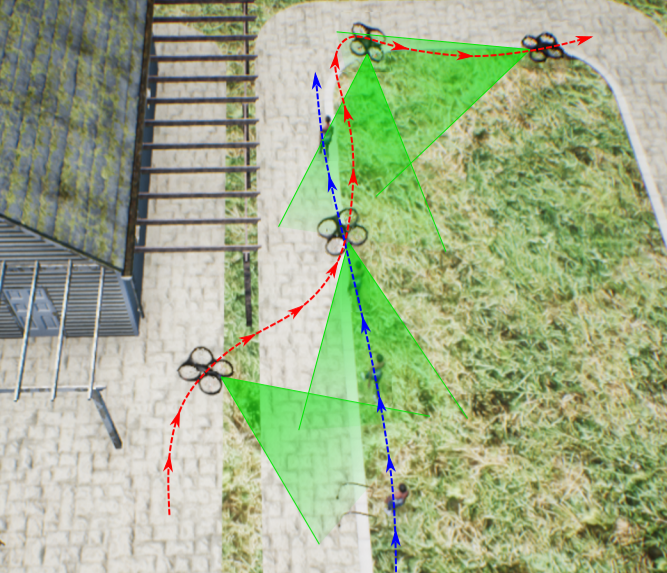

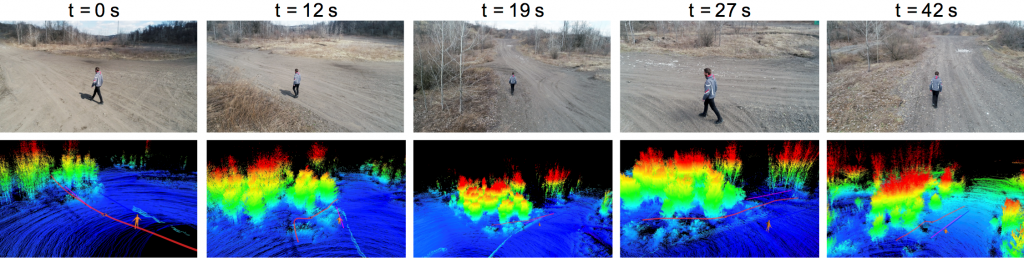
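In its simplest form, imitation learning (behavioral cloning) treats expert driving as supervised data: the policy is fit to map observations to the expert's actions. The sketch below is a toy illustration under stated assumptions, not the Technion team's pipeline. It assumes a one-dimensional observation (a hypothetical lateral offset from the track centerline), a hypothetical simulated expert, and a linear steering policy whose gain is fit by least squares.

```python
import random


def expert_steering(offset: float) -> float:
    """Hypothetical simulated expert: steer back toward the centerline."""
    return -0.8 * offset


def collect_demonstrations(n: int, seed: int = 0) -> list[tuple[float, float]]:
    """Sample (observation, expert action) pairs, as a simulator could at scale."""
    rng = random.Random(seed)
    demos = []
    for _ in range(n):
        offset = rng.uniform(-2.0, 2.0)          # hypothetical lateral offset in metres
        noise = rng.gauss(0.0, 0.01)             # small labelling noise
        demos.append((offset, expert_steering(offset) + noise))
    return demos


def fit_linear_policy(demos: list[tuple[float, float]]) -> float:
    """Behavioral cloning: least-squares gain k for the policy action = k * offset."""
    num = sum(x * y for x, y in demos)
    den = sum(x * x for x, _ in demos)
    return num / den


if __name__ == "__main__":
    k = fit_linear_policy(collect_demonstrations(1000))
    print(f"learned gain k = {k:.3f}")
```

With enough demonstrations the learned gain lands very close to the expert's -0.8. Real systems replace the scalar offset with camera images and the linear policy with a deep network, which is exactly where simulated data at scale becomes essential.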

Reinforcement learning has a distinctive advantage for autonomy tasks that people find very easy to evaluate but extremely hard to define explicitly, such as taking a beautiful photograph or making an aesthetically pleasing movie. While it is easy to recognize a good video when we watch one, it is hard to put into words exactly what made it so appealing. Our collaborators at Carnegie Mellon University are using deep RL with AirSim to train an AI movie director, directly from human supervision, that automatically selects the types of shots most appropriate for each scene context depending on the surrounding obstacles and the actor's motion. The deep RL model learns to encode human aesthetic preferences that previously were only part of the operator's intuition. Such systems have been successfully deployed in field experiments filming action scenes with runners and bikers, as seen in Figures 2 and 3.

Figure 2 – Time lapse of the drone trajectory while filming a moving actor in the AirSim photo-realistic environment. Since the left-hand side is occupied by the house, the drone autonomously switches shots using the trained deep RL model to keep the final footage smooth and aesthetically pleasing for the viewer.

Figure 3 – Field test in the real world using the online artistic shot selection module. The UAV trajectory is shown in red, the actor motion forecast in blue, and the desired shot reference in pink. The UAV initially does a left side shot at t = 0s in the open field, but as a line of trees appears, it switches to a back shot at t = 12s. When an opening appears in the tree line, the UAV selects a left side shot again at t = 27s, and when the clearing ends, the module selects a back shot again.
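To make the idea of learning shot preferences from human feedback concrete, here is a deliberately simplified sketch. It swaps the deep RL model for a tabular epsilon-greedy contextual bandit, and everything in it is hypothetical: two scene contexts, three shot types, and a table of operator ratings that stands in for real human supervision (which, as noted above, is never written down explicitly). The learner only ever sees noisy ratings of the clips it chose.

```python
import random

# Hypothetical operator ratings in [0, 1]; a stand-in for real human feedback.
HUMAN_SCORE = {
    ("open", "left"): 1.0, ("open", "right"): 0.9, ("open", "back"): 0.6,
    ("obstacle_left", "left"): 0.0, ("obstacle_left", "right"): 0.5,
    ("obstacle_left", "back"): 0.8,
}
CONTEXTS = ["open", "obstacle_left"]
SHOTS = ["left", "right", "back"]


def rate_clip(context: str, shot: str, rng: random.Random) -> float:
    """Noisy human feedback on one filmed clip."""
    return HUMAN_SCORE[(context, shot)] + rng.gauss(0.0, 0.1)


def train_shot_selector(episodes: int = 5000, epsilon: float = 0.1,
                        seed: int = 0) -> dict[str, str]:
    """Epsilon-greedy contextual bandit over (scene context, shot type) pairs."""
    rng = random.Random(seed)
    value = {key: 0.0 for key in HUMAN_SCORE}   # running mean rating per pair
    count = {key: 0 for key in HUMAN_SCORE}
    for _ in range(episodes):
        context = rng.choice(CONTEXTS)
        if rng.random() < epsilon:
            shot = rng.choice(SHOTS)                                # explore
        else:
            shot = max(SHOTS, key=lambda s: value[(context, s)])    # exploit
        reward = rate_clip(context, shot, rng)
        count[(context, shot)] += 1
        # Incremental update of the mean rating for this (context, shot) pair.
        value[(context, shot)] += (reward - value[(context, shot)]) / count[(context, shot)]
    # Greedy policy: the best-rated shot for each context.
    return {c: max(SHOTS, key=lambda s: value[(c, s)]) for c in CONTEXTS}


if __name__ == "__main__":
    print(train_shot_selector())
```

With these made-up ratings the selector should settle on a left side shot in the open and a back shot when obstacles appear on the left, qualitatively mirroring the switch in Figure 3. A bandit ignores how today's shot affects future footage; handling that sequential aspect is what the full RL formulation adds.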

Reinforcement learning and other approaches to sequential decision-making are notoriously hard to apply, even for ML experts, because of the highly complex objective functions being optimized. We need to alleviate these challenges by introducing abstractions that enable non-ML experts to infuse their knowledge into RL training. For example, a human teacher often decomposes a complex task for students. A similar ability to decompose the complex autonomous tasks we wish to teach a machine can go a long way toward solving the problem efficiently.

Figure 4 highlights the challenge of building an autonomous forklift for a warehouse in AirSim. The goal is to build autonomy into these machines so that they can automatically transfer loads from one location to another. This fairly complex autonomy task can be broken down into a number of simpler concepts, such as navigating to the load, aligning with the load, picking up the load, detecting other actors and forklifts, braking appropriately, monitoring, and keeping the battery sufficiently charged. Such decomposition helps because the individual concepts are far simpler to learn; moreover, the resulting modularity makes the autonomy more transparent and easier to debug. Enabling users to express decompositions and train them rapidly is a critical factor in designing and building autonomous systems quickly.

Figure 4 – Autonomous forklifts in a warehouse using concept decomposition.
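The forklift decomposition can be sketched as a top-level selector that hands control to one simpler concept at a time. This is an illustrative sketch only: the state fields, concept names, and toy dynamics are hypothetical, and the concepts are hand-coded stand-ins for what would be separately trained sub-policies. It does not represent any particular toolchain's API. Note how the trace of active concepts makes the behavior easy to inspect.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ForkliftState:
    """Hypothetical, heavily simplified forklift state."""
    distance_to_load: int   # grid steps to the pickup point
    aligned: bool           # forks lined up with the pallet
    carrying: bool          # load is on the forks
    obstacle_ahead: bool    # another actor or forklift detected
    battery: int            # state of charge in percent


def select_concept(s: ForkliftState) -> str:
    """Top-level selector: decide which simpler concept is in charge right now."""
    if s.obstacle_ahead:
        return "brake"
    if s.battery < 20:
        return "recharge"
    if not s.carrying and s.distance_to_load > 0:
        return "navigate_to_load"
    if not s.carrying and not s.aligned:
        return "align_with_load"
    if not s.carrying:
        return "pick_up_load"
    return "deliver"


def step(concept: str, s: ForkliftState) -> ForkliftState:
    """Toy stand-ins for trained concept policies, each solving one small problem."""
    s = replace(s, battery=s.battery - 5)              # every step drains the battery
    if concept == "brake":
        return replace(s, obstacle_ahead=False)        # assume the other actor passes
    if concept == "recharge":
        return replace(s, battery=100)
    if concept == "navigate_to_load":
        return replace(s, distance_to_load=s.distance_to_load - 1)
    if concept == "align_with_load":
        return replace(s, aligned=True)
    if concept == "pick_up_load":
        return replace(s, carrying=True)
    return s


def run(s: ForkliftState, max_steps: int = 20) -> list[str]:
    """Roll out the decomposed policy and return the trace of active concepts."""
    trace = []
    for _ in range(max_steps):
        concept = select_concept(s)
        trace.append(concept)
        if concept == "deliver":                       # delivery ends this sketch
            break
        s = step(concept, s)
    return trace


if __name__ == "__main__":
    start = ForkliftState(distance_to_load=2, aligned=False, carrying=False,
                          obstacle_ahead=True, battery=30)
    print(run(start))
```

Because each concept owns a narrow piece of behavior, a surprising action can be traced back to the concept that produced it, which is the transparency and debuggability benefit described above.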

AI-centric simulations that are modular and that interface seamlessly with a wide-ranging ML and robotics framework transform the challenge of building real-world robots from a hardware-centric task into a software-centric one. Figure 5 shows a simulation of a wind turbine in AirSim that was used to develop quadrotors for autonomous inspection. Such autonomous inspections are non-trivial because each wind turbine develops a unique physical posture due to bends, droops and twists over the course of its lifetime, so the robot has to adapt on the go. The ability to create many simultaneous simulations of such wind turbines, with physical characteristics controlled programmatically, is key to solving the problem efficiently. Not only can individual components, such as a perception module that estimates the physical properties of the turbine, be trained this way, but the entire autonomy stack can also be developed, tested, and validated across millions of unique situations.

Figure 5 – Simulation of a wind turbine in AirSim.
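Programmatic control over physical characteristics is often used for domain randomization: many environment variants are drawn from parameter ranges so that a model or autonomy stack is exercised across varied conditions. The sketch below only generates the variants. The parameter names and ranges are hypothetical, and how each configuration would be loaded into a simulator instance is deliberately not shown, since that depends on the simulator's own interface.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TurbineConfig:
    """Hypothetical physical parameters for one simulated wind turbine."""
    tower_tilt_deg: float
    blade_droop_m: tuple[float, float, float]    # tip droop per blade
    blade_twist_deg: tuple[float, float, float]  # extra twist per blade
    rotor_yaw_deg: float


def sample_turbines(n: int, seed: int = 0) -> list[TurbineConfig]:
    """Draw n turbine variants from assumed parameter ranges.

    Seeding makes each batch reproducible, so a failing scenario can be replayed.
    """
    rng = random.Random(seed)
    configs = []
    for _ in range(n):
        configs.append(TurbineConfig(
            tower_tilt_deg=rng.uniform(-1.0, 1.0),
            blade_droop_m=tuple(rng.uniform(0.0, 2.5) for _ in range(3)),
            blade_twist_deg=tuple(rng.uniform(-3.0, 3.0) for _ in range(3)),
            rotor_yaw_deg=rng.uniform(0.0, 360.0),
        ))
    return configs


if __name__ == "__main__":
    for cfg in sample_turbines(3):
        print(cfg)
```

Seeding each batch is a small but useful design choice: when the autonomy stack fails on one of thousands of variants, that exact turbine can be regenerated and replayed while debugging.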

A huge part of building autonomy is engineering the systems and integrating them with various computing and software modules. The toolchain increasingly builds on the rich Microsoft ecosystem of Azure cloud, IoT, and AI technologies to achieve the ambitious vision of robotics as software.

We thank Amir Biran, Matthew Brown, David Carmona, Tom Hirshberg, Ratnesh Madaan, Gurdeep Singh Pall, Jim Piavis, Kira Radinsky, Sebastian Scherer, Matt Travers, and Dean Zadok for their collaboration and input on this blog post.