Recent successes in machine intelligence hinge on the core computational ability to efficiently search through billions of possibilities in order to make decisions. Sequences of decisions, if successful, often suggest that computation is catching up to, or even surpassing, human intelligence. Human intelligence, on the other hand, is highly generalizable, adaptive, and robust, and exhibits characteristics that current state-of-the-art machine intelligence systems are not yet capable of producing. For example, humans are able to plan far in advance based on anticipated outcomes, even in the presence of many unknown variables. Human intelligence shines in scenarios that involve other humans and living beings, and it consistently demonstrates reasoning and meta-reasoning abilities. Human intelligence is also sympathetic, empathetic, kind, nurturing and, importantly, able to relinquish and redefine the goals of a mission for the benefit of a greater good. While almost all the work in machine intelligence focuses on “how”, the hallmark of human intelligence is the ability to ask “what” and “why”.

Our hypothesis is that emotional intelligence is key to unlocking the emergence of machines that are not only more general, robust and efficient, but also aligned with the values of humanity. The affective mechanisms in humans allow us to accomplish tasks that are far too difficult to program into or teach to current machines. For example, our sympathetic and parasympathetic responses allow us to stay safe and to be aware of danger. Our ability to recognize affect in others and imagine ourselves in their situations makes us far more effective at making appropriate decisions and navigating a complex world. Drives and affect such as hunger, curiosity, surprise, and joy enable us to regulate our own behavior and also determine the sets of goals that we wish to achieve. And finally, our ability to express our own internal state is an excellent way to signal to others and possibly influence their decision making.

Consequently, it has been hypothesized that building such emotional intelligence into a computational framework would require, at minimum, the following capabilities:

- Recognizing others’ emotions

- Responding to others’ emotions

- Expressing emotions

- Regulating and utilizing emotions in decision making

Historically, research on building emotionally intelligent machines has primarily taken the human-machine collaboration point of view and mostly focused on the first three capabilities. For example, the earliest work on affect recognition started almost three decades ago, when physiological sensors, cameras, microphones, and so on were used to detect a host of affective responses. While there is much debate about how consistently and universally people express emotions through their faces and other physiological signals, and whether these really reflect how they feel inside, researchers have successfully built algorithms to identify useful signals in the noisy world of human expressions and have demonstrated that these signals are consistent with socio-cultural norms.

The ability to take appropriate actions based on the internal cognitive state of a human is imperative for an emotionally intelligent agent. Applications such as automatic tutoring systems, mental and physical health support, and tools for improving productivity lie at the forefront of what is being pursued. The recent line of work on sequential decision making, such as contextual bandits, is slowly making gains in this rich area. Our own work, for example, shows how a system sensitive to the affective aspects of managing a diet could help subjects make good decisions.

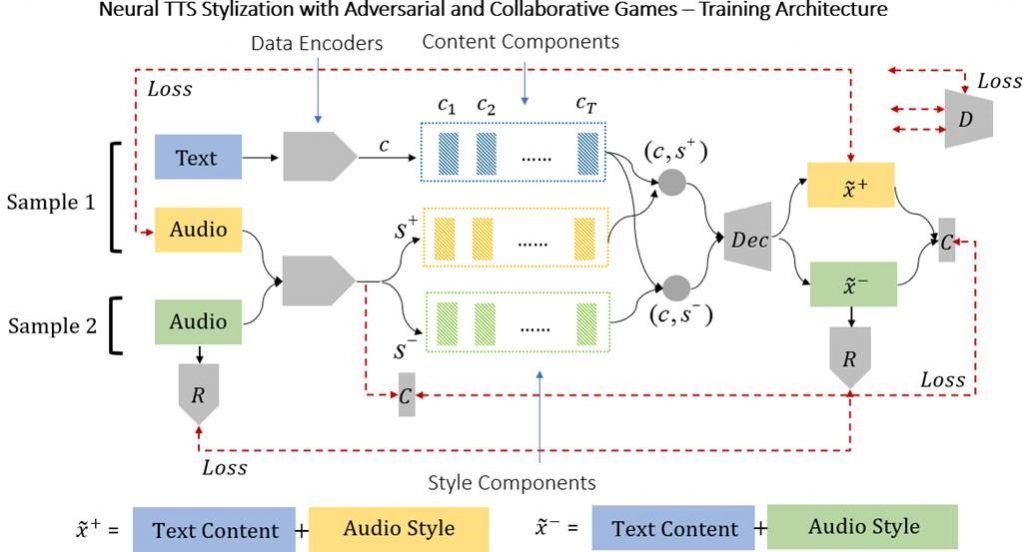

Expression of affect has been at the forefront of computing for many decades now. Even simple signals (for example, light, color, sound) have the ability to convey and provoke rich emotion. In “Neural TTS Stylization with Adversarial and Collaborative Games” (co-authored with Shuang Ma and Yale Song), to be presented at the Seventh International Conference on Learning Representations (ICLR 2019), we propose a new machine learning approach to synthesizing realistic, human-sounding speech that is expressive. This architecture challenges the model to generate realistic-sounding speech that is faithful to the textual content while maintaining an easily controllable dial for changing the expressed emotion independently of that content. Our model achieves state-of-the-art results across multiple tasks, including style transfer (content and style swapping), emotion modeling, and identity transfer (fitting a new speaker’s voice). An open-source implementation is available with the paper.


Figure 1-Our neural architecture uses a combination of adversarial and collaborative approaches. The algorithm receives two audio samples during each training step and has to produce two samples: one is a reconstruction of the first audio sample (i.e., it has both the content and style of sample 1), and the other has the content of sample 1 and the style of sample 2. In doing so, it creates internal representations of content and style that are disambiguated from each other.

While the recognition, expression and intervention aspects of artificially emotionally intelligent systems have been studied in depth over the past 20 years, there is a still more compelling form of intelligence: a system that uses affective mechanisms effectively in order to learn better and make choices efficiently. In our most recent line of work, we explore how to build such affective mechanisms so that our computational processes can achieve more than they currently do.

Our recent work, also appearing at ICLR 2019, explores the idea of affect-based intrinsic motivations that can aid in learning decision-making mechanisms. Much of the recent success in artificial intelligence in solving games such as Go, Pac-Man, and text-based RPGs relies on reinforcement learning, where good actions are rewarded and bad actions are penalized. However, a computational agent in such an action-reward framework requires a large number of trials to learn a reasonable policy. The intuition behind our proposal is to take inspiration from how humans and other living beings leverage affective mechanisms to learn much more efficiently.
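To make the action-reward loop concrete, here is a minimal sketch of tabular Q-learning on a hypothetical five-cell corridor in which only the final cell pays a reward. The environment, the hyperparameters, and every name in the code are illustrative assumptions and are not taken from the paper. The point is only that a sparse reward has to propagate backwards through the value table one step at a time, which is why many trials are needed.

```python
import random

N_STATES = 5          # hypothetical corridor: cells 0..4, goal at cell 4
ACTIONS = (-1, +1)    # step left or step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # illustrative hyperparameters


def step(state, action):
    """Move within the corridor; reward 1.0 only on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes, seed=0):
    """Run epsilon-greedy tabular Q-learning and return the Q-table."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < EPSILON or q[state][0] == q[state][1]:
                a = rng.randrange(2)  # explore, or break ties randomly
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Good outcomes raise the estimate for (state, action); the
            # discounted value of the next state carries reward backwards.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
    return q


q_table = train(episodes=200)
greedy = ["left" if left > right else "right" for left, right in q_table[:-1]]
print(greedy)
```

Early episodes in this toy setting are close to a random walk, because the agent receives no feedback at all until it happens to reach the goal.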

As a human learns to navigate the world, the body’s (nervous system’s) responses provide constant intrinsic feedback about the potential consequences of action choices, for example, becoming nervous when close to a cliff’s edge or when driving fast around a bend. Physiological changes are correlated with these biological preparations to protect oneself from danger. The anticipatory response in humans to a threatening situation is for the heart rate to increase, for heart rate variability to decrease, for blood to be diverted from the extremities, and for the sweat glands to dilate. This is the body’s “fight or flight” response. Humans have evolved over millions of years to build up these complex systems. What if machines had similar feedback systems?

Figure 2-Visceral Machines are a novel approach to reinforcement learning that leverages neural networks trained on physiological signals to mimic autonomic nervous system responses. These signals are then used as intrinsic reward mechanisms to train agents that can learn to accomplish various tasks.

In “Visceral Machines: Risk-Aversion in Reinforcement Learning with Intrinsic Physiological Rewards,” we propose a novel approach to reinforcement learning that leverages an intrinsic reward function trained on human fight or flight behavior.

Our hypothesis is that such reward functions can circumvent the challenges associated with sparse and skewed rewards in reinforcement learning settings and can help improve sample efficiency. In our case, extrinsic rewards from events are not necessary for the agent to learn. We test this in a simulated driving environment and show that it can increase the speed of learning and reduce the number of collisions during the learning stage. We are excited about the potential of training autonomous systems that mimic the ability to feel and respond to stimuli in an emotional way.
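One way to picture how an intrinsic signal might sit alongside a task reward is a weighted sum. The sketch below is an illustration under stated assumptions, not the paper's implementation: `arousal_proxy` is a hypothetical hand-written stand-in for the neural network trained on physiological signals, and the weighting parameter `lam` and the distance scale are illustrative choices.

```python
import math


def arousal_proxy(distance_to_obstacle, scale=2.0):
    """Hypothetical stand-in for a learned physiological-response model.

    Returns a value in (0, 1] that rises as the obstacle gets closer,
    loosely mimicking an anticipatory "fight or flight" response.
    """
    return math.exp(-max(distance_to_obstacle, 0.0) / scale)


def intrinsic_reward(distance_to_obstacle):
    """Penalize predicted arousal so that risky states score lower."""
    return -arousal_proxy(distance_to_obstacle)


def blended_reward(extrinsic, distance_to_obstacle, lam=0.5):
    """Weighted sum of the task reward and the intrinsic reward.

    lam = 1.0 uses only the extrinsic reward; lam = 0.0 uses only the
    intrinsic signal, the setting in which no event-based reward is needed.
    """
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    return lam * extrinsic + (1.0 - lam) * intrinsic_reward(distance_to_obstacle)


# A collision is a rare event (sparse extrinsic reward), while the
# intrinsic signal gives graded feedback at every step before it happens.
for distance in (10.0, 5.0, 1.0, 0.0):
    extrinsic = -1.0 if distance == 0.0 else 0.0
    print(distance, round(blended_reward(extrinsic, distance, lam=0.5), 3))
```

With `lam` set to 0.0 the agent would be driven entirely by the intrinsic term, which still distinguishes a near miss from a safe distance even though neither produces an extrinsic event.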

Figure 3-An example of the physiological response (blood volume pulse) recorded from a human during a driving task. A zoomed-in section of the pulse wave is shown with frames from the driver's view. Note how the pulse wave pinches between seconds 285 and 300; during this period the driver collided with a wall while turning sharply to avoid another obstacle. The pinching begins before the collision occurs, as the driver’s anticipatory response is activated. The intrinsic reward component aims to recreate statistical properties of the blood volume pulse wave during driving in the simulated environment.
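The "pinching" visible in Figure 3 can be thought of as a drop in the peak-to-trough amplitude of the pulse wave. The following sketch shows one simple way to measure that on a synthetic signal. The sine-wave stand-in, the sampling rate, the window length, and the 75% threshold are all assumptions made for illustration; this is not the processing used in the paper.

```python
import math

SAMPLE_RATE_HZ = 50      # assumed sampling rate for the synthetic signal
PULSE_RATE_HZ = 1.2      # assumed pulse frequency (about 72 beats per minute)


def synthetic_pulse(duration_s, pinch_start_s, pinch_end_s):
    """Sine-wave stand-in for a pulse wave; amplitude halves while pinched."""
    samples = []
    for i in range(int(duration_s * SAMPLE_RATE_HZ)):
        t = i / SAMPLE_RATE_HZ
        amplitude = 0.5 if pinch_start_s <= t < pinch_end_s else 1.0
        samples.append(amplitude * math.sin(2 * math.pi * PULSE_RATE_HZ * t))
    return samples


def windowed_amplitude(samples, window_s=5.0):
    """Peak-to-trough amplitude of each non-overlapping window."""
    size = int(window_s * SAMPLE_RATE_HZ)
    return [
        max(samples[i:i + size]) - min(samples[i:i + size])
        for i in range(0, len(samples) - size + 1, size)
    ]


signal = synthetic_pulse(duration_s=30, pinch_start_s=15, pinch_end_s=20)
amplitudes = windowed_amplitude(signal)
# Flag windows whose amplitude falls well below the largest one observed.
pinched = [i for i, a in enumerate(amplitudes) if a < 0.75 * max(amplitudes)]
print(pinched)
```

Here the only flagged window is the one covering seconds 15 to 20 of the synthetic signal, the interval in which the amplitude was deliberately reduced.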

Many computer scientists and roboticists have aspired to build agents that resemble memorable characters in popular science fiction, such as KITT and R2D2. However, rich opportunities exist for building holistic affective computing mechanisms that go a step further and help us build robust, efficient and non-myopic artificial intelligence. We hope that this work inspires a fresh look at how emotions can be used in artificial intelligence.

We hope to see you at ICLR in New Orleans in May and look forward to sharing ideas and advancing the conversation on the possibilities in the exciting research realm of emotionally intelligent agents.