Project Turing

A deep learning initiative inside Microsoft to build best-in-class models for use by Microsoft and to power AI applications across the entire Microsoft product family (Word, PowerPoint, Office, Dynamics,…

AI at Scale is an applied research initiative that works to evolve Microsoft products with the adoption of deep learning for both natural language text and image processing.…

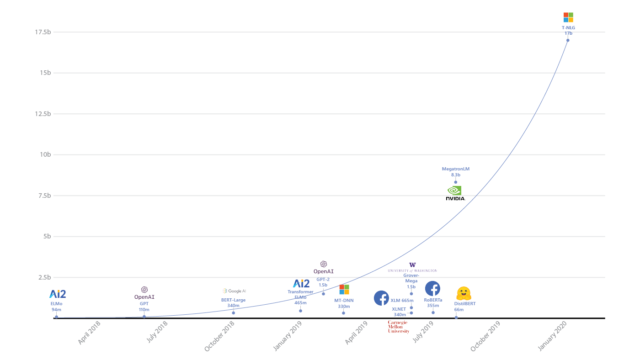

We are excited to introduce the DeepSpeed- and Megatron-powered Megatron-Turing Natural Language Generation model (MT-NLG), the largest and the most powerful monolithic transformer language model trained to date,…

This figure was adapted from a similar image published in DistilBERT.

Turing Natural Language Generation (T-NLG) is a 17-billion-parameter language model by Microsoft that outperforms the…

As the CVP leading the Turing team within the M365 organization at Microsoft, I work with a group of AI enthusiasts who are passionate about making a practical impact. We spearhead various deep learning efforts as part of Project Turing and MSAI, developing state-of-the-art models and frontier generative AI. Our innovations include Microsoft Copilot, which revolutionizes interaction and collaboration with machines, and the Turing family of foundational models, widely adopted across Microsoft products. We also incorporate semantic search and vector indexing into Microsoft 365 applications, transforming enterprise data navigation. Our models drive AI solutions for linguistic, visual, and multimodal challenges across Microsoft’s product line, from M365 to Bing to Windows to Edge, working with strategic platform partners and industry specialists to maximize performance, boost training efficiency, and minimize inference cost.

Today, we are excited to announce that with our latest Turing universal language representation model (T-ULRv5), a Microsoft-created model is once again the state of the art and at the top of the Google XTREME public leaderboard. Resulting from a…

Today, we are happy to announce that the Turing multilingual language model (T-ULRv2) is the state of the art and at the top of the Google XTREME public leaderboard. Created by the Microsoft Turing team in collaboration with Microsoft Research, the model…

This paper presents the GEneric iNtent Encoder (GEN Encoder), which learns a distributed representation space for user intent in search. Leveraging large-scale user clicks from Bing search logs as weak supervision of user intent, GEN Encoder learns to map queries…

Transformers have achieved significant success in modeling natural language as a sequence of text tokens. However, in many real-world scenarios, textual data inherently exhibits structures beyond a linear sequence, such as trees and graphs; many tasks require reasoning with evidence…

This paper studies a new scenario in conversational search, conversational question suggestion, which leads search engine users to more engaging experiences by suggesting interesting, informative, and useful follow-up questions. We first establish a novel evaluation metric, usefulness, which goes beyond…

Deep neural networks have recently shown promise in the ad-hoc retrieval task. However, such models have often been based on one field of the document, for example considering document title only or document body only. Since in practice documents typically…