Why does AI at Scale matter?

Microsoft’s AI at Scale initiative is pioneering a new approach to next-generation AI capabilities that scale across the company’s products and AI platforms. Building on years of systems work by Microsoft researchers, particularly in parallel computation, AI at Scale makes it possible to train machine learning models quickly and at unprecedented scale. This includes developing a new class of large, centralized AI models that can be scaled and specialized across product domains, as well as creating state-of-the-art hardware and infrastructure to power these models.

ONNX Integration

AI at Scale capabilities, including DeepSpeed, have been integrated into ONNX Runtime, the runtime for ONNX (Open Neural Network Exchange) models, adding distributed training support for machine learning models that is both framework-agnostic and hardware-agnostic.

Project Parasail

Project Parasail is pioneering a novel approach to parallelizing a large class of seemingly sequential applications, particularly stochastic gradient descent (SGD).
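To illustrate why SGD looks sequential yet can still be parallelized, consider a linear least-squares model. Each SGD step w ← w − α(x·w − y)x is an affine function of w, so a whole chunk of steps can be summarized as a matrix M and offset b (w_out = M·w_in + b) without knowing the starting model. Chunks can therefore be summarized independently and chained afterwards. This is a minimal sketch under that linear-model assumption; the function names are hypothetical and it is not Parasail's implementation.

```python
# Sketch: parallelizing SGD for linear least squares via affine "combiners".
# Assumption: squared loss on a linear model, so every SGD step is affine in w.
from concurrent.futures import ThreadPoolExecutor

Vector = list[float]
Matrix = list[list[float]]


def identity(n: int) -> Matrix:
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def mat_vec(m: Matrix, v: Vector) -> Vector:
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def sgd_step(w: Vector, x: Vector, y: float, lr: float) -> Vector:
    # One ordinary SGD step on the loss 0.5 * (x.w - y)^2.
    err = sum(xi * wi for xi, wi in zip(x, w)) - y
    return [wi - lr * err * xi for wi, xi in zip(w, x)]


def chunk_combiner(chunk, lr: float, n: int):
    """Summarize a chunk of SGD steps as w_out = M @ w_in + b, valid for ANY w_in.

    Each step maps w -> (I - lr * x x^T) w + lr * y * x, so we apply that map
    to the matrix M and to the offset b.
    """
    m, b = identity(n), [0.0] * n
    for x, y in chunk:
        # M <- (I - lr x x^T) M  ==  M - lr * x (x^T M)
        xt_m = [sum(x[i] * m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] - lr * x[i] * xt_m[j] for j in range(n)] for i in range(n)]
        # b <- (I - lr x x^T) b + lr * y * x, which is just an SGD step applied to b
        b = sgd_step(b, x, y, lr)
    return m, b


def parallel_sgd(w0: Vector, data, lr: float, num_chunks: int) -> Vector:
    n = len(w0)
    size = (len(data) + num_chunks - 1) // num_chunks
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    # Phase 1: summarize chunks independently of the starting model.
    # (Threads only show the independence; Python's GIL means real speedups
    # would need processes or other hardware.)
    with ThreadPoolExecutor() as pool:
        combiners = list(pool.map(lambda c: chunk_combiner(c, lr, n), chunks))
    # Phase 2: cheaply chain the affine maps in data order.
    w = list(w0)
    for m, b in combiners:
        w = [mv + bi for mv, bi in zip(mat_vec(m, w), b)]
    return w


data = [([1.0, 0.5], 2.0), ([0.3, -1.0], -0.5), ([2.0, 1.0], 3.0), ([-1.0, 0.2], -0.8)]
w_seq = [0.0, 0.0]
for x, y in data:
    w_seq = sgd_step(w_seq, x, y, lr=0.1)
w_par = parallel_sgd([0.0, 0.0], data, lr=0.1, num_chunks=2)
print(w_seq, w_par)
```

The two results agree up to floating-point rounding. The catch is cost: each combiner update is O(n²) rather than O(n) for a plain SGD step, so an exact combiner like this only pays off for small models, and a practical system has to reduce that overhead, for example by approximating M.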

Project Fiddle

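Project Fiddle explores pipeline parallelism, a novel approach to model training that overcomes both the higher communication costs of data parallelism and the hardware resource inefficiency of model parallelism. In data parallelism, every worker holds a full copy of the model and must exchange gradients for all of its parameters at every step; in model parallelism, the model is split across devices, but a naive schedule keeps only one device busy at a time. Pipelining splits the model into stages and streams several batches of inputs through them so that the stages work concurrently.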
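The benefit of pipelining can be seen in a simple forward-pass schedule. The sketch below uses a generic GPipe-style fill-and-drain schedule: a batch is split into micro-batches, and stage s works on micro-batch m at time step s + m. It is an illustration with hypothetical names, not necessarily the schedule Project Fiddle uses.

```python
from typing import Optional


def pipeline_schedule(num_stages: int, num_microbatches: int) -> list[list[Optional[int]]]:
    """Return grid[t][s] = micro-batch handled by stage s at time step t (None if idle)."""
    total_steps = num_stages + num_microbatches - 1
    grid = []
    for t in range(total_steps):
        row = []
        for s in range(num_stages):
            m = t - s  # stage s receives micro-batch m one step after stage s-1 finishes it
            row.append(m if 0 <= m < num_microbatches else None)
        grid.append(row)
    return grid


def bubble_fraction(num_stages: int, num_microbatches: int) -> float:
    """Fraction of (time step, stage) slots left idle while the pipeline fills and drains."""
    grid = pipeline_schedule(num_stages, num_microbatches)
    idle = sum(cell is None for row in grid for cell in row)
    return idle / (len(grid) * num_stages)


for t, row in enumerate(pipeline_schedule(num_stages=4, num_microbatches=6)):
    print(f"t={t}: " + " ".join("--" if m is None else f"m{m}" for m in row))
print(f"idle fraction: {bubble_fraction(4, 6):.2f} (vs {bubble_fraction(4, 1):.2f} unpipelined)")
```

With a single micro-batch the idle fraction is (S − 1)/S for S stages, which is the hardware inefficiency of plain model parallelism. With M micro-batches it shrinks to (S − 1)/(S + M − 1), so adding micro-batches keeps more stages busy.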

DeepSpeed for large model training

DeepSpeed is an open-source, PyTorch-compatible library that vastly improves large model training in scale, speed, cost, and usability. It unlocks the ability to train models with over 100 billion parameters, enabling breakthroughs in areas such as natural language processing (NLP) and multi-modality (combining language with other types of data, such as images, video, and speech).
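A back-of-the-envelope estimate shows why models of this size need dedicated system support. The sketch below assumes mixed-precision training with the Adam optimizer, which keeps about 16 bytes of model state per parameter (fp16 weights and gradients plus fp32 master weights, momentum, and variance). This is the accounting used in the ZeRO work behind DeepSpeed; activations and temporary buffers are ignored. Partitioning these states across data-parallel GPUs, rather than replicating them, divides the per-GPU footprint. The helper name is hypothetical.

```python
# Rough per-GPU memory for model states in mixed-precision Adam training.
# Assumption: 2 B fp16 weights + 2 B fp16 gradients + 12 B fp32 optimizer state
# (master weights, momentum, variance) per parameter; activations are ignored.
BYTES_PER_PARAM = 2 + 2 + 4 + 4 + 4
GB = 1e9


def model_state_bytes(num_params: float, num_gpus: int = 1, partitioned: bool = False) -> float:
    """Per-GPU bytes for model states; if partitioned, states are split evenly across GPUs."""
    total = num_params * BYTES_PER_PARAM
    return total / num_gpus if partitioned else total


for params in (1.5e9, 17e9, 100e9):
    replicated = model_state_bytes(params) / GB
    sharded = model_state_bytes(params, num_gpus=64, partitioned=True) / GB
    print(f"{params / 1e9:>6.1f}B params: {replicated:>8.1f} GB per GPU replicated, "
          f"{sharded:>6.1f} GB per GPU partitioned over 64 GPUs")
```

Under these assumptions a 100-billion-parameter model needs about 1.6 TB of model state if every GPU holds a full replica, far beyond a single accelerator, while partitioning over 64 GPUs brings it to 25 GB per GPU.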

Advances in natural language processing

Turing Natural Language Generation (T-NLG) is a 17-billion-parameter language model that outperforms the state of the art on many downstream NLP tasks. In particular, it can enhance the Microsoft Office experience through writing assistance and by answering reader questions, paving the way for more fluent digital assistants.

On the multi-modal language-image front, we’ve significantly outperformed the state of the art on downstream language-image tasks (e.g., visual search) with Oscar (Object-Semantics Aligned Pre-training).

Recently, pre-trained models such as Unicoder, M-BERT, and XLM have been developed to learn multilingual representations for cross-lingual and multilingual tasks. By performing masked language modeling, translation language modeling, and other bilingual pre-training tasks on multilingual and bilingual corpora, with vocabulary and weights shared across languages, these models achieve surprisingly good cross-lingual capability. However, the community still lacks benchmark datasets to evaluate this capability. To help researchers further advance language-agnostic models and make AI systems more inclusive, the XGLUE dataset lets them test a language model’s zero-shot cross-lingual transfer capability: its ability to transfer what it learned in English to the same task in other languages.
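Masked language modeling (MLM) hides a random subset of tokens and trains the model to recover them. Translation language modeling (TLM), introduced with XLM, applies the same masking to a sentence concatenated with its translation, so the model can use context in one language to predict masked words in the other. The sketch below builds such training inputs from pre-tokenized text. It is simplified: the 15% masking rate follows BERT, while the special tokens and function names are assumptions. Real implementations also sometimes substitute random or unchanged tokens for selected positions and add language and position embeddings, all omitted here.

```python
import random

MASK, SEP = "[MASK]", "[SEP]"  # hypothetical special tokens


def mask_tokens(tokens, rate=0.15, rng=None):
    """Return (inputs, labels); labels keep the original token at masked positions, else None."""
    rng = rng or random.Random()
    candidates = [i for i, t in enumerate(tokens) if t != SEP]  # never mask the separator
    k = min(len(candidates), max(1, round(rate * len(candidates))))
    chosen = set(rng.sample(candidates, k))
    inputs = [MASK if i in chosen else t for i, t in enumerate(tokens)]
    labels = [t if i in chosen else None for i, t in enumerate(tokens)]
    return inputs, labels


def mlm_example(sentence, rng=None):
    # MLM: monolingual text only.
    return mask_tokens(sentence, rng=rng)


def tlm_example(source, target, rng=None):
    # TLM: concatenate a sentence and its translation, then mask across both, so a
    # masked English word can be predicted from the French context and vice versa.
    return mask_tokens(source + [SEP] + target, rng=rng)


en = "the cat sits on the mat".split()
fr = "le chat est assis sur le tapis".split()
inputs, labels = tlm_example(en, fr, rng=random.Random(0))
print(inputs)
print(labels)
```

Because TLM draws masked positions from both halves of the pair, the model is pushed to align representations across the two languages, which is the property zero-shot cross-lingual transfer relies on.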

We are incorporating these breakthroughs into the company’s products, including Bing, Office, Dynamics, and Xbox. Read this blog post to learn more.

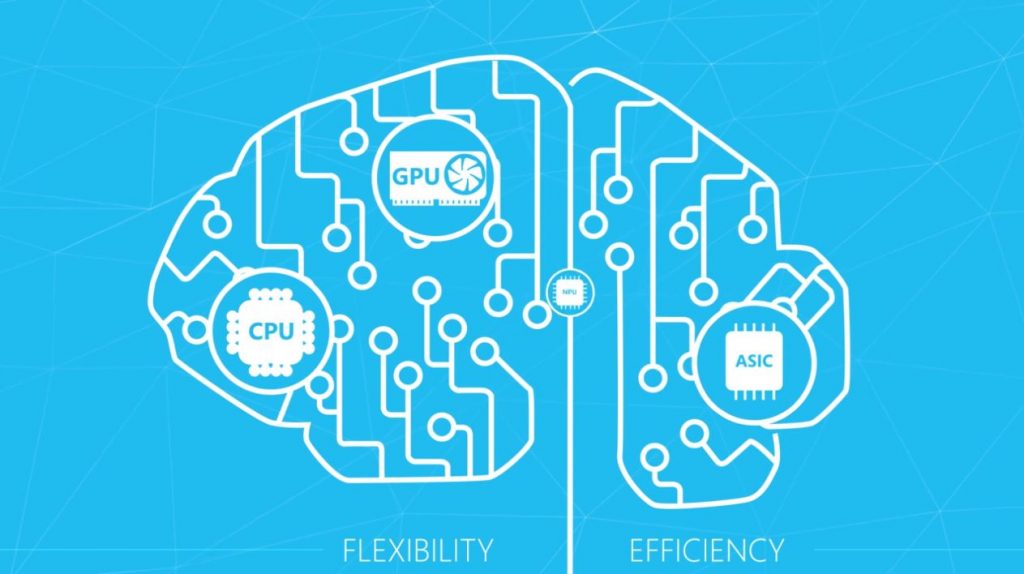

New hardware for deep learning

Project Brainwave’s hardware features a soft Neural Processing Unit (NPU) built on high-performance field-programmable gate arrays (FPGAs). The NPU accelerates deep neural network (DNN) inference, making it well suited to computer vision and natural language processing applications. This approach is transforming computing by augmenting CPUs with an interconnected, configurable compute layer composed of programmable silicon.

With a high-performance, precision-adaptable FPGA soft processor, Microsoft datacenters can serve pre-trained DNN models with high efficiency even at low batch sizes.
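Precision adaptability means trading numeric precision for throughput and on-chip storage. One common family of narrow formats is block floating point, in which a block of values shares a single exponent and each value keeps only a few mantissa bits. The sketch below quantizes a vector this way with a configurable mantissa width. It is a generic illustration with a hypothetical function name, not Brainwave's exact numeric format.

```python
import math


def bfp_quantize(values, mantissa_bits=4, block_size=8):
    """Quantize to block floating point and return the dequantized floats.

    Each block shares one exponent derived from its largest magnitude; every value
    is rounded to a signed integer mantissa of `mantissa_bits` bits (including sign).
    """
    qmax = 2 ** (mantissa_bits - 1) - 1  # largest mantissa magnitude, e.g. 7 for 4 bits
    out = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        max_abs = max(abs(v) for v in block)
        if max_abs == 0.0:
            out.extend(0.0 for _ in block)
            continue
        # Shared exponent: max_abs = f * 2**exp with 0.5 <= f < 1.
        exp = math.frexp(max_abs)[1]
        # The step is a power of two, so only `exp` needs to be stored per block.
        step = 2.0 ** (exp - (mantissa_bits - 1))
        for v in block:
            q = max(-qmax, min(qmax, round(v / step)))  # clamp to the mantissa range
            out.append(q * step)
    return out


weights = [0.5, -0.25, 0.1, 3.0, -1.7, 0.0, 2.2, 0.05]
for bits in (4, 8):
    approx = bfp_quantize(weights, mantissa_bits=bits)
    worst = max(abs(a - b) for a, b in zip(weights, approx))
    print(f"{bits}-bit mantissas: {approx} (max error {worst:.4f})")
```

With 4-bit mantissas the small values in the block round to zero because they share an exponent with the largest value; widening the mantissa to 8 bits recovers them at the cost of more storage and wider arithmetic. Choosing that width per model is the kind of trade-off a precision-adaptable processor can make.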

By exploiting FPGAs on a datacenter-scale compute fabric, a single DNN model can be deployed as a scalable hardware microservice that spans multiple FPGAs, creating web-scale services that can process massive amounts of dynamic data.
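Spreading one model over several FPGAs requires deciding which layers run on which device. A minimal sketch, assuming each device can hold a fixed amount of weight memory and layers are assigned in order so activations flow from device to device, is a greedy contiguous partition. The layer sizes, capacity, and function name are hypothetical; this is not Brainwave's actual placement algorithm.

```python
def partition_layers(layer_sizes, capacity):
    """Greedily pack consecutive layers onto devices without exceeding `capacity` per device.

    Returns a list of devices, each a list of layer indices. Raises ValueError if a
    single layer is larger than one device can hold.
    """
    devices, current, used = [], [], 0
    for i, size in enumerate(layer_sizes):
        if size > capacity:
            raise ValueError(f"layer {i} ({size}) exceeds device capacity {capacity}")
        if used + size > capacity:
            devices.append(current)  # this device is full; move on to the next one
            current, used = [], 0
        current.append(i)
        used += size
    if current:
        devices.append(current)
    return devices


# Hypothetical per-layer weight sizes (MB) for an 8-layer model, 30 MB per device.
layers = [10, 12, 8, 20, 5, 15, 9, 14]
plan = partition_layers(layers, capacity=30)
for d, idxs in enumerate(plan):
    print(f"FPGA {d}: layers {idxs}, {sum(layers[i] for i in idxs)} MB")
```

When layers must stay in order, filling each device greedily uses the fewest devices possible; balancing per-device latency instead would call for a different objective.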

Spell correction at scale

Customers around the world use Microsoft products in over 100 languages, yet most of these languages lack high-quality spell correction. This limits customers’ ability to search for information on the web and in the enterprise, and even to author content. With AI at Scale, we combined deep learning with language families to solve this problem, building what we believe is the most comprehensive spelling correction system ever, in terms of both language coverage and accuracy.