VROOM (Virtual Robotic Overlay for Online Meetings) is a prototype telepresence system that puts belonging at the center of the experience. It aims to help a remote individual feel that a remote physical place belongs to them as much as it does to the local people in it, and to help local people feel that the remote individual belongs with them in the same physical space. VROOM is a bi-directional, asymmetric XR telepresence system that combines AR, VR, robots, and 360° cameras. See the VROOM video on YouTube.

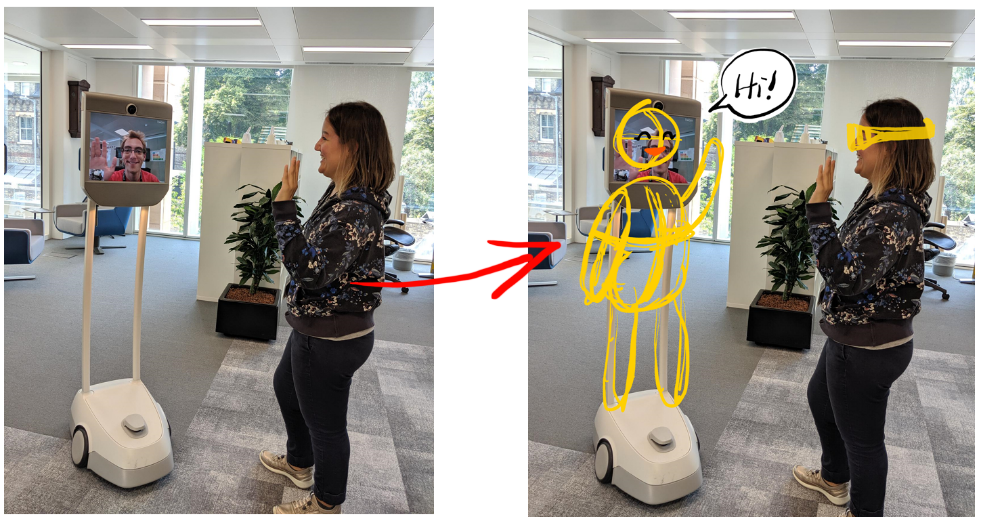

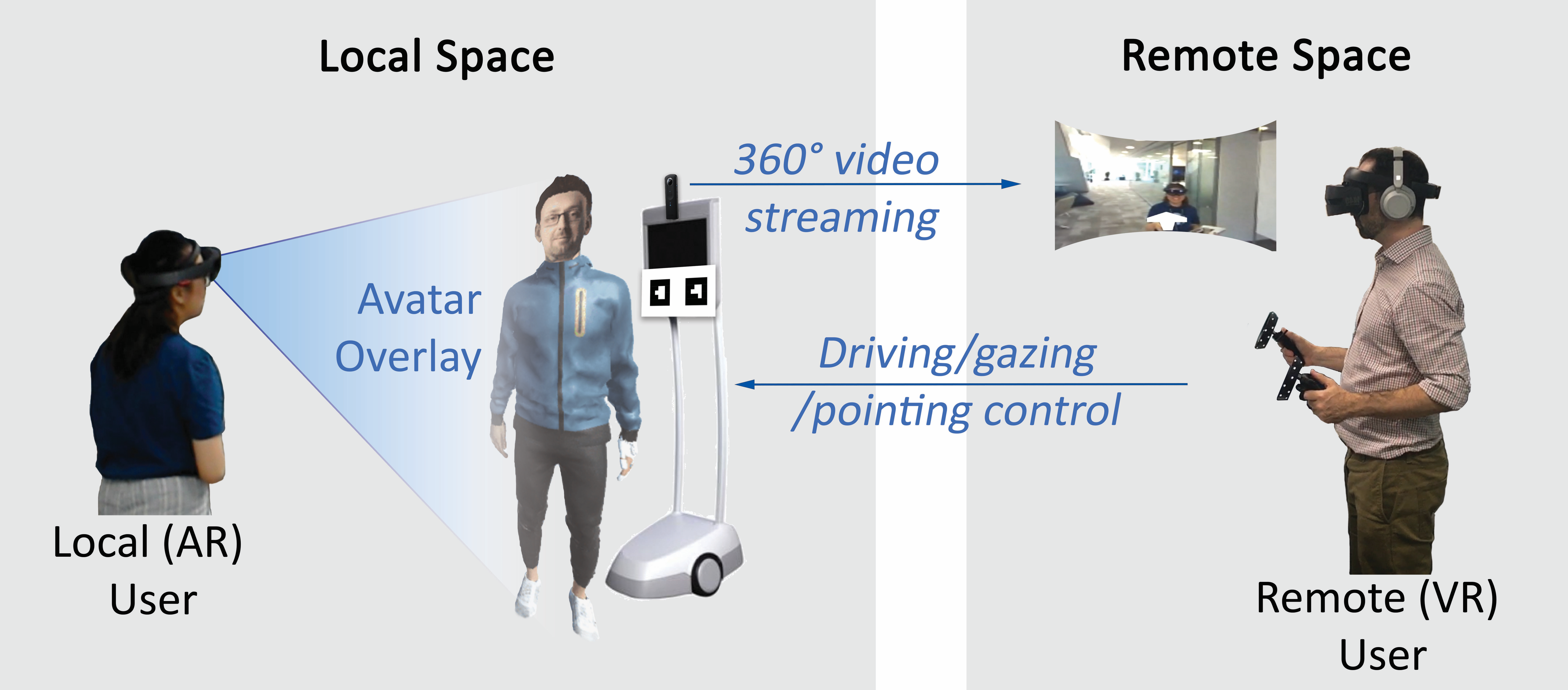

For a person in the local activity space wearing a HoloLens, an augmented reality (AR) interface shows a life-size avatar of the remote user. The avatar is overlaid on a Beam telepresence robot with the help of tracker markers. The Beam is also equipped with an additional 360° camera.
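The marker-based overlay described above can be illustrated with a small pose composition. This is only a minimal sketch under stated assumptions, not VROOM's actual implementation: the `Pose` type, the `avatar_pose_from_marker` function, the z-up coordinate convention, and the offset values are all hypothetical. It shows how an avatar could be placed in the world by combining a detected marker pose with a fixed marker-to-avatar offset.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Position in metres plus heading; z is up (an assumed convention)."""
    x: float
    y: float
    z: float
    yaw: float  # rotation about the vertical axis, in radians


def avatar_pose_from_marker(marker: Pose, offset: Pose) -> Pose:
    """Compose the marker's world pose with a fixed marker-to-avatar offset.

    `offset` is expressed in the marker's own frame and stands in for a
    hypothetical calibration value measured once for the robot.
    """
    c, s = math.cos(marker.yaw), math.sin(marker.yaw)
    return Pose(
        # Rotate the offset into the world frame, then translate.
        x=marker.x + c * offset.x - s * offset.y,
        y=marker.y + s * offset.x + c * offset.y,
        z=marker.z + offset.z,
        yaw=marker.yaw + offset.yaw,
    )


# Marker detected 1.2 m above the floor, robot turned 90 degrees.
marker = Pose(x=2.0, y=1.0, z=1.2, yaw=math.pi / 2)
# Assumed offset: avatar feet sit 1.2 m below and 0.1 m behind the marker.
offset = Pose(x=-0.1, y=0.0, z=-1.2, yaw=0.0)
avatar = avatar_pose_from_marker(marker, offset)
```

Recomputing this placement every time the marker is re-detected would keep the avatar attached to the robot as it drives around. A real AR system would use full 3D rotations rather than a single yaw angle.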

For the remote user, a head-mounted virtual reality (VR) interface presents an immersive 360° view of the local space. The remote user also has VR controllers, which allow for both piloting and gestural expression. The remote user therefore has independent mobile and visual autonomy in the local activity space: moving the robot and looking around are decoupled.

The remote user’s speech, head pose, and hand movements are applied to the avatar, along with canned blink, idle, and walking animations. The local user sees the entire avatar body in a third-person view, while the remote user sees the avatar from the shoulders down in a first-person view. Local users can thus identify the remote user as a specific person, the remote user has an identifiable embodiment of self, and both can take advantage of naturalistic verbal and gestural communication modalities.
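The mapping from tracked input to avatar state described above could be sketched roughly as follows. This is an illustrative sketch, not the project's code: `TrackedInput`, `avatar_frame`, and every field name are hypothetical, and tying the walking animation to robot motion is an assumption rather than something the description states.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class TrackedInput:
    """One frame of data from the remote user's VR headset and controllers."""
    head_rotation: Vec3     # pitch, yaw, roll of the headset
    left_hand: Vec3         # controller positions
    right_hand: Vec3
    is_speaking: bool
    robot_is_moving: bool   # assumption: used to pick the walking animation


def avatar_frame(inp: TrackedInput, viewer: str) -> dict:
    """Build the avatar state for one frame, as seen by `viewer`.

    `viewer` is "local" (third-person, full body) or "remote"
    (first-person, shoulders down, so the head is hidden).
    """
    if viewer not in ("local", "remote"):
        raise ValueError(f"unknown viewer: {viewer!r}")
    return {
        # Live tracked data drives the head and hands directly.
        "head_rotation": inp.head_rotation,
        "left_hand": inp.left_hand,
        "right_hand": inp.right_hand,
        "speaking": inp.is_speaking,
        # Canned animations fill in what is not tracked.
        "body_animation": "walking" if inp.robot_is_moving else "idle",
        "blink_enabled": True,
        # Only the local, third-person view renders the avatar's head.
        "show_head": viewer == "local",
    }


frame_input = TrackedInput(
    head_rotation=(0.0, 15.0, 0.0),
    left_hand=(-0.3, 1.1, 0.4),
    right_hand=(0.3, 1.2, 0.4),
    is_speaking=True,
    robot_is_moving=False,
)
local_view = avatar_frame(frame_input, "local")
remote_view = avatar_frame(frame_input, "remote")
```

Both views share the same tracked state and differ only in which body parts are rendered, which matches the third-person and first-person split described above.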

Thanks to our Microsoft colleagues for helping develop VROOM: James Scott, Xu Cao, He Huang, Minnie Liu, Zhao Jun, Matthew Gan, and Leon Lu.