Learning to Code: Machine Learning for Program Induction

The task of synthesizing programs given only example input-output behaviour is experiencing a surge of interest in the machine learning community. We present two directions for applying machine learning ideas to this task. First we…
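To make the task concrete, here is a minimal sketch of the simplest baseline, exhaustive enumeration. It searches a hypothetical toy DSL of integer primitives for the shortest program that reproduces every input-output example. It is not one of the machine-learning directions the talk presents. The DSL, `run` and `synthesize` are assumptions for illustration.

```python
import itertools

# Hypothetical toy DSL: each primitive maps an int to an int.
PRIMITIVES = {
    "inc": lambda x: x + 1,
    "dec": lambda x: x - 1,
    "double": lambda x: 2 * x,
    "square": lambda x: x * x,
    "neg": lambda x: -x,
}


def run(program, x):
    """Apply the primitives in `program` from left to right."""
    for name in program:
        x = PRIMITIVES[name](x)
    return x


def synthesize(examples, max_len=3):
    """Return the shortest program consistent with all (input, output) pairs, or None."""
    for length in range(1, max_len + 1):
        # Enumerate every sequence of primitives of this length, shortest first.
        for program in itertools.product(PRIMITIVES, repeat=length):
            if all(run(program, i) == o for i, o in examples):
                return list(program)
    return None


# Target behaviour: f(x) = 2 * (x + 1)
print(synthesize([(1, 4), (2, 6), (5, 12)]))  # prints ['inc', 'double']
```

The search space grows exponentially with program length, which is why blind enumeration only scales to very short programs.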

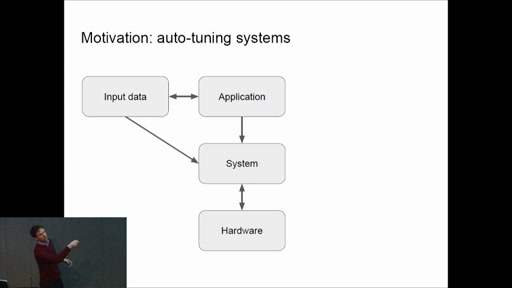

Bayesian optimisation in many dimensions with bespoke models

Bayesian optimisation (BO) is an optimisation method which incrementally builds a statistical model of the objective function to refine its search. Unfortunately, due to the curse of dimensionality, BO can fail to converge in problems…
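The basic loop described here can be sketched in one dimension: fit a statistical model, pick the next point, evaluate it, and refit. This is a generic sketch, not the bespoke high-dimensional models of the talk. It assumes a zero-mean Gaussian-process surrogate with a fixed RBF kernel, an upper-confidence-bound (UCB) acquisition rule, and a grid of candidates on [0, 1]. All function names and settings are hypothetical.

```python
import math


def rbf(a, b, length=0.3):
    """Squared-exponential kernel with unit signal variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(K):
    """Lower-triangular L with L L^T = K, for symmetric positive definite K."""
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(K[i][i] - s)
            else:
                L[i][j] = (K[i][j] - s) / L[j][j]
    return L


def solve_lower(L, b):
    """Solve L x = b for lower-triangular L."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def solve_upper(L, y):
    """Solve L^T x = y for lower-triangular L."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_gp(X, y, noise=1e-4):
    """Condition the GP on observations; return the pieces prediction needs."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = solve_upper(L, solve_lower(L, y))  # alpha = K^{-1} y
    return L, alpha


def predict(X, L, alpha, x):
    """Posterior mean and standard deviation at a single point x."""
    k = [rbf(x, a) for a in X]
    mean = sum(ki * ai for ki, ai in zip(k, alpha))
    v = solve_lower(L, k)
    var = max(rbf(x, x) - sum(t * t for t in v), 1e-12)
    return mean, math.sqrt(var)


def bayes_opt(f, n_iter=10, beta=2.0):
    """Maximise f on [0, 1]: refit the GP, then evaluate the UCB maximiser."""
    grid = [i / 100 for i in range(101)]
    X = [0.0, 1.0]
    y = [f(x) for x in X]
    for _ in range(n_iter):
        L, alpha = fit_gp(X, y)

        def ucb(x):
            mean, std = predict(X, L, alpha, x)
            return mean + beta * std

        x_next = max((x for x in grid if x not in X), key=ucb)
        X.append(x_next)
        y.append(f(x_next))
    best = max(range(len(X)), key=lambda i: y[i])
    return X[best], y[best]
```

For example, `bayes_opt(lambda x: -(x - 0.3) ** 2)` returns the best point evaluated after ten refits. The `beta * std` term pushes early evaluations towards regions the model is unsure about. Each refit uses a Cholesky factorisation whose cost grows cubically with the number of observations.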

The Automatic Statistician: a project update

Three years ago, James Lloyd and I presented the Automatic Statistician at the joint MSR Cambridge machine learning meeting. The aim of this project is to automate the exploratory analysis and interpretation of data. Although…

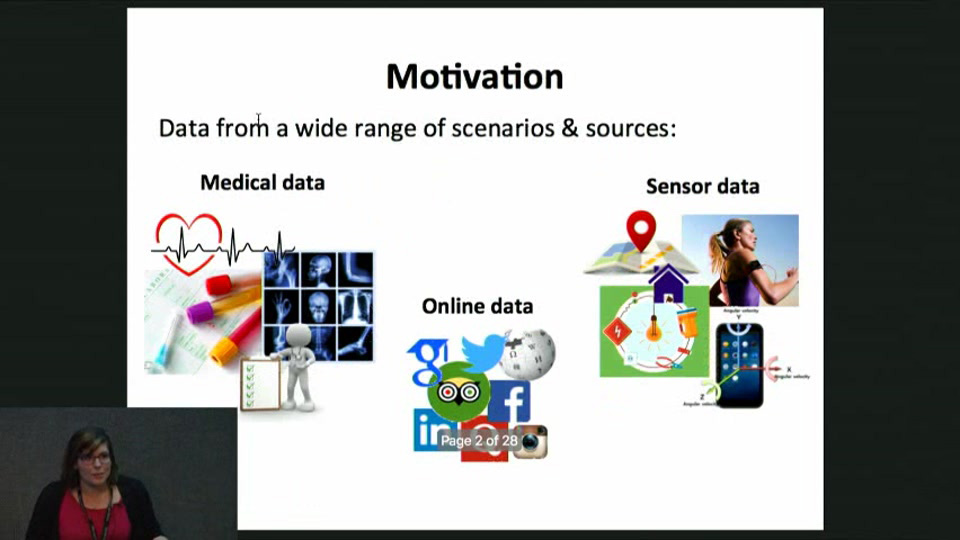

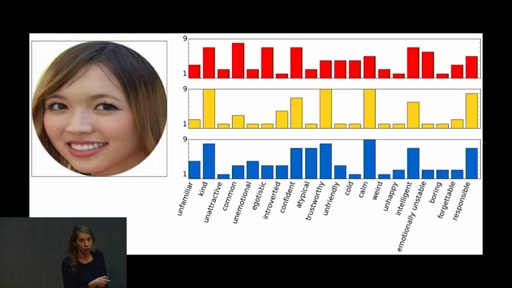

Automatic Discovery of the Statistical Types of Variables in a Dataset

A common practice in statistics and machine learning is to assume that the statistical data types (e.g., ordinal, categorical or real-valued) of variables, and usually also the likelihood model, are known. However, as the availability…

Approximate Inference with Amortised MCMC

We propose a novel approximate inference algorithm that approximates a target distribution by amortising the dynamics of a user-selected MCMC sampler. The idea is to initialise MCMC using samples from an approximation network, apply the…
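The loop outlined in this abstract can be illustrated on a one-dimensional toy problem: sample from the approximation, improve the samples with MCMC, then update the approximation towards them. Everything here is an assumption for illustration:

- the "approximation network" is replaced by a Gaussian with two parameters;
- the user-selected sampler is random-walk Metropolis-Hastings;
- the update is plain moment matching, because the abstract is truncated before it specifies the update rule.

```python
import math
import random
import statistics


def log_target(x):
    """Unnormalised log density of a hypothetical toy target, N(3, 0.5^2)."""
    return -0.5 * ((x - 3.0) / 0.5) ** 2


def mh_step(x, rng, step=0.5):
    """One random-walk Metropolis-Hastings transition; it leaves the target invariant."""
    proposal = x + rng.gauss(0.0, step)
    accept_prob = math.exp(min(0.0, log_target(proposal) - log_target(x)))
    return proposal if rng.random() < accept_prob else x


def amortised_mcmc(n_rounds=50, n_samples=200, n_mcmc=5, seed=0):
    rng = random.Random(seed)
    # Parameters of the approximation q (a stand-in for the approximation network).
    mu, sigma = 0.0, 1.0
    for _ in range(n_rounds):
        # 1. Initialise MCMC with samples drawn from the current approximation.
        xs = [rng.gauss(mu, sigma) for _ in range(n_samples)]
        # 2. Apply a few MCMC transitions to move the samples towards the target.
        for _ in range(n_mcmc):
            xs = [mh_step(x, rng) for x in xs]
        # 3. Update q towards the improved samples (moment matching: an assumption).
        mu = statistics.fmean(xs)
        sigma = max(statistics.stdev(xs), 1e-3)
    return mu, sigma
```

Starting far from the target, `amortised_mcmc()` should end with `mu` and `sigma` close to the target's mean 3 and standard deviation 0.5. Samples drawn from the target are left in place by the MCMC steps, so the target is a fixed point of this loop.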

Learning and Policy Search in Stochastic Dynamical Systems with Bayesian Neural Networks

We present an algorithm for policy search in stochastic dynamical systems using model-based reinforcement learning. The system dynamics are described with Bayesian neural networks (BNNs) that include stochastic input variables. These input variables allow us…
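The generative process of a dynamics model with a stochastic input variable can be sketched for a one-dimensional state and action. This is a minimal illustration under stated assumptions, not the authors' algorithm. The following are all made up:

- the network size;
- the factorised Gaussian over weights, with fixed hypothetical means and a shared standard deviation;
- the variance `gamma` of the input `z`;
- the additive noise level;
- the linear policy in the usage example.

No training or policy optimisation is shown.

```python
import math
import random

HIDDEN = 8
N_IN = 3  # inputs: state, action and the stochastic input z

# Hypothetical factorised Gaussian posterior q(W): a mean per weight plus a shared std.
_init = random.Random(42)
W_MEAN = [[_init.gauss(0.0, 0.5) for _ in range(N_IN + 1)] for _ in range(HIDDEN)]
V_MEAN = [_init.gauss(0.0, 0.5) for _ in range(HIDDEN + 1)]
WEIGHT_STD = 0.05


def sample_weights(rng):
    """Draw one network from q(W); spread across draws reflects model uncertainty."""
    W = [[rng.gauss(m, WEIGHT_STD) for m in row] for row in W_MEAN]
    V = [rng.gauss(m, WEIGHT_STD) for m in V_MEAN]
    return W, V


def net_output(W, V, state, action, z):
    """One tanh hidden layer; the trailing 1.0 entries multiply bias weights."""
    inputs = [state, action, z, 1.0]
    hidden = [math.tanh(sum(w * x for w, x in zip(row, inputs))) for row in W]
    return sum(v * h for v, h in zip(V, hidden + [1.0]))


def sample_next_state(W, V, state, action, rng, gamma=0.5, noise_std=0.01):
    """s' = f(s, a, z; W) + eps, with z ~ N(0, gamma) fed in as an extra input.
    Because z passes through the nonlinearity, s' need not be Gaussian."""
    z = rng.gauss(0.0, math.sqrt(gamma))
    return net_output(W, V, state, action, z) + rng.gauss(0.0, noise_std)


def rollout(policy, s0, horizon, rng):
    """Simulate one trajectory; in this sketch W is drawn once per rollout, z every step."""
    W, V = sample_weights(rng)
    states = [s0]
    for _ in range(horizon):
        s = states[-1]
        states.append(sample_next_state(W, V, s, policy(s), rng))
    return states
```

For example, `rollout(lambda s: -0.5 * s, 0.0, 5, random.Random(0))` returns six states. Repeating it with different seeds gives a spread of trajectories. That spread combines weight uncertainty with the randomness injected through `z` and the additive noise.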

DISCO Nets: DIssimilarity COefficient Networks

DISCO Nets are a new type of probabilistic model for estimating the conditional distribution over a complex structured output given an input. DISCO Nets allow efficient sampling from a posterior distribution parametrised by a…
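The dissimilarity coefficient in the model's name can be illustrated with a minimal sketch of an empirical training loss for a single input. The form used here is an assumption, not something stated in the truncated abstract:

- the network produces K samples for the input;
- Δ is the Euclidean distance;
- `gamma` weights a diversity term.

`disco_loss` is a hypothetical helper.

```python
import math


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def disco_loss(y_true, samples, gamma=0.5, delta=euclidean):
    """Empirical dissimilarity-coefficient style loss for one training example.

    First term: mean dissimilarity between the ground truth and each sample,
    which pulls samples towards y_true. Second term: mean pairwise dissimilarity
    among samples, weighted by gamma / 2, which rewards diverse samples.
    """
    k = len(samples)
    if k < 2:
        raise ValueError("need at least two samples for the diversity term")
    fit = sum(delta(y_true, s) for s in samples) / k
    spread = sum(
        delta(samples[i], samples[j]) for i in range(k) for j in range(k) if i != j
    ) / (k * (k - 1))
    return fit - gamma / 2 * spread


print(disco_loss((0.0, 0.0), [(1.0, 0.0), (-1.0, 0.0)], gamma=1.0))  # prints 0.0
```

With `gamma=0` only the fit term remains, and the loss is minimised by collapsing every sample onto `y_true`. A positive `gamma` instead rewards samples that spread out to cover the conditional distribution.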

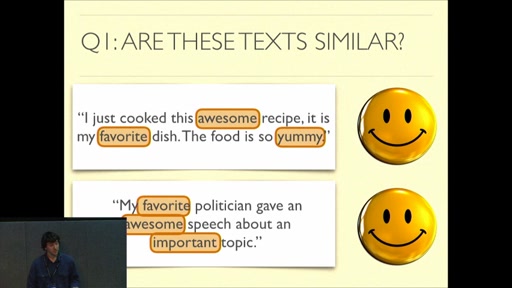

The Supervised Word Mover’s Distance

Recently, a new document metric called the word mover’s distance (WMD) has been proposed, achieving unprecedented results in kNN-based document classification. The WMD elevates high-quality word embeddings to a document metric by formulating the distance…
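The underlying distance can be made concrete with a small sketch for a special case. Suppose both documents contain the same number n of distinct words, each with weight 1/n. Then the transport problem behind the WMD has an optimal solution that is a one-to-one matching, so enumerating permutations gives the exact distance. The two-dimensional embeddings below are made up for illustration. The learned components that distinguish the supervised variant are not shown.

```python
import itertools
import math


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def wmd_uniform(doc_a, doc_b, emb):
    """Exact WMD when each document has n distinct words, each with weight 1/n.

    With uniform, equal-size weights, an optimal transport plan can be taken to be
    a permutation matrix scaled by 1/n, so brute force over matchings is exact (O(n!)).
    """
    n = len(doc_a)
    if n != len(doc_b) or len(set(doc_a)) != n or len(set(doc_b)) != n:
        raise ValueError("sketch assumes two documents of n distinct words each")
    best = math.inf
    for perm in itertools.permutations(range(n)):
        cost = sum(euclidean(emb[doc_a[i]], emb[doc_b[j]]) for i, j in enumerate(perm))
        best = min(best, cost / n)
    return best


# Made-up 2-D embeddings: related words sit close together.
EMB = {
    "obama": (1.0, 0.0),
    "president": (1.0, 0.2),
    "speaks": (0.0, 1.0),
    "greets": (0.2, 1.0),
}
print(wmd_uniform(["obama", "speaks"], ["president", "greets"], EMB))
```

The best matching pairs "obama" with "president" and "speaks" with "greets", giving a distance of about 0.2 even though the two documents share no words.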

Artificial Intelligence and Machine Learning in Cambridge 2017

This academic workshop aims to better connect this community and to give its members a chance to learn from each other about interesting research and applications across the broad machine learning spectrum.

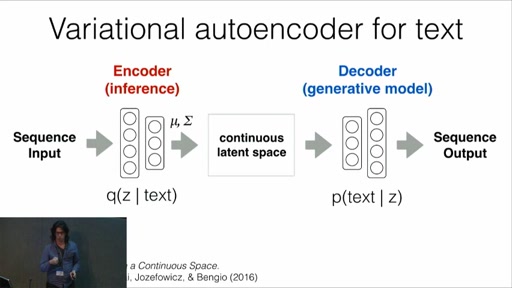

Grammar Variational Autoencoder

Deep generative models have been wildly successful at learning coherent latent representations for continuous data such as video and audio. However, generative modeling of discrete data such as arithmetic expressions and molecular structures still poses…