The past few years have seen tremendous progress in reinforcement learning (RL). From complex games to robotic object manipulation, RL has qualitatively advanced the state of the art. However, modern RL techniques require a lot for success: a largely deterministic stationary environment, an accurate resettable simulator in which mistakes – and especially their consequences – are limited to the virtual sphere, powerful computers, and a lot of energy to run them. At Microsoft Research, we are working towards automatic decision-making approaches that bring us closer to the vision of AI agents capable of learning and acting autonomously in changeable open-world conditions using limited onboard compute. Project Frigatebird is our ambitious quest in this space, aimed at building intelligence that can enable small fixed-wing uninhabited aerial vehicles (sUAVs) to stay aloft purely by extracting energy from moving air.

Let’s talk hardware

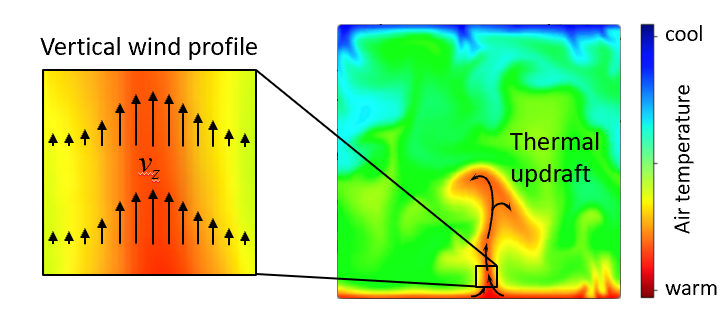

Snipe 2, our latest sUAV, pictured above, exemplifies Project Frigatebird’s hardware platforms. It is a small version of a special type of human-piloted aircraft known as sailplanes, also called gliders. Like many sailplanes, Snipe 2 doesn’t have a motor; even sailplanes that have one carry just enough power to run it for a minute or two. Snipe 2 is hand-tossed into the air to an altitude of approximately 60 meters and then slowly descends to the ground, unless it finds a rising air current called a thermal (see Figure 2) and exploits it to soar higher. For human pilots in full-scale sailplanes, travelling hundreds of miles powered solely by these naturally occurring sources of lift is a popular sport. For certain birds like albatrosses or frigatebirds, covering great distances in this way with nary a wing flap is a natural-born skill, one that we would very much like to bestow on Snipe 2’s AI.

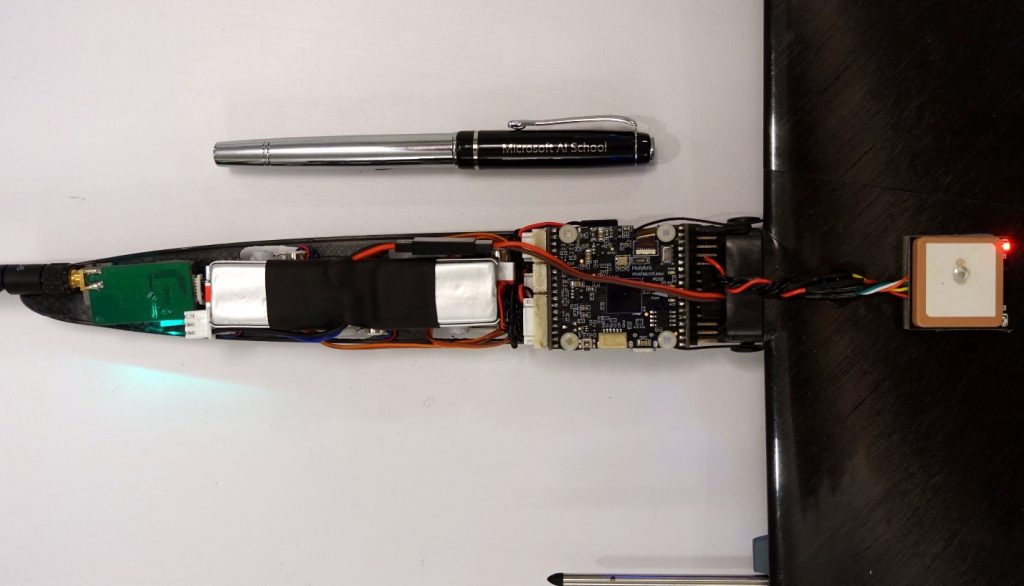

Snipe 2’s 1.5 meter-wingspan airframe weighs a mere 163 grams, its slender fuselage only 35 mm wide at its widest spot. Yet it carries an off-the-shelf Pixhawk 4 Mini flight controller and all requisite peripherals for fully autonomous flight (see Figure 1). This “brain” has more than enough punch to run our Bayesian reinforcement learning-based soaring algorithm, POMDSoar. It can also receive a strategic, more computationally heavy navigation policy over the radio from a laptop on the ground, further enhancing the sUAV’s ability to find columns of rising air. Alternatively, Snipe 2 can house more powerful but still sufficiently compact hardware, such as a Raspberry Pi Zero, to compute this policy onboard. Our larger sailplane drones like the 5-meter wingspan Thermik XXXL can carry even more sophisticated equipment, including cameras and a computational platform for processing their data in real time for hours on end. Indeed, nowadays the only barrier preventing winged drones from staying aloft for this long on atmospheric energy alone in favorable weather is the lack of sufficient AI capabilities.

Reaching higher

Why is building this intelligence hard? Exactly because of the factors that limit modern RL’s applicability. Autopilots of conventional aircraft are built on fairly simple control-based approaches. This strategy works because an aircraft’s motors, in combination with its wings, deliver a stable source of lift, allowing it to “overpower” most of the variable factors affecting its flight, such as wind. Sailplanes, on the other hand, are “underactuated” and must make use of – not overpower – highly uncertain and non-stationary atmospheric phenomena to stay aloft.

Thermals, the columns of upward-moving air in which hawks and other birds are often seen gracefully circling, are an example of these stochastic phenomena. A thermal can disappear minutes after appearing, and the amount of lift it provides varies across its lifecycle, with altitude, and with distance from the thermal center. Finding thermals is a difficult problem in itself. They cannot be seen directly; a sailplane can infer their size and location only approximately. Human pilots rely on local knowledge, ground features, the behavior of birds and other sailplanes, and other cues, in addition to instrument readings, to guess where thermals are. Interpreting some of these cues involves simple-sounding but nontrivial computer vision problems, for example, estimating distance to objects seen against the background of featureless sky.

Decision-making based on these observations is even more complicated. It requires integrating diverse sensor data on hardware far less capable than a human brain, and accounting for large amounts of uncertainty over long planning horizons. Accurately inferring the consequences of various decisions using simulations, a common approach in modern RL, is thwarted under these conditions by the lack of onboard compute and of the energy to run it.

Figure 3: (Left) A schematic depiction of air movement within thermals and a sailplane’s trajectory. (Right) A visualization of an actual thermal soaring trajectory from one of our sUAVs’ flights.
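
To make the dependence of lift on distance from the thermal center concrete, here is a minimal sketch. It assumes a Gaussian-shaped lift profile, a common simplification that we adopt here purely for illustration; the function names, thermal strength, radius, and sink rate are all hypothetical values, not measurements from our flights.

```python
import math


def thermal_lift(x, y, center_x, center_y, strength, radius):
    """Upward air speed (m/s) at point (x, y).

    Assumption: lift decays as a Gaussian of the distance to the thermal
    center. Real thermals are messier and change over time.
    """
    dist_sq = (x - center_x) ** 2 + (y - center_y) ** 2
    return strength * math.exp(-dist_sq / radius ** 2)


def net_climb_rate(x, y, thermal, sink_rate):
    """Climb rate of the sailplane: air's upward speed minus its own sink rate."""
    return thermal_lift(x, y, **thermal) - sink_rate


# Hypothetical thermal: 3 m/s at the core, 40 m characteristic radius.
thermal = {"center_x": 0.0, "center_y": 0.0, "strength": 3.0, "radius": 40.0}

# Hypothetical sailplane sink rate of 0.6 m/s in still air.
for dist in (0, 20, 40, 80):
    rate = net_climb_rate(dist, 0.0, thermal, sink_rate=0.6)
    print(f"{dist:3d} m from center: {rate:+.2f} m/s")
```

Under these assumed numbers the sailplane climbs near the core and sinks farther out, which is why an accurate estimate of the thermal's center and size matters so much for staying aloft.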

Our first steps have focused on using thermals to gain altitude:

- Our RSS-2018 paper was the first autonomous soaring work to deploy an RL algorithm for exploiting thermals aboard an actual sailplane sUAV, as opposed to simulation. In a series of field tests, it also showed RL’s advantage at this task over a strong baseline algorithm based on control and replanning, an instance of the class of autonomous thermaling approaches predominant in prior work. Our Bayesian RL algorithm POMDSoar deliberately plans both how to learn about the environment and how to exploit the acquired knowledge. This property gives it an edge over more traditional soaring controllers that update their thermal model and adjust their thermaling strategy as they gather more data about the environment, but don’t take intentional steps to optimize the information gathering.

- Our IROS-2018 paper studied ArduSoar, a control-based thermaling strategy. We have found that it performs very well for an approach that plans based on the current most likely thermal model. As a simple, robust soaring controller, ArduSoar has been integrated into ArduPlane, a major open-source autopilot for fixed-wing drones.
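
The difference between planning on the most likely thermal model and planning over a full belief can be illustrated with a toy Bayesian update. The sketch below is not the implementation of either POMDSoar or ArduSoar: the grid of candidate thermal centers, the Gaussian lift profile, the noise level, and the flight path are all hypothetical assumptions chosen to keep the example small.

```python
import math


def lift(x, y, cx, cy, strength=3.0, radius=40.0):
    """Assumed Gaussian lift profile (m/s) for a thermal centered at (cx, cy)."""
    dist_sq = (x - cx) ** 2 + (y - cy) ** 2
    return strength * math.exp(-dist_sq / radius ** 2)


def update_belief(belief, obs_x, obs_y, measured_lift, noise_std=0.5):
    """One Bayes step: reweight every candidate center by how well it
    explains the lift measured at (obs_x, obs_y), then renormalize."""
    posterior = {}
    for (cx, cy), prior in belief.items():
        err = measured_lift - lift(obs_x, obs_y, cx, cy)
        posterior[(cx, cy)] = prior * math.exp(-0.5 * (err / noise_std) ** 2)
    total = sum(posterior.values())
    return {center: weight / total for center, weight in posterior.items()}


# Uniform prior over a hypothetical 20 m grid of candidate thermal centers.
candidates = [(cx, cy) for cx in range(-100, 101, 20) for cy in range(-100, 101, 20)]
belief = {center: 1.0 / len(candidates) for center in candidates}

# Hypothetical ground truth and flight path; readings are noise-free for clarity.
true_center = (40, -20)
path = [(0, 0), (20, 0), (40, 0), (40, -40), (20, -20), (60, -20)]
for x, y in path:
    belief = update_belief(belief, x, y, lift(x, y, *true_center))

# A controller that plans on the most likely model would use only this point.
most_likely = max(belief, key=belief.get)
print("most likely center:", most_likely)
```

A controller of the first kind would steer using `most_likely` alone. A Bayesian RL planner would instead keep the whole `belief` and also ask which next waypoint is expected to sharpen it the most, trading off information gathering against climbing right away.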

Figure 4: An animated 3D visualization of a real simultaneous flight of two motorized Radian Pro sailplanes, one running ArduSoar and another running POMDSoar. Use the mouse to change the viewing angle, zoom, and replay speed. At the end, one of the Radians can be seen engaging in low-altitude orographic soaring near a tree line, getting blown by a wind gust into a tree, and becoming stuck there roughly 35 meters above the ground – a reality of drone testing in the field. After some time, the Radian was retrieved from a nearby swamp and repaired. It flies to this day.

We released both POMDSoar and ArduSoar on GitHub as part of the Frigatebird autopilot, which is based on a fork of ArduPlane.

On a wing and a simulator

Although Project Frigatebird’s goal is to take RL beyond simulated settings, simulations play a central role in the project. While working on POMDSoar and ArduSoar, we saved a lot of time by evaluating our ideas on a simulator in the lab before doing field tests. Besides saving time, simulators allow us to do crucial experiments that would be very difficult to do logistically in the field. This applies primarily to long-distance navigation, where simulation lets us learn and assess strategies over multi-kilometer distances over various types of terrain, conditions we don’t have easy access to in reality.

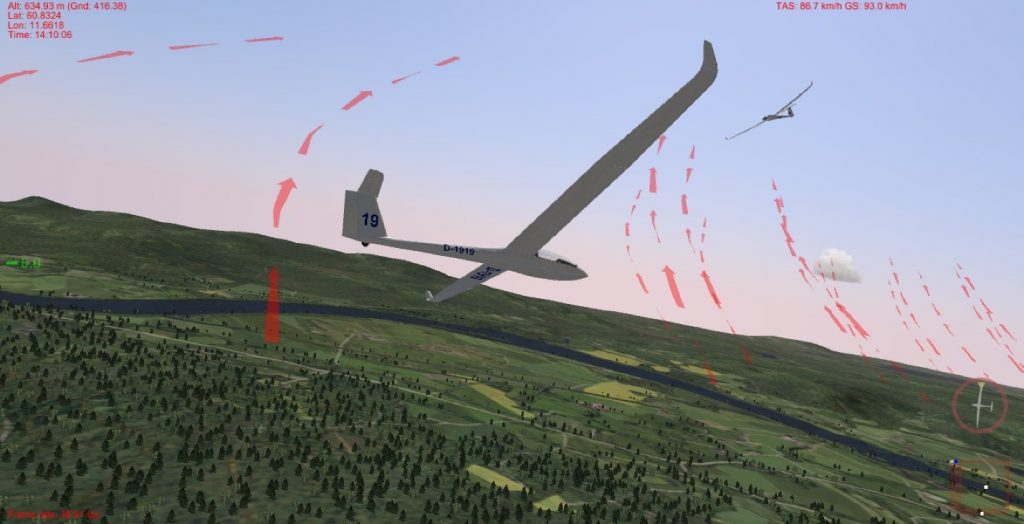

Figure 5: Software-in-the-loop simulation in Silent Wings. A Frigatebird-controlled LS-8b sailplane is trying to catch a thermal where another sailplane is already soaring on a windy day near Starmoen, Norway. For debugging convenience, Silent Wings indicates the centers of thermals and ridge lift, which are invisible in reality, with red arrows (this visualization can be disabled).

To facilitate such experimentation for other researchers, we released a software-in-the-loop (SITL) integration between Frigatebird and a soaring flight simulator, Silent Wings. Silent Wings is renowned for the fidelity of its soaring flight experience. Importantly for experiments like ours, it provides the most accurate modelling of the distribution of thermals and ridge lift across the natural landscape as a function of terrain features, time, and environmental conditions that we’ve encountered in any simulator. This gives us confidence that Silent Wings’ evaluation of long-range navigation strategies, which critically rely on these distributions, will yield qualitatively similar results to what we will see during field experiments.

Flight plan

Sensors let sailplane sUAVs reliably recognize when they are flying through a thermal, and techniques like POMDSoar let them soar higher, even in the weak turbulent thermals found at lower altitudes. However, without the ability to predict from a distance where thermals are, the sailplane drones can’t devise a robust navigation strategy from point A to point B. To address this problem, in partnership with scientists from ETH Zurich’s Autonomous Systems Lab, we are researching remote thermal prediction and its integration with motion planning.

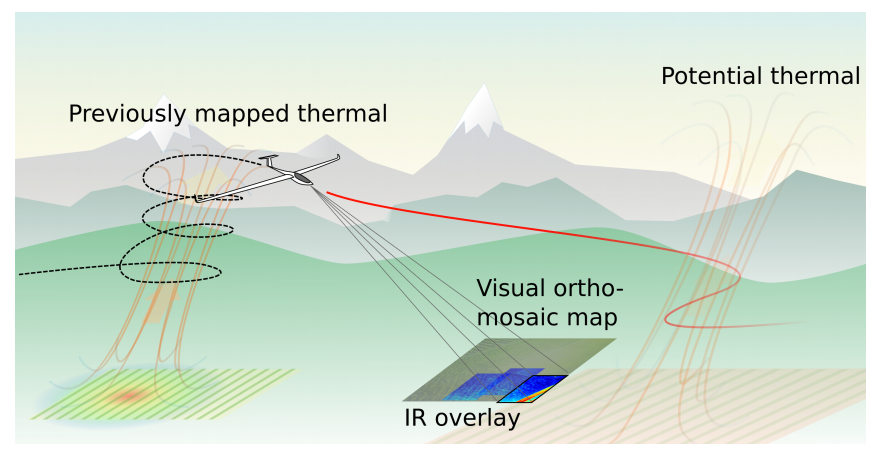

Thermals appear when warmer parts of the ground heat up the air above them and force it to rise. Our joint efforts with ETH Zurich’s team focus on detecting the temperature differences that cause this process, as well as other useful features, from a distance using infrared and optical cameras mounted on the sailplane, and on forecasting thermal locations from them (see Figure 6). However, infrared cameras cannot “see” such minute temperature variations in the air, and not every warm patch on the ground gives rise to a thermal, making this a hard but exciting problem. Integrating the resulting predictions with reinforcement learning for motion planning raises research challenges of its own due to the uncertainty in the predictions and difficulties in field evaluation of this approach.

Figure 6: A schematic of a sailplane predicting thermal locations in front of itself by mapping the terrain with infrared and optical cameras. Image provided by ETH Zurich’s Autonomous Systems Lab.

Crew

Building intelligence for a robotic platform that critically relies on, not merely copes with, highly variable atmospheric phenomena outdoors so that it can soar as well as the best soarers – birds! – takes expertise far beyond AI itself. To achieve our dream, we have been collaborating with experts from all over the world. Iain Guilliard, a Ph.D. student from the Australian National University and a former intern at Microsoft Research, has been the driving force behind POMDSoar. Samuel Tabor, a UK-based autonomous soaring enthusiast, has developed the alternative control-based ArduSoar approach and helped build the software-in-the-loop integration for Silent Wings. The Frigatebird autopilot, which includes POMDSoar and ArduSoar, is based on the ArduPlane open-source project and on feedback from the international community of its developers. We are researching infrared/optical vision-aided thermal prediction with our partners Nicholas Lawrance, Jen Jen Chung, Timo Hinzmann, and Florian Achermann at ETH Zurich’s Autonomous Systems Lab led by Roland Siegwart. The know-how of all these people augments our project team’s in-house expertise in automatic sequential decision-making, robotics/vision (Debadeepta Dey), and soaring (Rick Rogahn).