From the intense shock of the COVID-19 pandemic to the effects of climate change, our global society has never faced greater risk. The Societal Resilience team at Microsoft Research was established in recognition of this risk and tasked with developing open technologies that enable a scalable response in times of crisis. And just as we think about scalability in a holistic way—scaling across different forms of common problems, for different partners, in different domains—we also take a multi-horizon view of what it means to respond to crisis.

When an acute crisis strikes, it creates an urgency to help real people, right now. However, not all crises are acute, and not all forms of response deliver direct assistance. While we need to attend to foreground crises like floods, fire, and famine, we also need to pay attention to the background crises that precipitate them. For many, the background crisis is already the foreground of their lives. To give an example, with climate change, the potential long-term casualty is the human race. But climate migration is happening all over the world already, and it disproportionately affects some of the poorest and most vulnerable countries.

Crises can also feed into and amplify one another. For example, the United Nations’ International Organization for Migration (IOM) reports that migration in general, and crisis events in particular, are key drivers of human trafficking and exploitation. Migration push factors can become exacerbated during times of crisis, and people may face extreme vulnerability when forced to migrate amid a lack of safe and regular migration pathways. Human exploitation and trafficking are a breach of the most fundamental human rights and show what can happen when societies fail to prevent the emergence of systemic vulnerability within their populations. By tackling existing sources of vulnerability and exploitation now, we can learn how to deliver more effective responses to the interconnected crises of the future.

To build resilience in these areas, researchers at Microsoft and their collaborators have been working on a number of tools that help domain experts translate real-world data into evidence. All three tools and case studies presented in this post share a common idea: that a hidden structure exists within the many combinations of attributes that constitute real-world data, and that both domain knowledge and data tools are needed to make sense of this structure and inform real-world response. To learn more about these efforts, read the accompanying AI for Business and Technology blog post. Note that several of the technologies in this post will be presented in greater detail at the Microsoft Research Summit on October 19–21, 2021.

Supporting evidence-based policy

For crisis response at the level above individual assistance, we need to think in terms of policy—how should we allocate people, money, and other resources towards tackling both the causes and consequences of the crisis?

In such situations, we need evidence that can inform new policies and evaluate existing ones, whether the public policy of governments or the private policy of organizations. Returning to the link between crises and trafficking, if policy makers do not have access to supporting evidence because it doesn’t exist or cannot be shared, or if they are not persuaded by the weight of evidence in support of the causal relationship, they will not enact policies that ensure appropriate intervention and direct assistance when the time comes.

Policy is the greatest lever we have to save lives and livelihoods at scale. Building technology for evidence-based policy is how we maximize our leverage as we work to make societies more resilient.

Developing real-world evidence

Real-world problems affecting societal resilience leave a trail of “real-world data” (RWD) in their wake. This concept originated in the medical field to differentiate observational data collected for some other purpose (for example, electronic health records and healthcare claims) from experimental data collected through, and for the specific purpose of, a randomized controlled event (like a clinical trial).

The corresponding notion of “real-world evidence” (RWE) similarly emerged in the medical field, defined in 21 U.S. Code § 355g of the Federal Food, Drug, and Cosmetic Act as “data regarding the usage, or the potential benefits or risks, of a drug derived from sources other than traditional clinical trials.” While our RWE research is partly inspired by the methods used to derive RWE from RWD in a medical context, we also take a broader view of what counts as evidence for decision making and policy making across unrelated fields.

For problems like human trafficking, for example, it would be unethical to run a randomized controlled trial in which trafficking is allowed to happen. In this case, observational data describing victims of trafficking, collected at the point of assistance, is the next best source of data. Indeed, this kind of positive feedback loop, with direct assistance activities informing evidence-based policy and evidence-based policy informing the allocation of assistance resources, is one of the main ways in which targeted technology development could make a significant difference to real-world outcomes.

Empowering domain experts

In practice, however, facilitating positive feedback between assistance and policy activities means dealing with multiple challenges that hinder the progression from data to evidence, to policy, to impact. The people and organizations collecting data on the front line are rarely those responsible for making or evaluating the impact of policy, just as those with the technical expertise to develop evidence are rarely those with the domain expertise needed to interpret and act on that evidence.

To bridge these gaps, we work with domain experts to design tools that democratize the practice of evidence development—reducing reliance on data scientists and other data specialists whose skills are in short supply, especially during a crisis.

Real-world evidence in action

Over the following sections, we describe tools for developing different kinds of real-world evidence in response to the distinctive characteristics—and challenges—of accessing, analyzing, and acting on real-world data. In each case, we use examples drawn from our efforts to counter human trafficking and modern slavery.

Developing evidence of correlation from private data

Research challenge

When people can’t see the data describing a phenomenon, they can’t make effective policy decisions at any level. However, many real-world datasets relate to individuals and cannot be shared with other organizations because of privacy concerns and data protection regulations.

This challenge arose when Microsoft participated in Tech Against Trafficking (TAT), a coalition of technology companies (currently Amazon, BT, Microsoft, and Salesforce) working to combat trafficking with technology. In the 2019 TAT Accelerator Program, TAT member companies worked together to support the Counter Trafficking Data Collaborative (CTDC), an initiative run by the International Organization for Migration (IOM) that pools data from organizations including IOM, Polaris, Liberty Shared, OTSH, and A21 to create the world’s largest database of individual survivors of trafficking.

The CTDC data hub makes derivatives of this data openly available as a way of informing evidence-based policy against human trafficking, through data maps, dashboards, and stories that are accessible to policy makers. Publishing these derivatives raises risks to privacy. For example, if traffickers believe they have identified a victim within published data artifacts, they may assume that this implies collaboration with the authorities in ways that may prompt retaliation. To get around this, CTDC data is de-identified and anonymized using standard approaches. But this process is cumbersome, forces a sacrifice of the data’s analytic utility, and may not remove all residual risks to privacy and safety.

Research question

How can we enable policy makers in one organization to view and explore the private data collected and controlled by another in a way that preserves the privacy of groups of data subjects, preserves the utility of datasets, and is accessible to all data stakeholders?

Enabling technology

We developed the concept of a Synthetic Data Showcase as a new mechanism for privacy-preserving data release, now available on GitHub and as an interactive AI Lab. Synthetic data is generated in a way that reproduces the structure and statistics of a sensitive dataset, but with the guarantee that every combination of attributes in the synthetic records appears at least k times in the records of the sensitive dataset and therefore cannot be used to isolate any actual groups of individuals smaller than k. In other words, we use synthetic data to generalize k-anonymity to all attributes of a dataset, not just a subset of attributes determined in advance to be identifying in combination.
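As a minimal sketch of the guarantee described above, the following Python checks whether every attribute combination found in a set of synthetic records occurs at least k times in the sensitive records. This is not the Synthetic Data Showcase implementation; the function names, attribute names, and toy records are all hypothetical and chosen only for illustration.

```python
from collections import Counter
from itertools import combinations


def combination_counts(records):
    """Count how many records contain each combination of (attribute, value) pairs."""
    counts = Counter()
    for record in records:
        items = sorted(record.items())  # fixed order so equal combinations compare equal
        for size in range(1, len(items) + 1):
            for combo in combinations(items, size):
                counts[combo] += 1
    return counts


def rare_combinations(synthetic, sensitive, k):
    """Combinations present in synthetic records but seen fewer than k times in sensitive ones."""
    sensitive_counts = combination_counts(sensitive)
    return [c for c in combination_counts(synthetic) if sensitive_counts[c] < k]


# Hypothetical toy records (not real data).
sensitive = [
    {"gender": "F", "age": "18-20", "sector": "domestic"},
    {"gender": "F", "age": "18-20", "sector": "domestic"},
    {"gender": "F", "age": "18-20", "sector": "agriculture"},
    {"gender": "M", "age": "21-23", "sector": "agriculture"},
    {"gender": "M", "age": "21-23", "sector": "agriculture"},
]
safe_synthetic = [
    {"gender": "F", "age": "18-20", "sector": "domestic"},
    {"gender": "M", "age": "21-23", "sector": "agriculture"},
]
unsafe_synthetic = [{"gender": "F", "age": "18-20", "sector": "agriculture"}]

safe_violations = rare_combinations(safe_synthetic, sensitive, k=2)
unsafe_violations = rare_combinations(unsafe_synthetic, sensitive, k=2)
```

In this toy example the first synthetic set passes the check, while the second is rejected because it pairs attribute values that only one sensitive record shares. Enumerating every combination is exponential in the number of attributes, so a real system would need a more careful strategy than this brute-force check.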

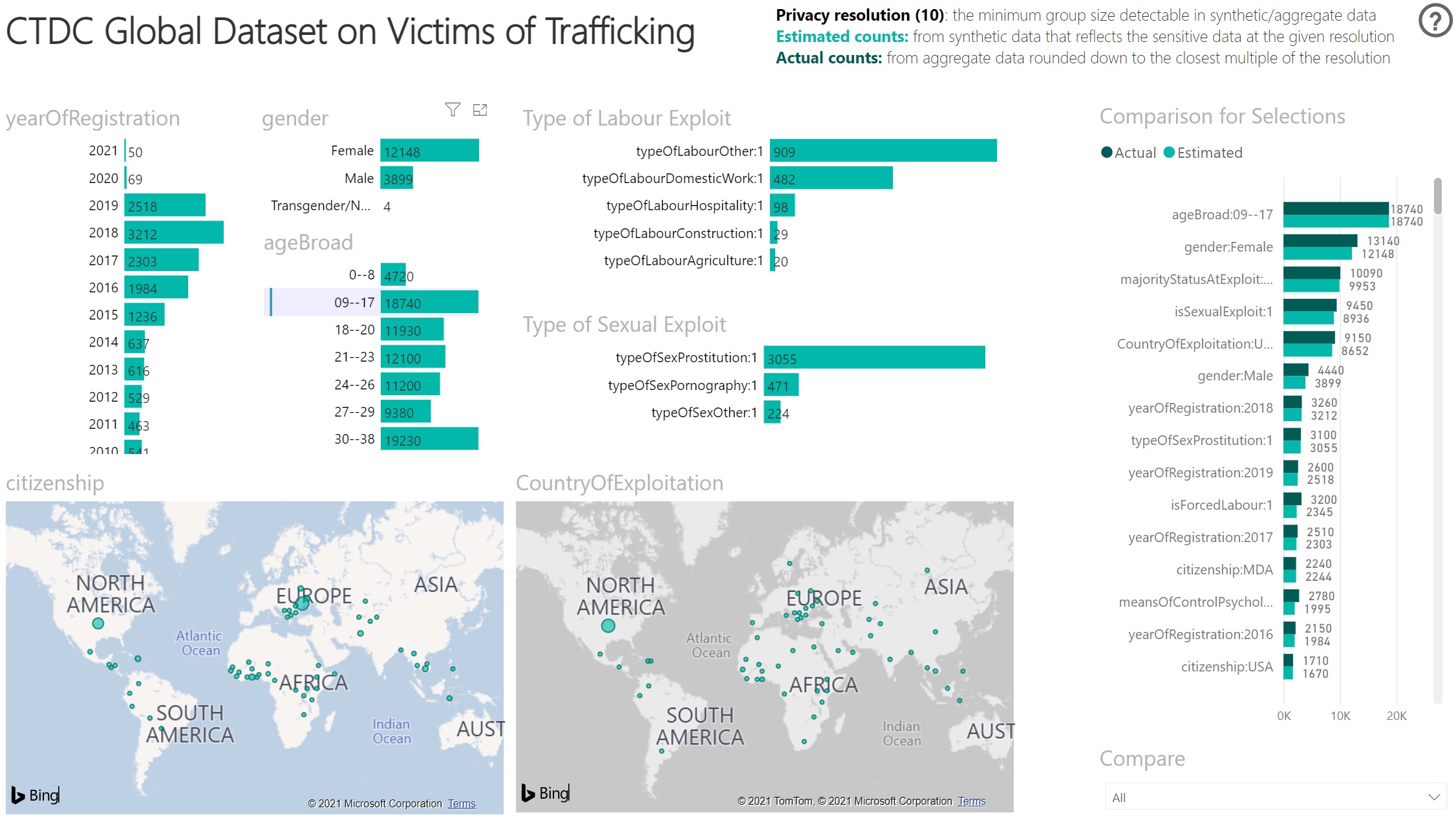

Alongside the synthetic data, we also release aggregate data on all short combinations of attributes, both to validate the utility of the synthetic data and to retrieve actual counts (as a multiple of k) for official reporting. Finally, we combine both anonymous datasets in an automatically generated Power BI report for an interactive, visual, and accessible form of data exploration. The resulting evidence is at the level of correlation, both across data attributes, as reflected by their joint counts, and across datasets, as reflected by the similarity of counts calculated over the sensitive versus synthetic datasets.
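One way to picture the aggregate release is the sketch below, which counts all attribute combinations up to a chosen length and reports each count as a multiple of k. It assumes that counts are rounded down and that combinations seen fewer than k times are dropped; both are assumptions made for illustration rather than details confirmed by this post, and the names and toy records are hypothetical.

```python
from collections import Counter
from itertools import combinations


def reportable_counts(records, k, max_len=2):
    """Counts of short attribute combinations, rounded down to a multiple of k."""
    counts = Counter()
    for record in records:
        items = sorted(record.items())
        for size in range(1, min(max_len, len(items)) + 1):
            for combo in combinations(items, size):
                counts[combo] += 1
    # Assumption: drop combinations below k and round the rest down to a multiple of k.
    return {combo: (n // k) * k for combo, n in counts.items() if n >= k}


# Hypothetical toy records (not real data).
records = [
    {"gender": "F", "sector": "domestic"},
    {"gender": "F", "sector": "domestic"},
    {"gender": "F", "sector": "agriculture"},
    {"gender": "M", "sector": "agriculture"},
    {"gender": "M", "sector": "agriculture"},
]
aggregates = reportable_counts(records, k=2)
```

Comparing such aggregates computed over the sensitive and synthetic datasets is one simple way to gauge how much utility the synthetic data retains.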

Our ability to publish and collaborate on open software enables us to work with IOM on creating a Synthetic Data Showcase for their full, de-identified victim database without ever accessing the highly sensitive data ourselves. Today, IOM has announced the resulting update to the CTDC website, sharing data on more than three times as many victims as before. This includes several new data columns, with group-level privacy guarantees and utility that anyone can interactively verify.

With the new Global Human Trafficking Synthetic Dataset, Synthetic Data Showcase has enabled IOM and CTDC to share data that couldn’t otherwise be shared, helping address problems that couldn’t otherwise be solved. In the following sections, we show how this dataset can be used to develop additional types of evidence to fight trafficking.

IOM aims to share the new technique with counter-trafficking organizations worldwide as part of a wider program to improve the production of data and evidence on human trafficking. This includes establishing new international standards and guidance to support governments in producing high-quality administrative data, in partnership with the UN Office on Drugs and Crime, and a package of data standards and information management tools for frontline counter-trafficking agencies.

Developing evidence of causation from observational data

Research challenge

If people can’t see the causation driving a phenomenon, they can’t effectively make strategic policy decisions about where to invest resources over the long term. However, counts and correlations derived from data cannot by themselves be used to confirm the presence of a causal relationship within a domain, or estimate the size of the causal effect.

The COVID-19 pandemic triggered an unprecedented effort to identify existing drugs that may reduce mortality and other adverse outcomes of infection. Through the Microsoft Research Studies in Pandemic Preparedness program, scientists at Johns Hopkins University and Stanford have developed new guidelines for performing retrospective analysis of pharmacoepidemiological data in a manner that emulates, to the extent possible, a randomized controlled trial. This capability is valuable whenever it isn’t possible, affordable, or ethical to run a trial for a given treatment.

Microsoft Research also has world-leading experts studying the kind of causal inference needed for trial emulation, as well as the DoWhy and EconML libraries with which to perform it. However, such guidelines and libraries remain inaccessible to experts in other domains who lack expertise in data science and causal inference. This includes people working on anti-trafficking who seek to understand the causal effect of factors, like migration and crises, on the extent and type of trafficking in order to formulate a more effective policy response.

Research question

How can we empower domain experts to answer causal questions using observational data collected for some other purpose in a way that emulates a randomized controlled trial, controls for the inherent biases of the data collection process, and doesn’t require prior expertise in data science or causal inference?

Enabling technology

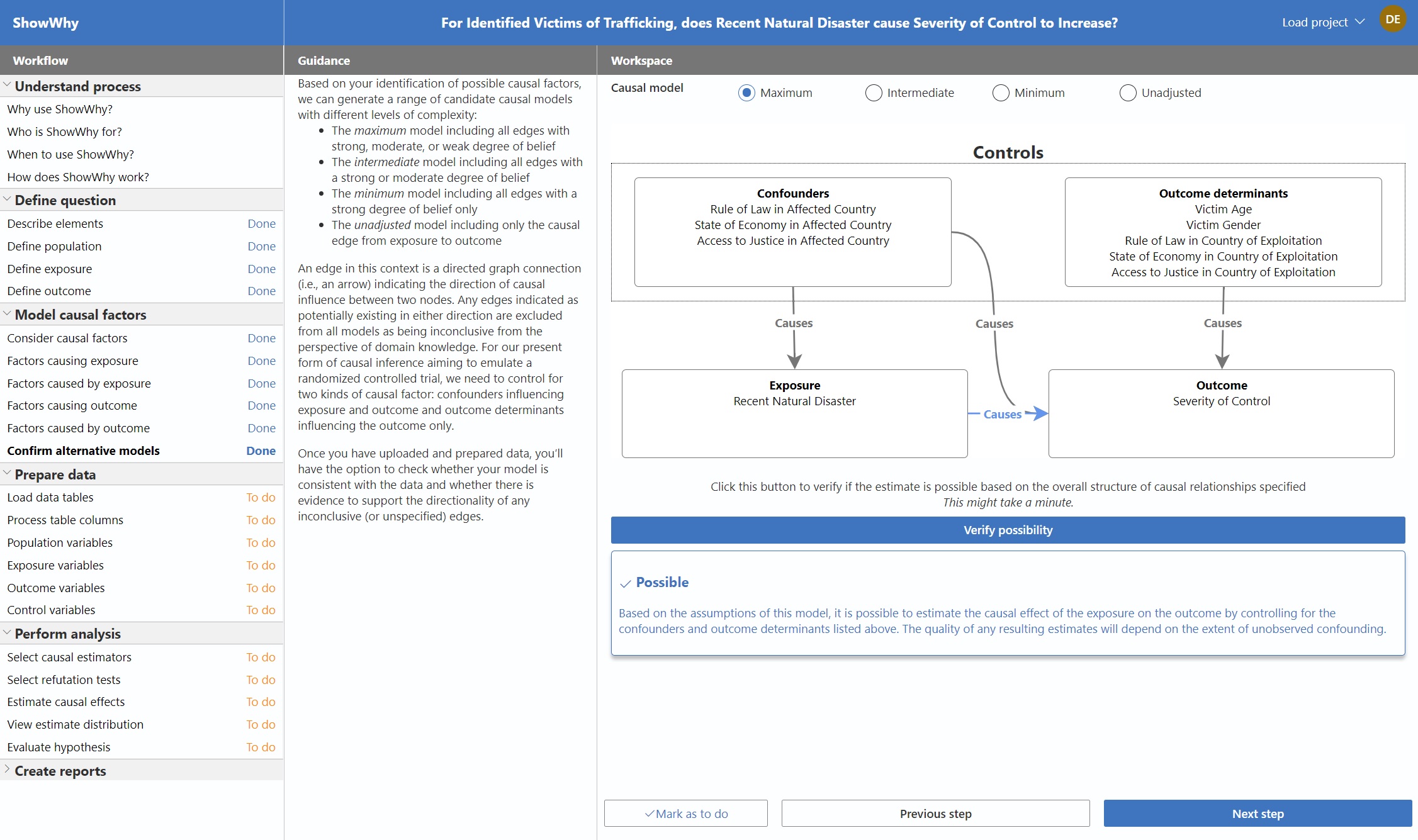

Building on the simplified and structured approach to causal inference promoted by DoWhy, we have developed ShowWhy: an interactive application for guided causal inference over observational data. ShowWhy assumes no prior familiarity with coding or causal inference, yet enables the user to:

- formulate a causal question

- capture relevant domain knowledge

- derive corresponding data variables

- produce and defend estimates of the average causal effect

Behind the scenes, ShowWhy uses a combination of approaches from DoWhy, EconML, and CausalML to perform causal inference in Python, while also eliciting the assumptions, decisions, and justifications needed for others to evaluate the standard of evidence represented by the results. Following an analysis, users can export Jupyter Notebooks and other reports that document the end-to-end process in forms suitable for audiences ranging from data scientists evaluating the analysis to decision makers evaluating the appropriate policy response.
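To give a feel for what estimating an average causal effect from observational data involves, here is a minimal sketch of one classic technique: adjusting for a measured confounder by stratification. It is a plain-Python stand-in, not the method ShowWhy, DoWhy, EconML, or CausalML applies; the column names (`crisis`, `migrated`, `exploited`) are hypothetical and the counts are made up purely to show the arithmetic.

```python
from collections import defaultdict
from statistics import mean


def stratified_effect(rows, exposure, outcome, confounders):
    """Difference in mean outcome (exposed minus unexposed), averaged over confounder strata."""
    strata = defaultdict(lambda: {0: [], 1: []})
    for row in rows:
        key = tuple(row[c] for c in confounders)
        strata[key][row[exposure]].append(row[outcome])
    weighted_sum, total = 0.0, 0
    for groups in strata.values():
        if not groups[0] or not groups[1]:
            continue  # no overlap in this stratum, so it cannot inform the comparison
        size = len(groups[0]) + len(groups[1])
        weighted_sum += size * (mean(groups[1]) - mean(groups[0]))
        total += size
    if total == 0:
        raise ValueError("no stratum contains both exposed and unexposed rows")
    return weighted_sum / total


def make_rows(crisis, migrated, n_with_outcome, n_total):
    """Build made-up rows for one (confounder, exposure) cell."""
    return [
        {"crisis": crisis, "migrated": migrated, "exploited": int(i < n_with_outcome)}
        for i in range(n_total)
    ]


# Made-up toy data in which the confounder drives both exposure and outcome.
rows = (
    make_rows(crisis=0, migrated=1, n_with_outcome=1, n_total=2)
    + make_rows(crisis=0, migrated=0, n_with_outcome=2, n_total=8)
    + make_rows(crisis=1, migrated=1, n_with_outcome=6, n_total=8)
    + make_rows(crisis=1, migrated=0, n_with_outcome=1, n_total=2)
)

naive = stratified_effect(rows, "migrated", "exploited", confounders=[])
adjusted = stratified_effect(rows, "migrated", "exploited", confounders=["crisis"])
```

In this toy data the unadjusted difference is larger than the adjusted one, because the confounder is more common among the exposed. The adjusted figure is only credible if every relevant confounder has been measured, which is exactly the kind of assumption that domain knowledge has to supply and defend.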

One of the most challenging aspects of causal inference is knowing which of multiple reasonable decisions to make at each step of the process. This includes how to define the population, exposure, and outcome of interest, how to model the causal structure of the domain, which estimation approach to use, and so on. Nonexperts deal with even greater uncertainty about whether the final result may hinge on some arbitrary decision, like the precise value of a threshold or the contents of a query.

To address this uncertainty and counter any claims of selective reporting in support of a preferred hypothesis, ShowWhy enables specification curve analysis, in which all reasonable specifications of the causal inference task can be estimated, refuted, and jointly analyzed for significance. While a single estimate of the causal effect can inspire both overconfidence and underconfidence, depending on the prior beliefs of the audience, ShowWhy promotes a more balanced discussion about the overall strength (and contingency) of a much broader body of evidence. ShowWhy shifts the focus to where it matters: from a theoretical debate about the validity of a given result to a practical debate about the validity of decisions that make a meaningful difference to such results in practice.
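The enumeration step of a specification curve can be sketched as follows: every combination of reasonable analytic choices is estimated, and the estimates are sorted so that their spread is visible at a glance. This sketch covers only enumeration and sorting, not refutation or joint significance testing, and it uses a simple stratified difference in means as the estimator. The data is randomly simulated, and the variable names, thresholds, and adjustment sets are hypothetical.

```python
import random
from collections import defaultdict
from itertools import product
from statistics import mean, median


def stratified_effect(rows, is_exposed, outcome, confounders):
    """Stratum-size-weighted difference in mean outcome, exposed minus unexposed."""
    strata = defaultdict(lambda: ([], []))  # (unexposed outcomes, exposed outcomes)
    for row in rows:
        key = tuple(row[c] for c in confounders)
        strata[key][int(is_exposed(row))].append(row[outcome])
    pairs = [(len(u) + len(e), mean(e) - mean(u)) for u, e in strata.values() if u and e]
    if not pairs:
        return None
    return sum(n * d for n, d in pairs) / sum(n for n, _ in pairs)


# Simulated data: outcome risk rises with "dose" and differs by "region".
rng = random.Random(0)
rows = []
for _ in range(400):
    region = rng.choice(["north", "south"])
    dose = rng.randint(0, 10)
    risk = 0.2 + 0.04 * dose + (0.1 if region == "south" else 0.0)
    rows.append({"region": region, "dose": dose, "outcome": int(rng.random() < risk)})

# Each specification is one combination of defensible analytic choices.
thresholds = [3, 5, 7]                   # alternative definitions of "exposed"
adjustment_sets = [(), ("region",)]      # alternative sets of confounders to control for

curve = []
for threshold, adjust in product(thresholds, adjustment_sets):
    estimate = stratified_effect(
        rows, lambda row, t=threshold: row["dose"] >= t, "outcome", adjust
    )
    if estimate is not None:
        curve.append({"threshold": threshold, "adjust": adjust, "estimate": estimate})

curve.sort(key=lambda spec: spec["estimate"])
median_estimate = median(spec["estimate"] for spec in curve)
share_positive = mean(spec["estimate"] > 0 for spec in curve)
```

Reading the sorted curve alongside the choices that produced each point shows which decisions actually move the result, which is where the discussion is most useful.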

ShowWhy will be released open-source on GitHub in late 2021. While this tool can be used to answer a wide range of causal questions across domains, we are particularly interested in how it can help our partners understand the drivers of human trafficking and exploitation.

Just as ShowWhy aims to make the practice of causal inference accessible to many for the first time, the Global Human Trafficking Synthetic Dataset similarly makes available, for the first time, rich microdata describing all victims of trafficking identified by CTDC data contributors. At the upcoming Microsoft Research Summit, October 19–21, 2021, we’ll show how this combination of resources can enable community-generated evidence in support of, for example, the link between crises and trafficking reported by IOM.

While such evidence generated from synthetic data is suggestive rather than conclusive, it allows the community to conceive and evaluate causal questions that would otherwise be inconceivable. And because ShowWhy enables simple reproduction of analyses over alternative datasets, organizations like IOM that control highly sensitive data can easily use their own data to validate any external analyses performed using synthetic data.

Partner use of retrospective pharmacoepidemiological analysis in support of off-label drug discovery in the fight against COVID-19 and other respiratory infections:

- The Association Between Alpha-1 Adrenergic Receptor Antagonists and In-Hospital Mortality From COVID-19

- COVID-19 outcomes among hospitalized men with or without exposure to alpha-1-adrenergic receptor blocking agents

- Alpha-1 adrenergic receptor antagonists to prevent hyperinflammation and death from lower respiratory tract infection

- Preventing cytokine storm syndrome in COVID-19 using α-1 adrenergic receptor antagonists

Developing evidence of change from temporal data

Research challenge

If people can’t see the structure and dynamics of a phenomenon, they can’t make effective policy decisions at the tactical level. However, in situations with substantial variability in how data observations occur over time, it can be difficult to separate meaningful changes from the noise.

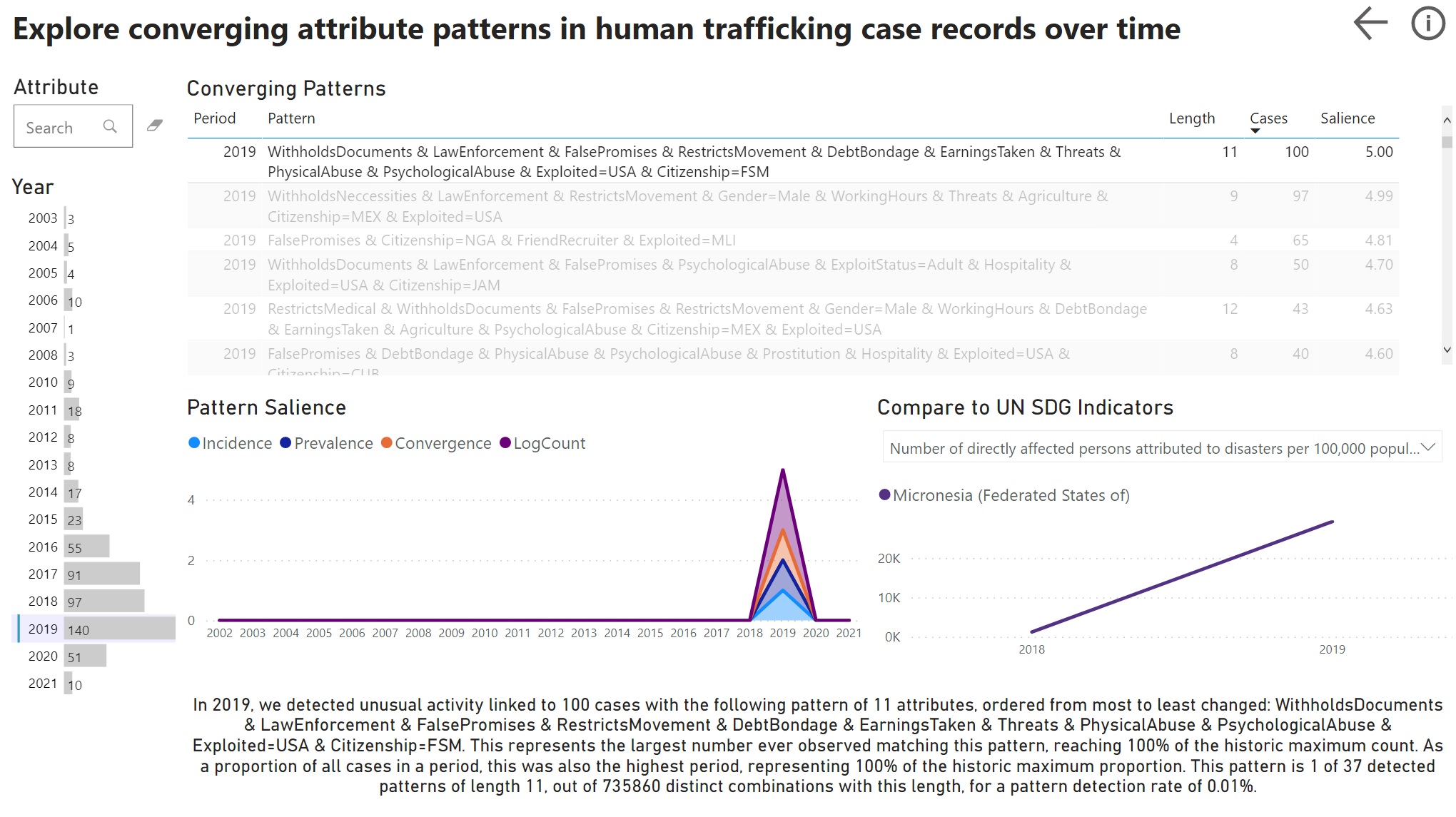

This challenge arose in the 2021 TAT Accelerator Program, currently in progress, with Unseen UK and Seattle Against Slavery as participating organizations. For Unseen, one major challenge is identifying hidden patterns and emerging trends within case records generated through calls to the UK Modern Slavery and Exploitation helpline.

While it is easy to notice a dramatic spike in any one of the many attributes that describe a trafficking case (for example, an increase in reports linked to a particular location, industry, or age range), it is much harder to identify unusual or unusually frequent combinations of attributes (for example, a particular location, industry, and age range) that may represent an underlying change in real-world trafficking activity.

Compared with statistics on individual attributes, attribute combinations describe actual cases in ways that can directly inform targeted policy responses. The problem is that the number of attribute combinations grows combinatorially, each combination having a maximum frequency at some point in time, and only a small proportion of these maxima representing a meaningful change.
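To make the scale of the problem concrete, the sketch below counts how many distinct attribute combinations are possible when up to a given number of attributes are combined. The attribute names and the number of values per attribute are hypothetical.

```python
from itertools import combinations
from math import prod


def count_attribute_combinations(values_per_attribute, max_len):
    """Number of distinct value combinations drawn from 1..max_len different attributes."""
    total = 0
    for size in range(1, max_len + 1):
        for subset in combinations(values_per_attribute.values(), size):
            total += prod(subset)  # one combination per choice of value in each attribute
    return total


# Hypothetical schema: attribute name -> number of distinct values.
schema = {"location": 10, "industry": 8, "age_range": 6, "gender": 3}

growth = {n: count_attribute_combinations(schema, n) for n in range(1, 5)}
# growth == {1: 27, 2: 287, 3: 1331, 4: 2771}
```

Even this four-attribute schema yields thousands of candidate combinations, each with its own peak frequency in some period, which is why screening maxima one by one does not scale.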

Research question

How can we detect meaningful changes within noisy data streams, in a way that accounts for intrinsic variability over time, reveals emerging groups of interrelated records and attribute values, and enables differentiated policy response?

Enabling technology

For this problem, we are collaborating with the School of Mathematics Research at the University of Bristol on how to apply their recent advances in graph statistics to the analysis of human trafficking data. With the CTDC Global Human Trafficking Synthetic Dataset as representative data, we can connect pairs of attributes based on the number of records sharing both attributes in each time period of interest (for example, for each year of victim registration).
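The graph construction described above can be sketched in a few lines: for each time period, every pair of attribute values that appears together in a record gets an edge whose weight is the number of records sharing both. The field names and toy records below are hypothetical, and this is an illustrative sketch rather than the code used in the collaboration.

```python
from collections import Counter, defaultdict
from itertools import combinations


def cooccurrence_graphs(records, period_field):
    """Map each period to a Counter of weighted edges between co-occurring attribute values."""
    graphs = defaultdict(Counter)
    for record in records:
        period = record[period_field]
        nodes = sorted(
            f"{name}:{value}" for name, value in record.items() if name != period_field
        )
        for a, b in combinations(nodes, 2):
            graphs[period][(a, b)] += 1  # edge weight = number of records sharing both
    return dict(graphs)


# Hypothetical toy records (not real data).
records = [
    {"year": 2019, "gender": "F", "sector": "domestic"},
    {"year": 2019, "gender": "F", "sector": "domestic"},
    {"year": 2020, "gender": "F", "sector": "agriculture"},
]
graphs = cooccurrence_graphs(records, period_field="year")
```

The result is one weighted graph per period over a shared set of attribute nodes, which is the input that a dynamic graph embedding needs.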

Given this time series of graphs, we can use Unfolded Adjacency Spectral Embedding (UASE) to map all attributes over all time periods into a single embedded space with the strong stability guarantee that constant positions in this space represent constant patterns of behavior. The more similar the behavior of two nodes, the closer their positions in the embedding. By applying new insights into the measurement of relatedness within embedded spaces, we can identify groups of attribute nodes “converging” towards one another in a given time period, with respect to all other periods, as a measure of meaningful change normalized over all attributes and periods.
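A minimal NumPy sketch of the unfolding idea follows: the per-period adjacency matrices are concatenated side by side, a truncated singular value decomposition is taken, and each period receives its own set of node positions in the shared space. It assumes the standard formulation of UASE and is not the graspologic implementation; the function name, the toy matrices, and the choice of two dimensions are illustrative assumptions.

```python
import numpy as np


def unfolded_ase(adjacency_matrices, d):
    """Embed T graphs on the same n nodes into one d-dimensional space.

    Returns an n x d anchor embedding and a list of T per-period n x d embeddings.
    """
    unfolded = np.hstack(adjacency_matrices)            # shape n x (n * T)
    u, s, _ = np.linalg.svd(unfolded, full_matrices=False)
    u_d, s_d = u[:, :d], s[:d]                          # assumes the top d singular values are nonzero
    anchor = u_d * np.sqrt(s_d)
    # Block t of V * sqrt(S) equals A_t^T U S^(-1/2), so it depends only on A_t and the shared basis.
    per_period = [a.T @ u_d / np.sqrt(s_d) for a in adjacency_matrices]
    return anchor, per_period


# Toy weighted graphs on four nodes: periods 0 and 1 are identical, period 2 adds a strong 0-3 edge.
base = np.array([
    [0, 2, 1, 0],
    [2, 0, 1, 0],
    [1, 1, 0, 1],
    [0, 0, 1, 0],
], dtype=float)
changed = base.copy()
changed[0, 3] = changed[3, 0] = 3.0

anchor, per_period = unfolded_ase([base, base, changed], d=2)

# Distance between nodes 0 and 3 in each period, as a crude look at whether they move together.
distances = [float(np.linalg.norm(y[0] - y[3])) for y in per_period]
```

Because each period's embedding is computed from that period's matrix and one shared basis, the two identical periods receive identical positions, which is a small-scale illustration of the stability property. Comparing pairwise distances across periods is only a rough proxy for the convergence measure described above.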

To date, we have informally observed that combinations of converging attributes typically coincide with the maximum absolute or relative frequency of the detected attribute combination over all time, something that would immediately be understood as an “insight.” Due to the graph method used to generate them, these insights are both structurally and statistically meaningful. However, understanding whether an insight represents a meaningful change in the real world demands domain knowledge beyond the data. This is why, as with Synthetic Data Showcase, we use Power BI to create visual interfaces for interactively exploring and explaining sets of candidate insights in the context of other real-world data sources, like UN SDG indicators on established causes of human trafficking.

With the 2021 TAT accelerator still at an early stage, the CTDC Global Human Trafficking Synthetic Dataset provided a realistic and recognizable foundation for demonstrating new analytic capabilities that can help address real, emerging (or previously unobserved) forms of the problem. As the accelerator proceeds, we will work to enable each organization to apply these capabilities to their own data, progressing through a series of external demonstrations on open data, mock data, and de-identified data, toward internal testing on actual sensitive data and integration with real-world tools and workflows.

At the 2021 Microsoft Research Summit, we will share our TAT accelerator work in progress and demonstrate our new dynamic graph analysis capabilities using the newly available CTDC Global Human Trafficking Synthetic Dataset, which contains evidence of twice as many attribute relationships as the previous k-anonymous data release. Following the summit, we will also update our open-source graspologic graph statistics package to include all of the methods from our collaborators at the University of Bristol supporting our anti-trafficking efforts. Examples of end-to-end use of these methods, complete with interactive Power BI reports, will be made available following the conclusion of the TAT accelerator in early 2022.

Partner research into dynamic graph embedding used in this case study and our graspologic graph statistics package:

- The multilayer random dot product graph

- Spectral embedding for dynamic networks with stability guarantees

- Matrix factorisation and the interpretation of geodesic distance

- A Central Limit Theorem for an Omnibus Embedding of Multiple Random Dot Product Graphs

Towards combinatorial impact

For Synthetic Data Showcase, attribute combinations represent privacy to be preserved. For ShowWhy, they represent bias to be controlled. And for our dynamic graph capabilities, they represent insights to be revealed. The result in each case is a form of evidence that wouldn’t otherwise exist, used to tackle problems that couldn’t otherwise be solved. And while we have addressed the private, observational, and temporal nature of real-world data separately, the reality is that many datasets across domains share all these qualities. By making our collection of real-world evidence tools available open-source, we hope to maximize the ability of organizations around the world to contribute to a shared evidence base in their problem domain, for any and all problems of societal significance, both today and into the future.

As a next step for our anti-trafficking work in particular, we are also excited to announce that Microsoft has joined TellFinder Alliance, a global network of partners working to combat human trafficking using ephemeral web data. The associated TellFinder application already helps investigators and analysts develop evidence at the case level, leading to the prosecution of both individual traffickers and organized trafficking networks. By applying our tools with partners through both Tech Against Trafficking and TellFinder Alliance, we hope to develop the evidence that will help shape future interventions at the policy level, disrupting the mechanisms by which trafficking takes place and leaving no room in society for any kind of slavery or exploitation.