This blog post is a part of a series exploring our research in privacy, security, and cryptography. For the previous post, see https://www.microsoft.com/en-us/research/blog/research-trends-in-privacy-security-and-cryptography. While AI has the potential to massively increase productivity, this power can be used equally well for malicious purposes, for example, to automate the creation of sophisticated scam messages. In this post, we explore threats AI can pose for online communication ecosystems and outline a high-level approach to mitigating these threats.

Communication in the age of AI

Concerns regarding the influence of AI on the integrity of online communication are increasingly shared by policymakers, AI researchers, business leaders, and other individuals. These concerns are well-founded, as benign AI chatbots can be easily repurposed to impersonate people, help spread misinformation, and sway both public opinion and personal beliefs. So-called “spear phishing” attacks, which are personalized to the target, have proved devastatingly effective. This is particularly true if victims are not using multifactor authentication, meaning an attacker who steals their login credentials with a phishing email could access authentic services with those credentials. This opportunity has not been missed by organized cybercrime; AI-powered tools marketed to scammers and fraudsters are already emerging. This is disturbing, because democratic systems, business integrity, and interpersonal relationships all hinge on credible and effective communication, a process that has notably migrated to the digital sphere.

As we enter a world where people increasingly interact with artificial agents, it is critical to acknowledge that these challenges from generative AI are not merely hypothetical. In the context of our product offerings at Microsoft, they materialize as genuine threats that we are actively addressing. We are beginning to witness the impact of AI in generating highly specific types of text (emails, reports, scripts, code) in a personalized, automated, and scalable manner. In the workplace, AI-powered tools are expected to bring about a huge increase in productivity, allowing people to focus on the more creative parts of their work rather than tedious, repetitive details. In addition, AI-powered tools can improve productivity and communication for people with disabilities or among people who do not speak the same language.

In this blog post, we focus on the challenge of establishing trust and accountability in direct communication (between two people), such as email, direct messages on social media platforms, SMS, and even phone calls. In all these scenarios, messaging commonly takes place between individuals who share little or no prior context or connection, yet those messages may carry information of high importance. Some examples include emails discussing job prospects, new connections from mutual friends, and unsolicited but important phone calls. The communication may be initiated on behalf of an organization or an individual, but in either case we encounter the same problem: if the message proves to be misleading, malicious, or otherwise inappropriate, holding anyone accountable for it is impractical, may require difficult and slow legal procedures, and does not extend across different communication platforms.

As the scale of these activities increases, there is also a growing need for a flexible cross-platform accountability mechanism that allows both the message sender and receiver to explicitly declare the nature of their communication. Concretely, the sender should be able to declare accountability for their message and the receiver should be able to hold the sender accountable if the message is inappropriate.

Elements of accountability

The problems outlined above are not exactly new, but recent advances in AI have made them more urgent. Over the past several years, the tech community, alongside media organizations and others, has investigated ways to determine whether text or images were created by AI; for example, C2PA, a content provenance standard, is one possible solution among others. With AI-powered tools increasingly being used in the workplace, Microsoft believes that it will take a combination of approaches to provide the highest value and most transparency to users.

Focusing on accountability is one such approach. We can start by listing some properties we expect of any workable solution:

- People and organizations need to be able to declare accountability for the messages they send.

- Receivers need to be able to hold the senders accountable if the message is inappropriate or malicious, to protect future potential victims.

- There must exist an incentive for the sender to declare accountability.

- The mechanism should only solve the accountability problem and nothing else. It must not have unintended side effects, such as a loss of privacy for honest participants.

- Receivers should not be required to register with any service.

- The accountability mechanism must be compatible with the plurality of methods people use to communicate today.

One way to build an accountability mechanism is to use a reputation system that verifies real-world identities, connecting our digital interactions to a tangible and ultimately accountable organization or human identity. Online reputation has now become an asset that organizations and individuals have a vested interest in preserving. It creates an incentive for honest and trustworthy behavior, which ultimately contributes to a safer and more reliable digital environment for everyone.

Reputation system for online accountability

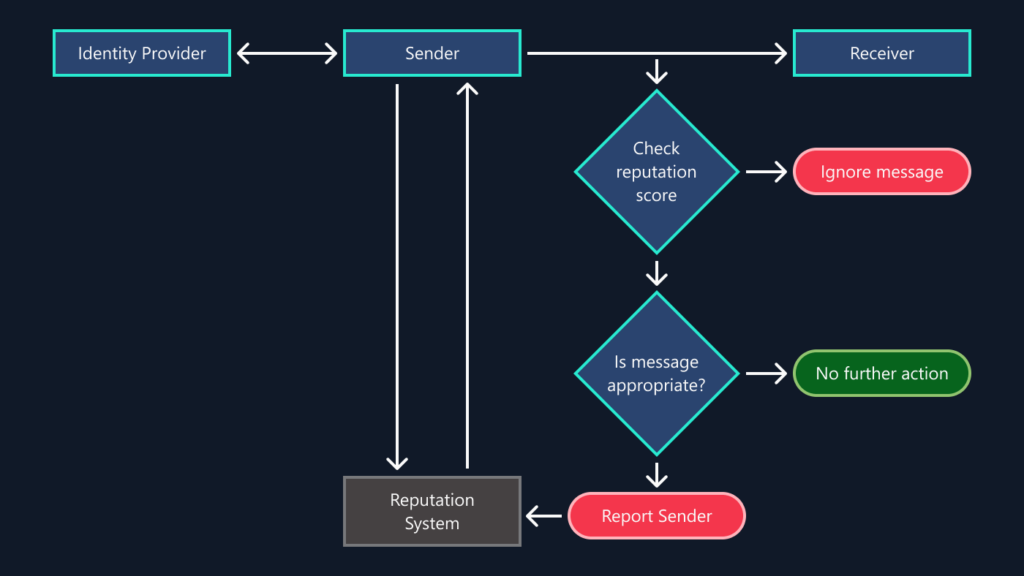

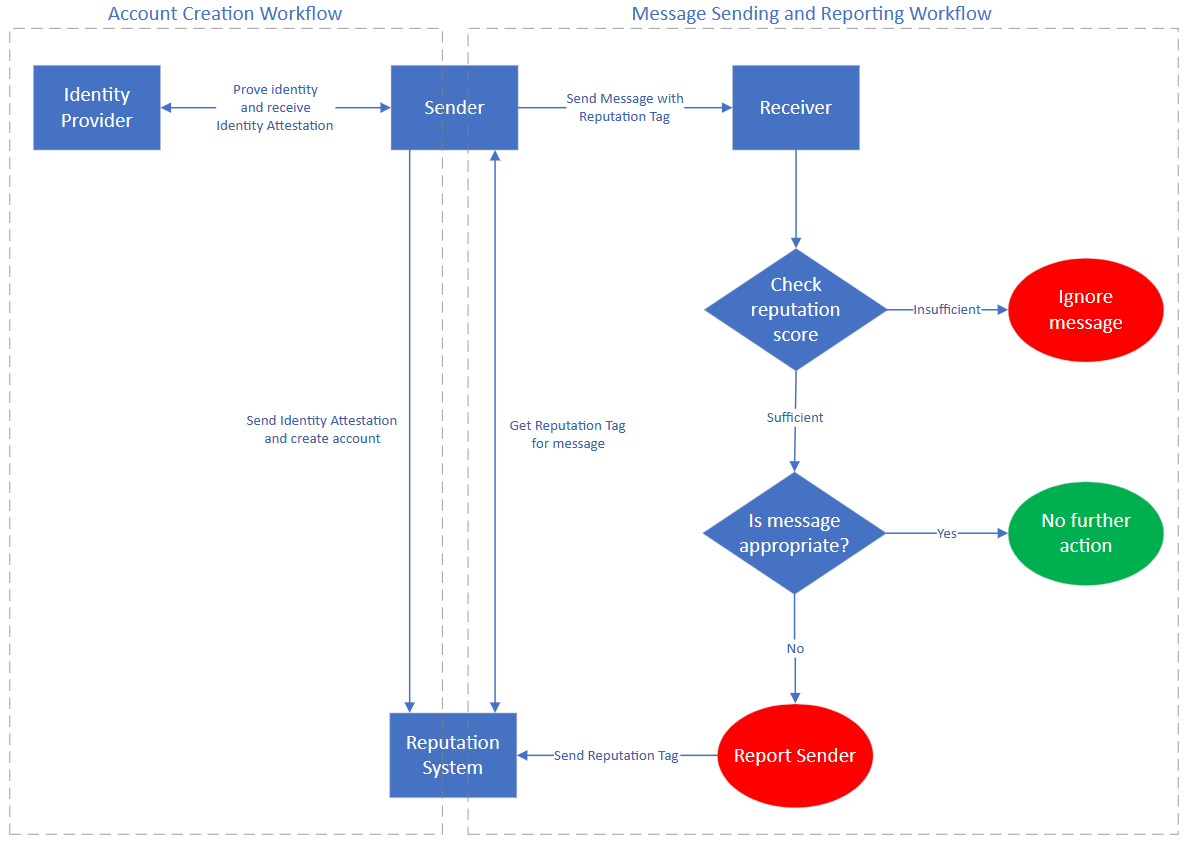

Consider what an online communication user experience could be like with an integrated reputation system. In this solution, a message sender could declare their accountability by binding their message to their account in the reputation system in the form of a cryptographic reputation tag. Conversely, the receiver uses the tag to verify the sender’s reputation and can use it to report the sender if the message is inappropriate, reducing the sender’s reputation. It is the sender’s responsibility to judge whether the receiver will perceive the message as inappropriate.
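The workflow described above (declare accountability, verify, report) can be illustrated with a deliberately simplified sketch. Everything in it is an assumption made for illustration: the `ToyReputationService` class, its method names, the numeric scores, and the use of an HMAC as the tag are all hypothetical and are not the design discussed in this post. In particular, this toy embeds the sender's account in the tag and relies on a single trusted service, so it offers none of the privacy properties a real system would need.

```python
import hashlib
import hmac
import secrets


class ToyReputationService:
    """Hypothetical centralized reputation service (illustration only)."""

    def __init__(self):
        self._keys = {}         # account id -> secret key used to create tags
        self._scores = {}       # account id -> current reputation score
        self._reported = set()  # tags that have already been used in a report

    def register(self, account, initial_score=100):
        # Assumption: the service has already verified who owns the account.
        self._keys[account] = secrets.token_bytes(32)
        self._scores[account] = initial_score

    def issue_tag(self, account, message):
        """Sender side: bind a message to the sender's account."""
        mac = hmac.new(self._keys[account], message, hashlib.sha256).hexdigest()
        return f"{account}:{mac}"

    def verify(self, tag, message):
        """Receiver side: return the sender's score, or None if the tag is invalid."""
        account, _, mac = tag.partition(":")
        key = self._keys.get(account)
        if key is None:
            return None
        expected = hmac.new(key, message, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(mac.encode(), expected.encode()):
            return None
        return self._scores[account]

    def report(self, tag, message, penalty=10):
        """Receiver side: reduce the sender's score, at most once per tag."""
        if tag in self._reported or self.verify(tag, message) is None:
            return False
        self._reported.add(tag)
        account = tag.partition(":")[0]
        self._scores[account] -= penalty
        return True


service = ToyReputationService()
service.register("billing@example.com")
message = b"Please confirm your billing address."
tag = service.issue_tag("billing@example.com", message)
print(service.verify(tag, message))  # score if the tag matches this message
```

Because the tag is computed over the message itself, it cannot be copied onto a different message, and limiting each tag to one report keeps a single receiver from penalizing the sender repeatedly for the same message. Both choices are assumptions of this sketch rather than stated requirements.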

Messages with an attached reputation tag are called reputed messages, whereas those without an associated reputation are called generic messages. Reputed messages would typically make the most sense in one-to-one communication that the sender intends for a particular recipient, or one-to-many communication to a few recipients. For example, a proposal to discuss a business deal, a wedding invitation email, a payment reminder SMS from a company’s billing department, or a work email discussing a joint project might be sent as reputed messages. Generic messages would typically not be intended for a particular receiver. For example, emails sent to a mailing list (many receivers) or non-personalized advertisements (large scale) should be sent as generic.

The different components and workflows of our accountability mechanism are depicted, at a high level, in Figure 1.

Taking a concrete example, think of a situation where you receive an email from your bank asking you to verify the security settings for your account. You know that phishing emails often target such scenarios, so your first reaction is to ignore the message. However, in this case your email client has noted the valid reputation tag and automatically moved the email to a reputed messages folder. It shows the sender’s reputation, which is high, next to the message. Instead of deleting the unsolicited and slightly suspicious email, you decide to check whether the link in the email truly leads you to your bank’s website. It does, so you are now convinced this is a legitimate message and proceed with the recommendation to review your security settings.

As another example, suppose you work in your company’s billing department. You find something wrong with a customer’s billing information and decide to send them an email to get more information. Since this is an important matter, you hope to maximize the chance of them seeing your message by attaching the billing department’s reputation tag to it. The customer sees the email arrive in the reputed messages folder, notices the sender’s high reputation, and responds to it with appropriate urgency.

As a third example, imagine that you receive an unsolicited phone call from someone who claims to be your distant relative and wants to discuss a family reunion they are organizing. They ask you questions about your family, making you slightly uneasy. Right before calling you, they sent you a reputation tag via SMS encoding their reputation and the context of their call. You verify that the tag is valid but see that their reputation is only medium. You decide to end the call and report them using the tag they shared, as you felt that their call asking for such sensitive information was inappropriate.

These examples highlight that this single system can be used across many different modes of communication, from emails to social media messages to phone calls, fostering trust and safety across the entire landscape of direct communication methods in use today.
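The client-side behavior in these examples, where a message with a valid tag is placed in a reputed messages folder alongside the sender's reputation, can be sketched as a small routing function. This is a minimal illustration under stated assumptions: the `Message` type, the `triage` function, the folder names, and the `verify` callback (standing in for a query to some reputation system) are hypothetical. Treating a message with an invalid tag the same as a generic message is also an assumption; the post does not specify that case.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Message:
    body: bytes
    tag: Optional[str] = None  # reputation tag, if the sender attached one


def triage(
    message: Message,
    verify: Callable[[str, bytes], Optional[int]],
) -> Tuple[str, Optional[int]]:
    """Return (folder, reputation) for an incoming message.

    `verify` is a hypothetical callback that returns the sender's
    reputation score for a valid tag, or None if the tag is invalid.
    """
    if message.tag is None:
        return ("generic", None)   # no accountability declared
    score = verify(message.tag, message.body)
    if score is None:
        return ("generic", None)   # assumption: invalid tags earn no special treatment
    return ("reputed", score)      # client shows the score next to the message
```

The same function would apply whether the message arrived by email, SMS, or a social media platform, since it depends only on the message content and the tag rather than on the transport.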

Call to action

In this blog post we have attempted to outline a solution to an already existing problem that is exacerbated by modern AI. Capturing the core of this problem is not easy, and many of the previously proposed solutions have unintended consequences that make them unworkable. For example, we explained why approaches that attempt to limit the use of AI are unlikely to succeed.

The solutions are not easy either. The messaging ecosystem is vastly complex, and any solution requiring fundamental changes to it is unlikely to be acceptable. Usability is a key concern as well: if the system is only designed to communicate risk, we may want to avoid inadvertently communicating safety, much like the presence of padlock symbols as a sign of HTTPS has caused confusion and underestimation of risk for web browser users.

Is there a comprehensive identity framework that would connect real-world identities to digital identities? This connection to a unique real-world identity is crucial, as otherwise anyone could simply create as many distinct reputation accounts as they need for any nefarious purpose.

For organizations, the situation is easier, because countries and states tend to hold public records that establish their existence and “identity.” For individuals, platforms like Reddit, TripAdvisor, and Stack Overflow have built reputation systems for their internal use, but without a foundational layer that confirms unique human identities these cannot be used to solve our problem, just as Facebook’s “real name” policy and X Premium (formerly Twitter Blue) have been insufficient to prevent the creation and use of fake accounts. Still, this is not an impossible problem to solve: LinkedIn is already partnering with CLEAR to bind government ID verification to a verification marker in user profiles, and with Microsoft Entra Verified ID to verify employment status. Worldcoin is building a cryptocurrency with each wallet being linked to a unique real-world person through biometrics, and Apple recently announced Optic ID for biometric authentication through their Vision Pro headset.

Whenever we talk about identities—especially real-world identities—we need to talk about privacy. People use different digital identities and communication methods in different communities, and these identities need to be kept separate. Trusting a reputation system with such sensitive information requires careful consideration. Our preliminary research suggests that techniques from modern cryptography can be used to provide strong security and privacy guarantees so that the reputation system learns or reveals nothing unnecessary and cannot be used in unintended ways.

What about the governance of the reputation system? At one extreme, a single centralized party hosts the system while providing cryptographic transparency guarantees of correct operation. At the other extreme, we should explore whether a purely decentralized implementation is feasible. There are also options between these two extremes; for example, multiple smaller reputation systems hosted by different companies and organizations.

These open questions present an opportunity and a responsibility for the research community. At Microsoft Research, we are diligently working on aspects of this problem in partnership with our research on privacy-preserving verifiable information and identity, secure hardware, transparency systems, and media provenance. We invite the rest of the research community to join in by either following the path we outlined here or suggesting better alternatives. This is the start of a broad exploration that calls for a profound commitment and contribution from all of us.