News & features

In the news | The Register

Microsoft to upgrade language translator with new class of AI model

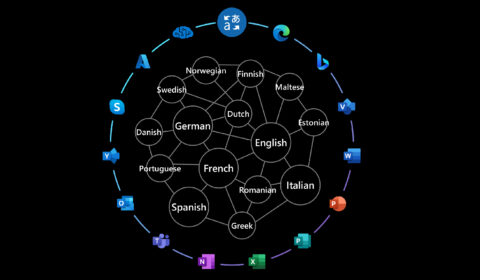

Microsoft is replacing at least some of its natural-language processing systems with a more efficient class of AI model. These transformer-based architectures have been named “Z-code Mixture of Experts.” Only parts of these models are activated when they’re run since…

Microsoft Translator enhanced with Z-code Mixture of Experts models

Hany Hassan Awadalla, Krishna Doss Mohan, and Vishal Chowdhary

Translator, a Microsoft Azure Cognitive Service, is adopting Z-code Mixture of Experts models, a breakthrough AI technology that significantly improves the quality of production translation models. As a component of Microsoft’s larger XYZ-code initiative to combine AI models for text,…

In the news | VentureBeat

Microsoft claims new AI model architecture improves language translation

Coinciding with Nvidia’s March 2022 GPU Technology Conference, Microsoft today announced an update to Translator — its Azure service that can translate roughly 100 languages across call centers, chatbots, and third-party apps — that the company claims greatly improves the…

In the news | TechCrunch

Microsoft improves its AI translations with Z-Code

Microsoft today announced an update to its translation services that, thanks to new machine learning techniques, promises significantly improved translations between a large number of language pairs. Based on its Project Z-Code, which uses a “sparse Mixture of Experts” approach,…

In the news | Microsoft AI blog

New Z-code Mixture of Experts models improve quality, efficiency in Translator and Azure AI

Microsoft is making upgrades to Translator and other Azure AI services powered by a new family of artificial intelligence models its researchers have developed called Z-code, which offer the kind of performance and quality benefits that other large-scale language models…

In the news | NVIDIA Blogs

Getting People Talking: Microsoft Improves AI Quality and Efficiency of Translator Using NVIDIA Triton

Microsoft aims to be the first to put a class of powerful AI transformer models into production using Azure with NVIDIA GPUs and Triton inference software. When your software can evoke tears of joy, you spread the cheer. So, Translator,…

In the news | ZDNet

Microsoft improves Translator and Azure AI services with new AI ‘Z-code’ models

Microsoft is updating its Translator and other Azure AI services with a set of AI models called Z-code, officials announced on March 22. These updates will improve the quality of machine translations, as well as help these services support more…

In the news | Azure Cognitive Services

Azure Cognitive Service for Language supports 96 languages for custom features

Last year, Azure Cognitive Services announced the release of Azure Cognitive Service for Language. With that release, multiple NLP services came together into a unified, state-of-the-art NLP service available under one resource and one user experience. Three new custom features…

In the news | Azure Cognitive Services

What’s new in Azure Cognitive Service for Language?

Azure Cognitive Service for Language is updated on an ongoing basis. To help you stay up to date with recent developments, this article provides information about new releases and features.