What is R2?

R2 is a probabilistic programming system that uses powerful techniques from program analysis and verification for efficient Markov Chain Monte Carlo (MCMC) inference. The language that is used to describe probabilistic models in R2 is based on C#.R2 compiles the given model into executable code to generate samples from the posterior distribution. The inference algorithm currently implemented in R2 is a variation of the Metropolis-Hastings sampling algorithm.

Getting R2

Click on this link to download R2. To install R2, simply extract the downloaded zip file to an appropriate directory. To get started with using R2, please refer to the included user guide.

-

Let us see how to use R2 for performing inference on probabilistic models by means of an example. We’ll consider the famous Monty Hall problem for this. The Monty Hall problem can be stated as follows:

Suppose you’re on a game show, and you’re given the choice of three doors. Behind one door is a car, behind the others, goats. You pick a door, say #1, and the host, who knows what’s behind the doors, opens another door, say #3, which has a goat. He says to you, “Do you want to pick door #2?” Is it to your advantage to switch your choice of doors?

– Marilyn vos Savant, Parade Magazine, 1990

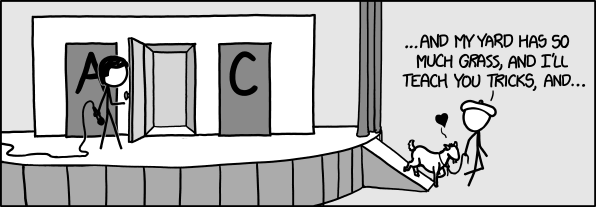

(Source: xkcd.com)

We need to find the probabilities of the contestant winning the car when they don’t change their choice and also when they change their choice. For this, we would have to construct a model. This model can be represented in R2 by means of the following program:

In line 11, we initialize the game by choosing the door behind which there would be a car. Behind the other two doors, there would be goats. This is done by randomly picking a door for the car. Since each door has equal probability of getting picked, this is modeled by drawing a sample from a Categorical distribution with 3 values. The probability of picking each value is 1/3.

Next, in line 14, we model the contestant picking a door. Again, each door has equal probability of getting picked. As previously, this is specified by drawing a sample from a Categorical distribution with 3 values where the probability of picking each value is 1/3.

In lines 17-46, we make the host open one of the other two doors behind which there is a goat. If the contestant has chosen the door behind which the car is present, then the host picks one of the other two doors with equal probability. Otherwise, there is only one door behind which there is a goat and the host picks this door.

Finally, we check whether the contestant wins the car by not changing their choice or by changing it. These values are stored in the boolean variables chosenDoorContainsCar and otherDoorContainsCar. Both these variables are returned in a tuple in line 62.

Now, let us use R2 to perform inference on this model. In a command prompt, type the following:

> R2 MontyHall.cs /numSamples:1000000

R2 would generate samples from the given model and compute the mean and variance of the variables of interest from the generated samples. In this case, R2 would print the mean and variance of each element of the returned tuple. You should get an output similar to the following:

0 Mean: 0.332854 0 Variance: 0.222062 1 Mean: 0.667146 1 Variance: 0.222062

As is clear from the above output, the contestant has a higher chance of winning the car if they change their choice.

More examples can be found in the examples/ directory of the R2 installation location.

For more information on using R2, please refer to the R2 user guide in the docs/ directory.

-

R2 currently has the following limitations which we are working towards fixing in future releases:

- Metropolis-Hastings sampling algorithm is the only inference algorithm that is currently available.

- The convergence characteristics of the Metropolis-Hastings algorithm depend upon the proposal distribution that is used. We use a heuristic to tune the proposal distribution. There is no facility that allows the user to tune the proposal distribution.

- Multi-variate distributions are not supported.

-

- Johannes Borgström (Uppsala University)

- Andy Gordon (MSR Cambridge)

- Gil Hur (Seoul National University)

Interns

- Arun Tejasvi Chaganty (Stanford University)

- Guillaume Claret (ENS Paris)

- Vijay D’Silva (UC Berkeley)

- Christian von Essen (Verimag)

- Sulekha Kulkarni (Georgia Tech)

- Sherjil Ozair (IIT Delhi)

- Robert J. Simmons (CMU)

- Samin Ishtiaq (MSR Cambridge)

- Claudio Russo (MSR Cambridge)

-

If you face any issues using R2, or have questions/comments regarding it, please feel free to contact us at: r2pp AT Microsoft DOT com.

People

Aditya Nori

Lab Director

Sriram Rajamani

Corporate Vice President, Microsoft Research