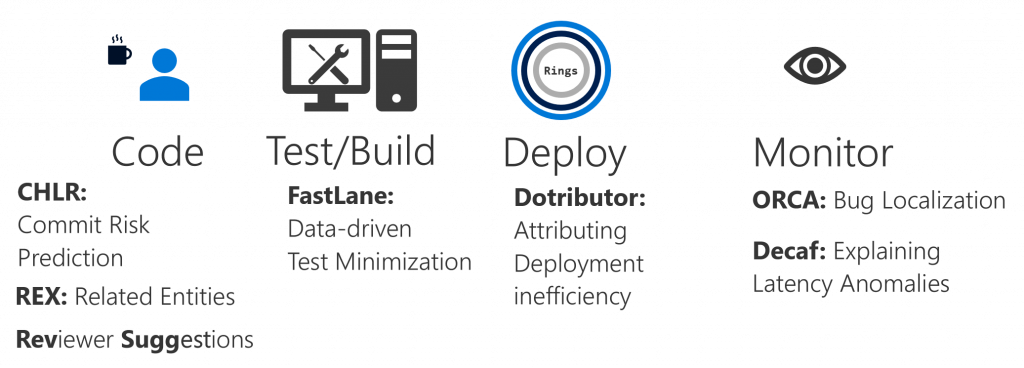

Project Sankie infuses data-driven techniques into engineering processes, development environments, and software lifecycles of large services. Sankie’s goal is to consume data from static and dynamic features of a system, learn from them, and provide meaningful insights that can be used to make decisions for developing/reviewing, testing, deploying, monitoring, and root-causing.

Sub-projects:

CHLR: Commit Risk Prediction. Given data from all stages of a DevOps pipeline, we learn a model for the risk associated with a commit. The resulting risk estimate supports many applications: the system can test risky commits more comprehensively, assign more experienced reviewers to them, or apply heavier-duty analysis techniques only to risky commits.

Intent Detection. Using past commit logs, we automatically detect the intent of a commit. For instance, is it a bug fix? Is it a code refactor? Inferring commit intent automatically keeps developers better informed and helps estimate commit risk more accurately.

Related Entities’ Exploration. Code components such as files and functions are often correlated: a change to one frequently requires a change to another. In a study, we found that developers tend to miss some of the correlated components when making a change. This tool detects such correlated entities in code and, at commit time, reminds developers of them. It has been deployed in several repositories within our organization and is finding a significant number of such potential bugs.
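One common way to detect correlated entities is to mine co-change patterns from commit history. The sketch below is a minimal illustration of that idea and is not the tool's actual method: the function names `mine_co_changes` and `missing_related`, the thresholds, and the sample history are all hypothetical.

```python
from collections import Counter
from itertools import combinations


def mine_co_changes(commits, min_support=2, min_confidence=0.6):
    """Return {file: {partner: confidence}} for files that usually change together.

    commits is a list of lists of file paths (one list per past commit).
    The thresholds are illustrative assumptions, not tuned values.
    """
    file_counts = Counter()
    pair_counts = Counter()
    for files in commits:
        unique = sorted(set(files))
        file_counts.update(unique)
        pair_counts.update(combinations(unique, 2))

    rules = {}
    for (a, b), together in pair_counts.items():
        if together < min_support:
            continue
        # Confidence is directional: P(partner changes | file changes).
        for src, dst in ((a, b), (b, a)):
            confidence = together / file_counts[src]
            if confidence >= min_confidence:
                rules.setdefault(src, {})[dst] = confidence
    return rules


def missing_related(changed_files, rules):
    """List correlated files that the new commit did not touch."""
    changed = set(changed_files)
    missing = set()
    for f in changed:
        for partner in rules.get(f, {}):
            if partner not in changed:
                missing.add(partner)
    return sorted(missing)


# Hypothetical commit history: api.py and api_schema.json always change together.
history = [
    ["api.py", "api_schema.json"],
    ["api.py", "api_schema.json", "README.md"],
    ["api.py", "api_schema.json"],
    ["README.md"],
]
rules = mine_co_changes(history)
reminders = missing_related(["api.py"], rules)
print(reminders)
```

A commit that touches only `api.py` would trigger a reminder about `api_schema.json`, while a commit that touches both would trigger none.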

Reviewer Suggestion. When creating a commit, developers manually pick a list of reviewers. This leads to a suboptimal allocation of reviews and makes inefficient use of reviewer time. Reviewer Suggestion automatically suggests reviewers based on the nature of the commit. This not only assigns the right expert reviewer to a commit but also distributes reviews fairly across reviewers, reducing skew in assignments.
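The two goals named above, expertise and fair distribution, can be combined in a single score. The following is a rough sketch under stated assumptions, not the project's implementation: expertise is approximated by how often a reviewer has reviewed the touched files, and the `load_penalty` discount, the function name `suggest_reviewers`, and the sample data are hypothetical.

```python
from collections import defaultdict


def suggest_reviewers(changed_files, review_history, open_reviews, author,
                      k=2, load_penalty=0.5):
    """Rank reviewers by file-level expertise, discounted by current workload.

    review_history: list of (reviewer, files_reviewed) tuples from past commits.
    open_reviews:   {reviewer: number of reviews currently assigned}.
    """
    changed = set(changed_files)
    expertise = defaultdict(int)
    for reviewer, files in review_history:
        expertise[reviewer] += len(set(files) & changed)

    scored = []
    for reviewer, score in expertise.items():
        if reviewer == author or score == 0:
            continue
        # Busy reviewers are discounted so that reviews spread more evenly.
        adjusted = score / (1 + load_penalty * open_reviews.get(reviewer, 0))
        scored.append((adjusted, reviewer))

    # Highest adjusted score first; ties broken alphabetically.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [reviewer for _, reviewer in scored[:k]]


# Hypothetical data: alice knows the code best but already has six open reviews.
history = [
    ("alice", ["net/socket.c", "net/tls.c"]),
    ("alice", ["net/socket.c"]),
    ("bob", ["net/socket.c"]),
    ("carol", ["ui/button.ts"]),
]
open_reviews = {"alice": 6, "bob": 0}
picks = suggest_reviewers(["net/socket.c", "net/tls.c"], history, open_reviews,
                          author="dave")
print(picks)
```

With no workload discount, `alice` would rank first on expertise alone; the discount moves `bob` ahead of her, which is the skew-reducing behavior described above.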

Fastlane: Data-driven test minimization. Fastlane uses a history of test runs collected over multiple months to build efficient test execution plans. It analyzes petabytes of logs and, using techniques such as commit risk prediction, pairwise test correlation, and runtime-based prediction, predicts the results of certain test runs so that the system need not run them. Apart from saving resources, this also fast-tracks commits.
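Of the techniques listed, pairwise test correlation is the simplest to illustrate. The sketch below assumes a toy history of pass/fail outcomes and a greedy planning rule; the function names, the 0.95 threshold, and the data are hypothetical, and it does not cover commit risk prediction or runtime-based prediction.

```python
from itertools import combinations


def pairwise_agreement(runs, min_runs=5):
    """Return {(a, b): fraction of shared runs in which tests a and b had the same outcome}.

    runs is a list of {test_name: passed} dicts, one per historical test run.
    Pairs with fewer than min_runs shared runs are skipped as too sparse.
    """
    tests = sorted({t for run in runs for t in run})
    agreement = {}
    for a, b in combinations(tests, 2):
        shared = [run for run in runs if a in run and b in run]
        if len(shared) < min_runs:
            continue
        same = sum(1 for run in shared if run[a] == run[b])
        agreement[(a, b)] = same / len(shared)
    return agreement


def plan(runs, threshold=0.95, min_runs=5):
    """Greedy plan: schedule a test unless an already-scheduled test predicts it."""
    agreement = pairwise_agreement(runs, min_runs)
    tests = sorted({t for run in runs for t in run})
    to_run, predicted_by = [], {}
    for t in tests:
        proxy = next(
            (r for r in to_run
             if agreement.get(tuple(sorted((r, t))), 0.0) >= threshold),
            None,
        )
        if proxy is None:
            to_run.append(t)
        else:
            predicted_by[t] = proxy
    return to_run, predicted_by


# Hypothetical history: test_login and test_session always agree.
runs = [
    {"test_login": True, "test_session": True, "test_export": True},
    {"test_login": False, "test_session": False, "test_export": True},
    {"test_login": True, "test_session": True, "test_export": False},
    {"test_login": True, "test_session": True, "test_export": True},
    {"test_login": False, "test_session": False, "test_export": False},
    {"test_login": True, "test_session": True, "test_export": True},
]
to_run, predicted_by = plan(runs)
print(to_run, predicted_by)
```

Here `test_session` is skipped because its outcome has always matched `test_login`, while `test_export` still runs because no other test predicts it reliably. A production system would need far more history and a safeguard for mispredictions.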

Dotributor: Attribution of deployment inefficiencies. Deployment is a complex stage in the DevOps pipeline. There is a need to attribute deployment inefficiencies to specific problems and to understand the monetary cost of these inefficiencies. Dotributor addresses this with a visualization tool that deployment engineers can use to pinpoint inefficient parts of the pipeline.

Orca: Differential bug localization. Post-deployment issues in services can cause serious service downtime. Often, these issues are caused by buggy code commits. Orca is a tool that helps an On-Call Engineer localize an issue to the buggy commit much faster than was previously possible. It does so using techniques such as differential code analysis, build provenance graphs, and commit risk estimates.

DeCaf: Getting to the root cause of abnormal request latencies. Normally, user requests to a service are served within the SLA. However, there are times when the service latency becomes anomalously large. The reason for this could be transient, or it could be an actionable issue on the service side. DeCaf is a tool that helps service engineers differentiate between transient and actionable issues. When the issue is actionable, DeCaf uses a random forest model to analyze petabytes of logs in a matter of minutes and provides to-the-point summaries that lead to the root cause of the problem.