Stabilizing Training of Generative Adversarial Networks through Regularization

- Kevin Roth

- Aurelien Lucchi

- Sebastian Nowozin

- Thomas Hofmann

Proceedings of the Neural Information Processing Systems conference

Published by Neural Information Processing Systems

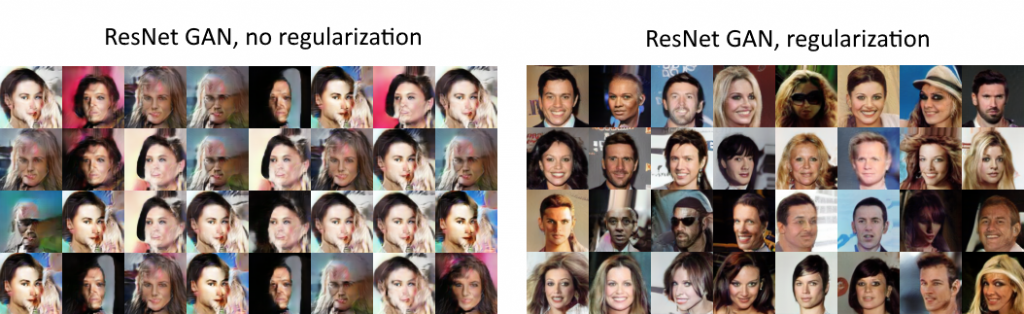

Deep generative models based on Generative Adversarial Networks (GANs) have demonstrated impressive sample quality, but they work only with a careful choice of architecture, parameter initialization, and hyper-parameters. This fragility is due in part to a dimensional mismatch between the model distribution and the true distribution, which leaves their density ratio and the associated f-divergence undefined. We overcome this fundamental limitation and propose a new regularization approach with low computational cost that yields a stable GAN training procedure. We demonstrate the effectiveness of this approach on several datasets, including common benchmark image generation tasks. Our approach turns GAN models into reliable building blocks for deep learning.
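The mismatch described above can be made concrete with a toy example. The sketch below is only an illustration, not the regularizer proposed in the paper: it uses two discrete distributions with disjoint support as a stand-in for a model and a data distribution that do not overlap, and shows that the KL divergence (one member of the f-divergence family) is infinite until both are blurred with noise. The helper names `kl_divergence` and `gaussian_smooth`, the 9-bin grid, and the noise scale are all assumptions made for this example.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions given as equal-length lists."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # by convention, 0 * log(0 / q) contributes 0
        if qi == 0.0:
            return math.inf  # p has mass where q has none: the ratio p/q blows up
        total += pi * math.log(pi / qi)
    return total


def gaussian_smooth(p, sigma):
    """Blur p with a Gaussian kernel over the grid indices, then renormalize."""
    out = [
        sum(pi * math.exp(-((i - j) ** 2) / (2.0 * sigma ** 2)) for i, pi in enumerate(p))
        for j in range(len(p))
    ]
    norm = sum(out)
    return [v / norm for v in out]


# Hypothetical toy distributions on a 9-bin grid with disjoint support.
data = [0.0, 0.0, 0.5, 0.5, 0.0, 0.0, 0.0, 0.0, 0.0]
model = [0.0, 0.0, 0.0, 0.0, 0.0, 0.5, 0.5, 0.0, 0.0]

kl_raw = kl_divergence(data, model)
kl_smoothed = kl_divergence(gaussian_smooth(data, 1.0), gaussian_smooth(model, 1.0))

print("KL without smoothing:", kl_raw)
print("KL after smoothing both distributions:", kl_smoothed)
```

Without smoothing, the divergence is infinite and carries no usable training signal; after smoothing, every bin has positive mass under both distributions, so the divergence is finite. The discrete grid and Gaussian blur were chosen only to keep the example dependency-free.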