Using Facial Animation to Increase the Enfacement Illusion and Avatar Self-Identification

- Mar Gonzalez Franco

- Anthony Steed

- Steve Hoogendyk

- Eyal Ofek

IEEE Transactions on Visualization and Computer Graphics

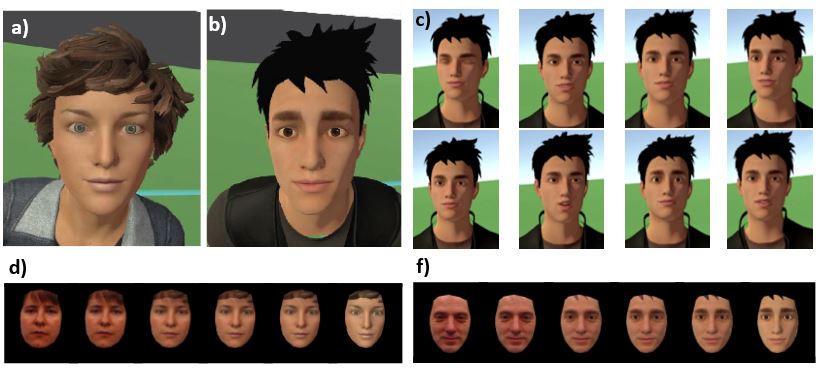

Through avatar embodiment in Virtual Reality (VR), we can achieve the illusion that an avatar substitutes for our body: the avatar moves as we move, and we see it from a first-person perspective. However, self-identification, the process of identifying a representation as being oneself, poses new challenges because a key determinant is that we see and have agency over our own face. Providing control over the face is hard with current HMD technologies because face tracking is either cumbersome or error-prone. Limited animation, however, is easily achieved based on speech. We investigate the level of avatar enfacement, that is, believing that a picture of a face is one’s own face, under three levels of facial animation: (i) one in which the avatar’s facial expressions are static, (ii) one in which we implement synchronous lip motion, and (iii) one in which the avatar presents lip-sync plus additional facial animations, including blinks, designed by a professional animator. We measure self-identification using a face morphing tool that morphs from the face of the participant to the face of a gender-matched avatar.

We find that self-identification with avatars can be increased through pre-baked animations, even when the avatars are not photorealistic and do not look like the participant.