Interpretability in NLP: Moving Beyond Vision

Deep neural network models have been extremely successful in natural language processing (NLP) applications in recent years, but a common complaint is their lack of interpretability. On the other hand, the field…

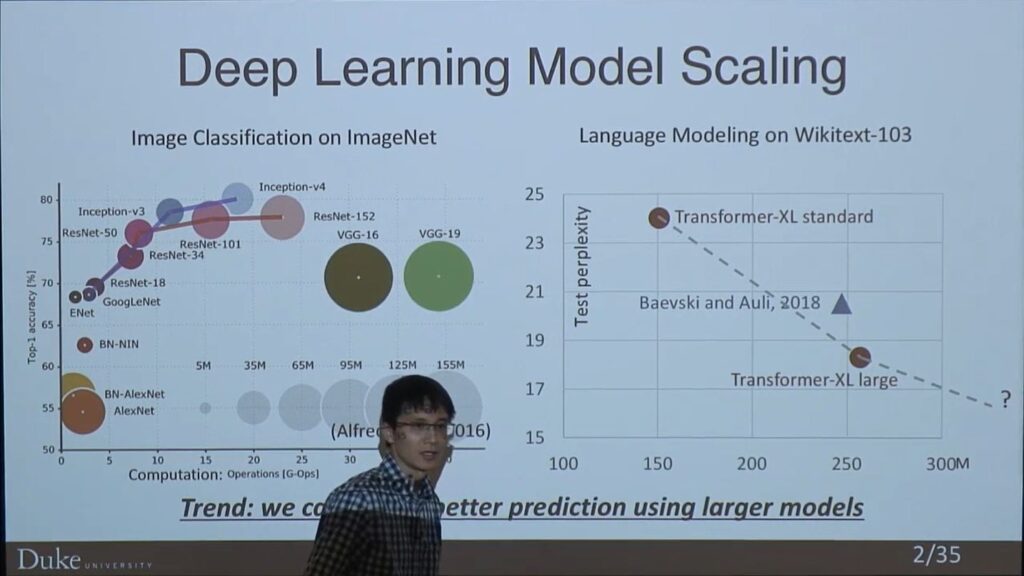

Efficient and Scalable Deep Learning

In deep learning, researchers keep achieving higher performance by using larger models. However, two obstacles prevent the community from building larger models: (1) training larger models is more time-consuming, which slows down model…

Towards Grounded Spatio-Temporal Reasoning

An Artificial Intelligence (AI) system that can understand the world around us needs to be able to interpret and reason about the world we see and the language we speak. In recent…

UniLM – Unified Language Model Pre-training

Large-scale Self-supervised Pre-training Across Tasks, Languages, and Modalities.

Visually Grounded Language Understanding and Generation

In this talk, I will present our latest work on comprehending and generating visually grounded language. First, we will discuss the challenging task of learning visual grounding of language. I will introduce how to pretrain…

Learning to Map Natural Language to General Purpose Source Code

Models that map natural language (NL) to source code in general-purpose languages such as Java, Python, and SQL are useful to two main audiences: developers who can manipulate the generated code, and non-expert…

Layer Trajectory BLSTM: New evolution enhances speech recognition technology

Speech is a signal that can enable natural interaction between humans and machines. To facilitate this exchange, machines must be able to recognize what a human has said, both the words and…