Welcome to Research Focus, a new series of blog posts that highlights notable publications, events, code/datasets, new hires and other milestones from across the research community at Microsoft.

NEW RESEARCH

Self-supervised Multi-task pretrAining with contRol Transformers (SMART)

Many real-world applications require sequential decision making, where an agent interacts with a stochastic environment to perform a task. For example, a navigating robot is expected to control itself and move to a target using sensory information it receives along the way. Learning the proper control policy can be complicated by environmental uncertainty and high-dimensional perceptual information, such as raw-pixel spaces. More importantly, the learned strategy is specific to the task (e.g., which target to reach) and the agent (e.g., a two-legged or four-legged robot). That means that a good strategy for one task does not necessarily apply to a new task or a different agent.

Pre-training a foundation model can help improve overall efficiency when facing a large variety of control tasks and agents. However, although foundation models have achieved incredible success in language domains, different control tasks and agents can have large discrepancies, making it challenging to find a universal foundation. It becomes even more challenging in real-world scenarios that lack supervision or high-quality behavior data.

In a new paper: SMART: Self-supervised Multi-task pretrAining with contRol Transformers, Microsoft researchers tackle these challenges and propose a generic pre-training framework for control problems. Their research demonstrates that a single pre-trained SMART model can be fine-tuned for various visual-control tasks and agents, either seen or unseen, with significantly improved performance and learning efficiency. SMART is also resilient to low-quality datasets and works well even when random behaviors comprise the pre-training data.

NEW RESEARCH

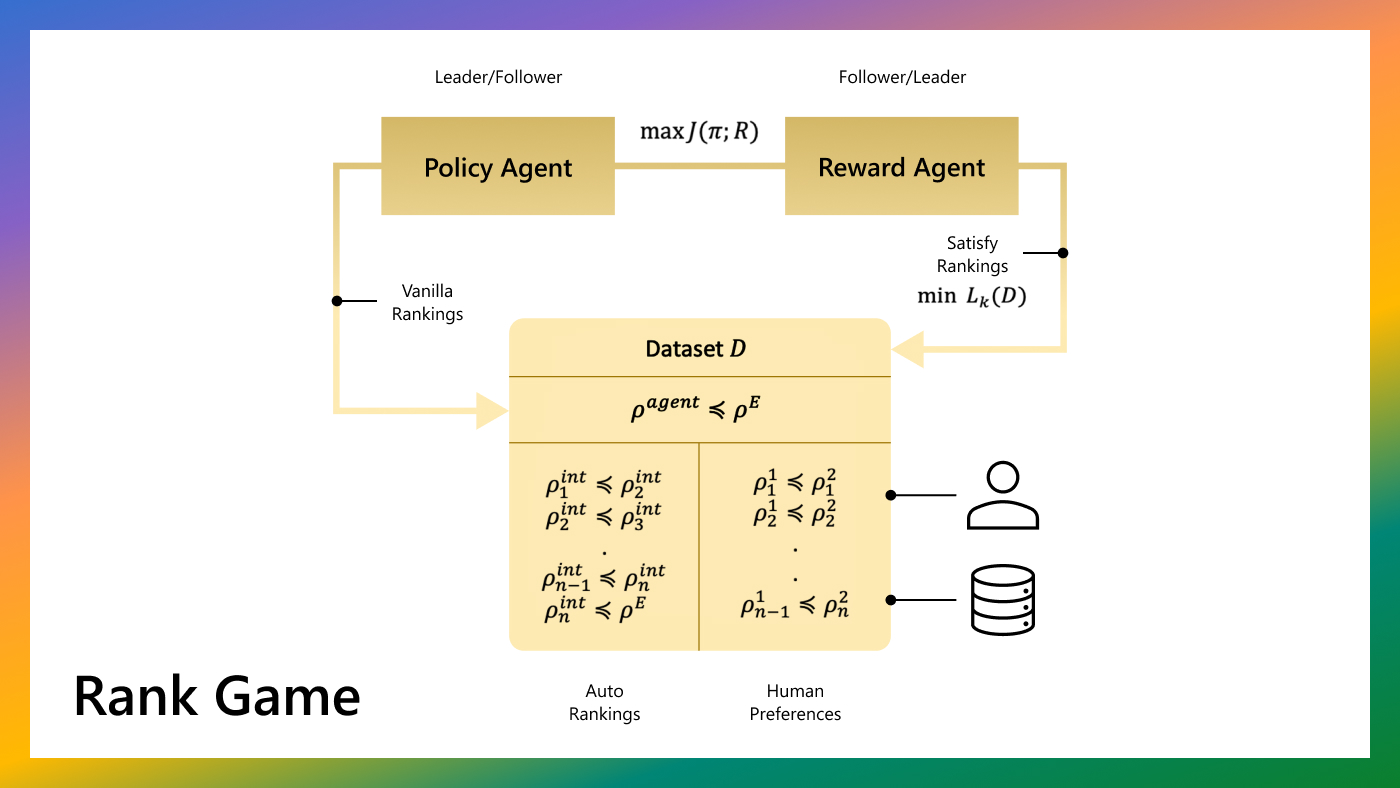

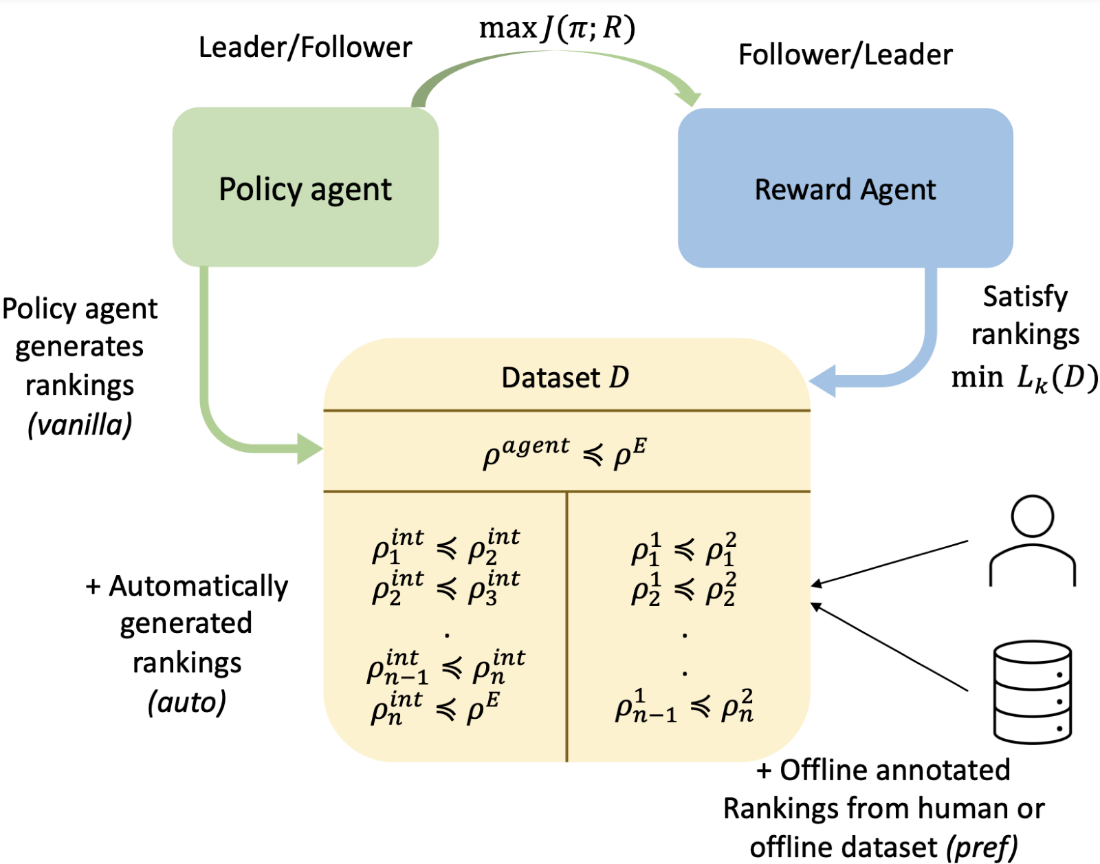

A Ranking Game for Imitation Learning

Reinforcement learning relies on environmental reward feedback to learn meaningful behaviors. Since reward specification is a hard problem, imitation learning (IL) may be used to bypass reward specification and learn from expert data, often via Inverse Reinforcement Learning (IRL) techniques. In IL, while near-optimal expert data is very informative, it can be difficult to obtain. Even with infinite data, expert data cannot imply a total ordering over trajectories as preferences can. On the other hand, learning from preferences alone is challenging, as a large number of preferences are required to infer a high-dimensional reward function, though preference data is typically much easier to collect than expert demonstrations. The classical IRL formulation learns from expert demonstrations but provides no mechanism to incorporate learning from offline preferences.

In a new paper, A Ranking Game for Imitation Learning, accepted at TMLR 2023, researchers from UT Austin, Microsoft Research, and UMass Amherst create a unified algorithmic framework for IRL that incorporates both expert and suboptimal information for imitation learning. The framework, called “rank-game,” treats imitation as a two-player ranking-based game between a policy and a reward. In this game, the reward agent learns to satisfy pairwise performance rankings between behaviors, while the policy agent learns to maximize this reward. The researchers propose a novel ranking loss function, giving an algorithm that can simultaneously learn from expert demonstrations and preferences, gaining the advantages of both modalities. Experimental results in the paper show that the proposed method achieves state-of-the-art sample efficiency and can solve previously unsolvable tasks in the Learning from Observation (LfO) setting. Project video and code can be found on GitHub.
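To make the reward player's side of the game more concrete, here is a minimal sketch of learning a reward from a single pairwise ranking. It is an illustration under loud assumptions, not the paper's method: it swaps in a generic Bradley-Terry style logistic loss rather than the novel ranking loss proposed in the paper, it assumes a linear reward over hand-made 2-D states, and it leaves out the policy player entirely. All function names and the toy data are hypothetical.

```python
import math

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def feature_sum(trajectory):
    """Component-wise sum of the state vectors along a trajectory."""
    return [sum(column) for column in zip(*trajectory)]

def trajectory_return(weights, trajectory):
    """Return of a trajectory under an assumed linear reward r(s) = weights . s."""
    return sum(w * f for w, f in zip(weights, feature_sum(trajectory)))

def ranking_loss(weights, worse, better):
    """Bradley-Terry style loss: -log sigmoid(return(better) - return(worse))."""
    margin = trajectory_return(weights, better) - trajectory_return(weights, worse)
    # Stable form of log(1 + exp(-margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))

def fit_reward(ranked_pairs, dim, learning_rate=0.1, steps=200):
    """Gradient descent on the ranking loss over (worse, better) trajectory pairs."""
    weights = [0.0] * dim
    for _ in range(steps):
        for worse, better in ranked_pairs:
            diff = [b - w for b, w in zip(feature_sum(better), feature_sum(worse))]
            margin = sum(wt * d for wt, d in zip(weights, diff))
            # Gradient of the loss w.r.t. weights is -(1 - sigmoid(margin)) * diff.
            step = learning_rate * (1.0 - sigmoid(margin))
            weights = [wt + step * d for wt, d in zip(weights, diff)]
    return weights

# Toy data (made up): the "expert" heads toward (1, 0); the other behavior drifts away.
expert = [(0.2, 0.0), (0.6, 0.1), (1.0, 0.0)]
suboptimal = [(0.1, 0.3), (0.0, 0.6), (-0.2, 0.9)]

# A single ranking "suboptimal is worse than expert" is enough to shape the reward here.
learned = fit_reward([(suboptimal, expert)], dim=2)
print("expert return:    ", trajectory_return(learned, expert))
print("suboptimal return:", trajectory_return(learned, suboptimal))
```

In the full game described above, a policy player would then be trained to maximize the learned reward, and its new behaviors would be ranked in turn. This sketch stops at the reward step to keep the ranking idea visible.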

NEWS

Microsoft helps GoodLeaf Farms drive agricultural innovation with data

Vertical indoor farming uses extensive technology to manage production and optimize growing conditions. This includes movement of grow benches, lighting, irrigation, and air and temperature controls. Data and analytics can help vertical farms produce the highest possible yields and quality.

Canadian vertical farm pioneer GoodLeaf Farms has announced a partnership with Microsoft and data and analytics firm Adastra to optimize crop production and quality. GoodLeaf has deployed Microsoft Azure Synapse Analytics and Microsoft Power Platform to utilize the vast amounts of data it collects.

GoodLeaf is also collaborating with Microsoft Research through Project FarmVibes, using GoodLeaf’s data to support research into controlled environment agriculture.

GoodLeaf’s farm in Guelph, Ontario, and two farms currently under construction in Calgary and Montreal use a connected system of cameras and sensors to manage plant seeding, growing mediums, germination, temperature, humidity, nutrients, lighting, and air flow. Data science and analytics help the company grow microgreens and baby greens in Canada year-round, no matter the weather, using a hydroponics system and specialized LED lights.

OPPORTUNITY

Reinforcement Learning Open Source Fest

Proposals are now being accepted for Reinforcement Learning (RL) Open Source Fest 2023, a global online program that introduces students to open-source RL programs and software development. Our goal is to bring together a diverse group of students from around the world to help solve open-source RL problems and advance state-of-the-art research and development. The program produces open-source code written and released to benefit all.

Accepted students will join a four-month research project from May to August 2023, working virtually alongside researchers, data scientists, and engineers on the Microsoft Research New York City Real World Reinforcement Learning team. Students will also receive a $10,000 USD stipend. At the end of the program, students will present their projects online to the Microsoft Research Real World Reinforcement Learning team.

The proposal deadline is Monday, April 3, 2023, at 11:59 PM ET. Learn more and submit your proposal today.