Reinforcement learning, a major machine learning technique, is having a growing impact, producing promising results in areas such as gaming, financial markets, autonomous driving, and robotics. But many challenges and opportunities remain before AI, using these techniques and others, can move beyond solving specific tasks to exhibiting more general intelligence. We in the research community have a long tradition of relying on the more controlled environments of play as benchmarks for this work.

For the Machine Intelligence and Perception group here at Microsoft Research, our sandbox of choice has been Minecraft. Its complex 3D world and flexibility to create a wide variety of game-play scenarios make it ideal for exploring the potential of artificial intelligence, and the platform we’ve built to do so—Project Malmo—has allowed us to capitalize on these strengths.

In Learning to Play: The Multi-Agent Reinforcement Learning in MalmÖ (MARLÖ) Competition, we invited programmers into this digital world to help tackle multi-agent reinforcement learning. This challenge, the second competition using the Project Malmo platform, tasked participants with designing learning agents capable of collaborating with or competing against other agents to complete tasks across three different games within Minecraft.

The competition—co-hosted by Microsoft, Queen Mary University of London, and CrowdAI—drew strong interest from people and teams worldwide. At the end of the five-month submission period in December, we had 133 participants.

Katja Hofmann, Senior Researcher and research lead of Project Malmo, announced the five competition winners at the Applied Machine Learning Days conference in Lausanne, Switzerland, last month.

Katja Hofmann at Applied Machine Learning Days ©samueldevantery.com

And the winners are …

First place was awarded to a team from Bielefeld University’s Cluster of Excellence Center in Cognitive Interactive Technology in Germany.

“What we like about the MARLÖ competition best is that it highlights fundamental problems of general AI, like multitasking behavior in multi-agent 3D environments,” said team supervisor Dr. Andrew Melnik. “The most challenging aspect of the competition was the changes in the appearance of the target objects, as well as environment tilesets and weather and light conditions—rainy and sunny, noon and dawn. It was quite a unique feature of the competition, and we enjoyed tackling the problem.”

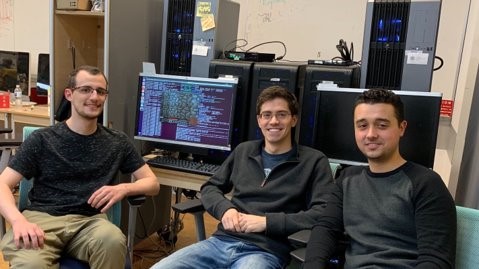

First place winners from left to right: Lennart Bramlage, Andrew Melnik, and Hendric Voß

With their agent, Melnik and team members Lennart Bramlage and Hendric Voß employed an approach called “MimicStates,” a type of imitation learning in which they exploited a collection of mimic actions from real players performing catching, attacking, and defensive action patterns in different environments.

“Leveraging human data is a very promising direction for tackling the hardest AI challenges,” said Hofmann. “The success of this approach suggests that there are many interesting directions to explore. For example, how to best leverage human data to further improve performance.”

First runner-up, from left to right: Rodrigo de Moura Canaan, Michael Cerny Green, and Philip Bontrager

Second runner-up: Linjie Xu

The competition’s first runner-up was the New York University team of Rodrigo de Moura Canaan, Michael Cerny Green, and Philip Bontrager, and the second runner-up was Linjie Xu from Nanchang University, Jiangxi Province, China. Honorable mentions went to Motoki Omura from the University of Tokyo, Japan, and to Timothy Craig Momose, Izumi Karino, and Yuma Suzuki, also from the University of Tokyo.

From the well-established to the novel

Many competitors used variations of well-established deep reinforcement learning approaches, with deep Q-networks (DQN) and proximal policy optimization (PPO) proving particularly popular. Others incorporated more recent innovations, including recurrent world models, and some even developed novel approaches (“Message-Dropout: An Efficient Training Method for Multi-Agent Deep Reinforcement Learning,” published at the 2019 AAAI Conference on Artificial Intelligence).

For future research and competitions on these tasks, we expect that current approaches could improve significantly by combining the imitation learning techniques used by the winning team with reinforcement learning. Such methods would benefit from the prior knowledge captured in human gameplay and then fine-tune the learned behaviors further through reinforcement learning. We believe that competitions like MARLÖ will continue to play a key role in driving innovation in this area.

“Multi-agent reinforcement learning has been picking up traction in the research community over the last couple of years, and what we need right now is a series of ambitious tasks that the community can use to measure our collective progress,” said Sharada Mohanty, a Ph.D. student at École Polytechnique Fédérale de Lausanne, Switzerland, and co-founder of CrowdAI, which co-hosted MARLÖ. “This competition establishes a series of such ambitious tasks that act as good baselines that will catalyze a lot of meaningful progress in multi-agent reinforcement learning over the next few years.”

MARLÖ is part of our ongoing engagement with the multi-agent reinforcement learning community to help further advance general artificial intelligence.

“Generalization across multiple task variants and agents is very hard and nowhere near solved,” said Hofmann. “With the MARLÖ starter kit and competition tasks online, we invite the community to try and tackle this open challenge.”

Try the tutorial, experiment, and keep the conversation going by sending your ideas to malmoadm@microsoft.com. We look forward to hearing from you.