Our mission

We create technology innovations that improve the lives of people with disabilities. As part of this effort, we embrace the concept of universal design, where we aim to bring new experiences to all users with our technologies.

To achieve real impact, we create new applications and services, hardware prototypes, and software libraries, as well as help develop standards for new kinds of user interfaces.

We embrace the spirit of designing not just for the user, but with the user: we design and test our ideas in close collaboration with end users and partners, which helps us better understand and evaluate the impact of new interfaces on the lives of people with disabilities such as ALS.

Core projects

We develop new user experiences that combine innovative research and clever engineering. Our projects are mostly in the areas of eye-controlled or sound-based user interfaces.

Eye-controlled user interfaces

Modern human-computer interfaces are quite flexible. We control our devices via keyboards, touch panels and screens, pointing devices such as computer mice and trackballs, in-air gestures, and our voice. Our devices talk back to us via displays with text and graphics, and through lights, sounds, and synthesized voices.

But what if you don’t have your hands and your voice available? How do you express your needs and thoughts?

That can happen to anyone: you may be eating lunch, with your hands and mouth occupied. If you have a disability such as ALS, you may not be able to move your hands or speak.

In those situations, you may still be able to move your eyes well and under your own control. We can then use eye-tracking devices to capture the motion of your eyes.

Thanks to advances in electronics, several low-cost eye-tracking devices can be connected to low-cost computing devices. Our team has tapped into the opportunity to combine those devices with advanced algorithms, with the aim of making eye-controlled interfaces broadly available.

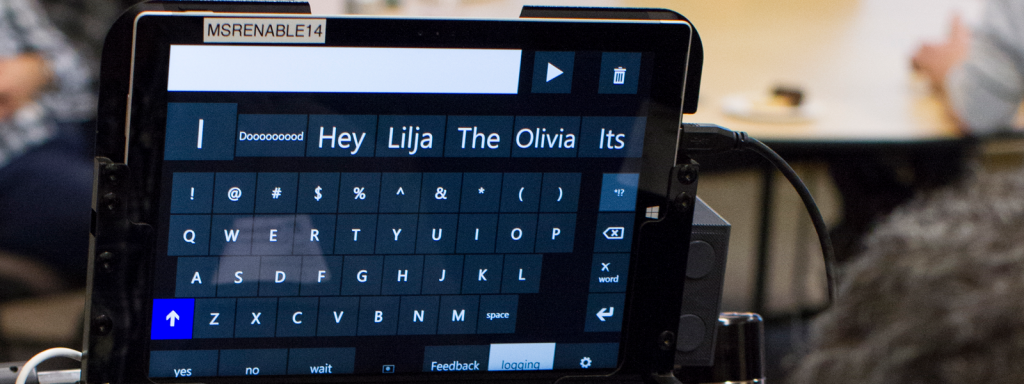

Through our design-with-the-user approach, our team has developed innovative interfaces based on eye tracking, especially for eye typing, enabling people to communicate without using their hands or voice. Examples include our Hands-Free keyboard and a communication prototype for mobile phones in which eye-gesture tracking is performed by the phone camera, with no additional hardware needed. Recently we also published EyeDrive, a virtual joystick that can be controlled by eye tracking. We hope the community will use it to develop many interesting applications, such as our fun collaboration with Team Gleason on the Pilot37 project, which allows users to control an RC car or a drone by eye.

Our Hands-Free Music project aims to restore critical expressive and creative channels for people severely affected by ALS and related disabling conditions, which can isolate people and erect formidable barriers between them and their loved ones and communities.

In collaboration with the Windows development team, we created new eye-tracking interfaces for the operating system, including a new taskbar, an extension of the on-screen keyboard, mouse emulation, and new scrolling interfaces. Together they define the Eye Control capability of Windows, introduced with the Windows Fall Creators Update in the fall of 2017.

Everyone loves to play games, so why not with eye control? Eyes First games are popular games reinvented for an “Eyes First” experience. Playing these games is a great way to get familiar with eye control, learn skills that apply to other eye-gaze-enabled assistive technologies, and simply have some fun.

Sound-based user interfaces

When we walk around, we usually carry a mobile device with GPS capability. Appropriate mapping software can then show us where we are and which points of interest are nearby. That way, we can locate ourselves with more confidence and explore the world around us.

But what if you can’t see your device screen? How do you get information on what’s nearby?

That can happen to anyone: your device may be in a bag and inconvenient to reach. If you have low vision, you may not be able to see the mobile device screen well.

In those situations, if the user has good hearing, we can use sound as a guide. Motivated by the opportunity to develop new tools for people with low vision, we started the Cities Unlocked project a few years ago, in partnership with Guide Dogs UK and Future Cities Catapult. That effort was the basis for our current Soundscape project.

For users who can’t see well, Soundscape can help improve awareness of the points of interest around them. That information helps the user build a mental acoustic picture of the surrounding environment. Combined with mobility tools such as canes and guide dogs, it can help users feel more confident exploring the world around them independently.