News & features

AutoAdapt: Automated domain adaptation for large language models

| Sidharth Sinha, Anson Bastos, Xuchao Zhang, Akshay Nambi, Rujia Wang, and Chetan Bansal

Deploying large language models (LLMs) in real-world, high-stakes settings is harder than it should be. In domains like law, medicine, and cloud incident response, performance and reliability can quickly break down because adapting models to domain-specific requirements is a…

New Future of Work: AI is driving rapid change, uneven benefits

| Jaime Teevan, Sonia Jaffe, Rebecca Janssen, Nancy Baym, Siân Lindley, Bahar Sarrafzadeh, Brent Hecht, Jenna Butler, Jake Hofman, and Sean Rintel

For the past five years, the New Future of Work report has captured how work is changing. This year, the shift feels especially sharp. Previous editions have focused on technology’s role in increasing productivity by automating tasks, accelerating communication, and…

Ideas: Steering AI toward the work future we want

| Jaime Teevan, Jenna Butler, Jake Hofman, and Rebecca Janssen

Microsoft Chief Scientist Jaime Teevan and researchers Jenna Butler, Jake Hofman, and Rebecca Janssen unpack the New Future of Work Report 2025 and explore the ideal AI-driven working world. Plus, is AI a tool or a collaborator? And why the answer matters.

AsgardBench: A benchmark for visually grounded interactive planning

| Andrea Tupini, Lars Liden, Reuben Tan, Yu Wang, and Jianfeng Gao

Imagine a robot tasked with cleaning a kitchen. It needs to observe its environment, decide what to do, and adjust when things don’t go as expected, for example, when the mug it was tasked to wash is already clean, or…

GroundedPlanBench: Spatially grounded long-horizon task planning for robot manipulation

| Sehun Jung, HyunJee Song, Dong-Hee Kim, Reuben Tan, Jianfeng Gao, Yong Jae Lee, and Donghyun Kim

Vision-language models (VLMs) use images and text to plan robot actions, but they still struggle to decide what actions to take and where to take them. Most systems split these decisions into two steps: a VLM generates a plan in…

Systematic debugging for AI agents: Introducing the AgentRx framework

| Shraddha Barke, Arnav Goyal, Alind Khare, and Chetan Bansal

As AI agents transition from simple chatbots to autonomous systems capable of managing cloud incidents, navigating complex web interfaces, and executing multi-step API workflows, a new challenge has emerged: transparency. When a human makes a mistake, we can usually trace…

In the news | National Academy of Engineering

Doug Burger elected to National Academy of Engineering

Academy membership honors individuals who have made outstanding contributions to engineering research, practice, or education. Burger was elected for accelerating cloud-scale computing and networking infrastructures with field-programmable systems.

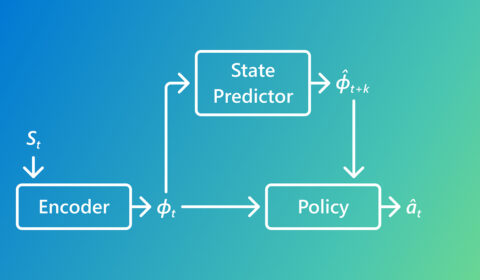

Rethinking imitation learning with Predictive Inverse Dynamics Models

| Pallavi Choudhury, Lukas Schäfer, Chris Lovett, Katja Hofmann, and Sergio Valcarcel Macua

This research looks at why Predictive Inverse Dynamics Models (PIDMs) often outperform standard Behavior Cloning in imitation learning. By using simple predictions of what happens next, PIDMs reduce ambiguity and learn from far fewer demonstrations.

UniRG: Scaling medical imaging report generation with multimodal reinforcement learning

| Sheng Zhang, Flora Liu, Guanghui Qin, Mu Wei, and Hoifung Poon

AI can help generate medical image reports, but today’s models struggle with varying reporting schemes. Learn how UniRG uses reinforcement learning to boost the performance of medical vision-language models.