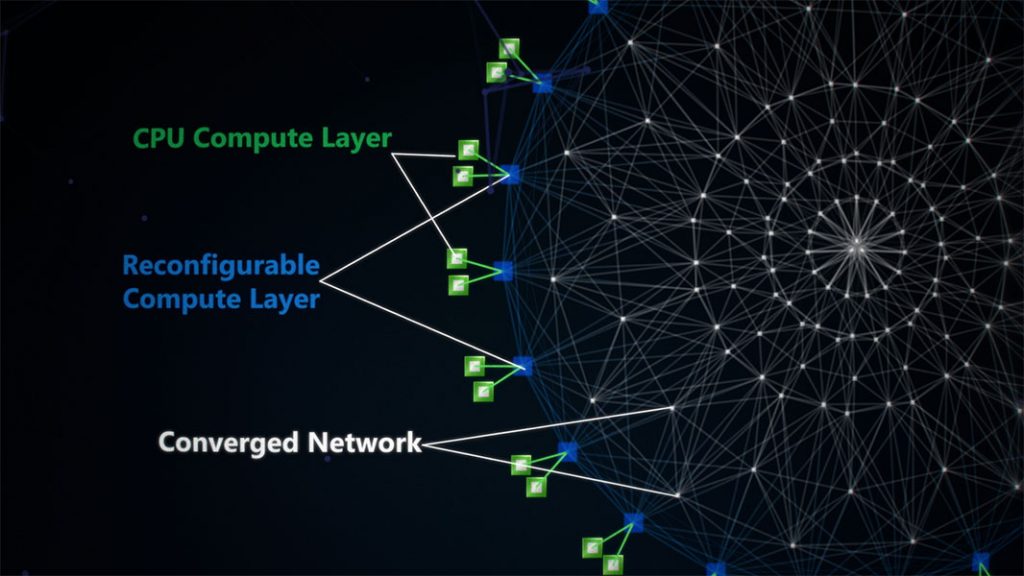

Project Catapult is the code name for a Microsoft Research (MSR) enterprise-level initiative that is transforming cloud computing by augmenting CPUs with an interconnected and configurable compute layer composed of programmable silicon.

Project Brainwave leverages Project Catapult to enable real-time AI

Try the first hardware accelerated model released May 7, 2018

Project Catapult is transforming cloud computing

We are living in an era where information grows exponentially and creates the need for massive computing power to process that information. At the same time, advances in silicon fabrication technology are approaching theoretical limits, and Moore’s Law has run its course. Chip performance improvements no longer keep pace with the needs of cutting-edge, computationally expensive workloads like software-defined networking (SDN) and artificial intelligence (AI). To create a faster, more intelligent cloud that keeps up with growing appetites for computing power, datacenters need to add other processors distinctly suited for critical workloads.

FPGAs offer a unique combination of speed and flexibility

Since the earliest days of cloud computing, we have answered the need for more computing power by innovating with special processors that give CPUs a boost. Project Catapult began in 2010 when a small team, led by Doug Burger and Derek Chiou, anticipated the paradigm shift to post-CPU technologies. We began exploring alternative architectures and specialized hardware such as graphics processing units (GPUs), field-programmable gate arrays (FPGAs), and custom application-specific integrated circuits (ASICs). We soon realized that FPGAs offer a unique combination of speed, programmability, and flexibility ideal for delivering cutting-edge performance and keeping pace with rapid innovation. Though FPGAs have been in use for decades, Microsoft Research (MSR) pioneered their use in cloud computing. MSR proved that FPGAs could deliver efficiency and performance without the cost, complexity, and risk of developing custom ASICs.

FPGAs can perform line-rate computation

Project Catapult’s innovative board-level architecture is highly flexible. The FPGA can act as a local compute accelerator, an inline processor, or a remote accelerator for distributed computing. In this design, the FPGA sits between the datacenter’s top-of-rack (ToR) network switches and the server’s network interface controller (NIC). As a result, all network traffic is routed through the FPGA, which can perform line-rate computation on even high-bandwidth network flows.
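The placement described above can be illustrated with a small software model. This is only a sketch under stated assumptions, not Catapult's actual implementation: the names `Packet`, `BumpInTheWireFpga`, `offload`, and `forward` are hypothetical, and a real FPGA processes traffic in hardware at line rate rather than in a Python loop. The point it shows is that every packet crosses the FPGA on its way from the ToR switch to the NIC, so the FPGA can transform selected flows inline and pass the rest through untouched.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Packet:
    flow_id: str    # hypothetical flow label, used only for this illustration
    payload: bytes


class BumpInTheWireFpga:
    """Toy model of an FPGA placed between the ToR switch and the server NIC."""

    def __init__(self) -> None:
        # Maps a flow label to the function applied to that flow's payloads.
        self.handlers: Dict[str, Callable[[bytes], bytes]] = {}
        self.packets_seen = 0

    def offload(self, flow_id: str, handler: Callable[[bytes], bytes]) -> None:
        """Register inline processing for one flow (hypothetical API)."""
        self.handlers[flow_id] = handler

    def forward(self, packet: Packet) -> Packet:
        """All traffic passes through here; only registered flows are changed."""
        self.packets_seen += 1
        handler = self.handlers.get(packet.flow_id)
        if handler is None:
            return packet  # plain pass-through toward the NIC
        return Packet(packet.flow_id, handler(packet.payload))


def switch_to_nic(fpga: BumpInTheWireFpga, packets: List[Packet]) -> List[Packet]:
    """Deliver packets from the switch to the NIC by way of the FPGA."""
    return [fpga.forward(p) for p in packets]


fpga = BumpInTheWireFpga()
fpga.offload("accelerated", bytes.upper)  # stand-in for a real inline computation
delivered = switch_to_nic(
    fpga,
    [Packet("accelerated", b"abc"), Packet("ordinary", b"xyz")],
)
```

In this model the "accelerated" flow is rewritten on the way through while the "ordinary" flow arrives unchanged, yet both are counted by the FPGA.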

The first hyperscale supercomputer

Today, nearly every new server in Microsoft datacenters integrates an FPGA into a unique distributed architecture, which creates an interconnected and configurable compute layer that extends the CPU compute layer. Using this acceleration fabric, we can deploy distributed hardware microservices (HWMS) with the flexibility to harness a scalable number of FPGAs, from one to thousands. Conversely, cloud-scale applications can leverage a scalable number of these microservices, with no knowledge of the underlying hardware. By coupling this approach with nearly a million Intel FPGAs deployed in our datacenters, we have built the world’s first hyperscale supercomputer, which can run machine learning and deep learning algorithms with an unmatched combination of speed, efficiency, and scale.
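The hardware microservice idea can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `FpgaPool`, `HardwareMicroservice`, and `ServiceRegistry` are hypothetical names, round-robin dispatch is a placeholder policy, and the actual Catapult scheduling and communication mechanisms are not described in this text. The sketch only shows the two properties named above: a service can be backed by any number of FPGAs, and callers address it by name without knowing which hardware serves the request.

```python
from itertools import cycle
from typing import Any, Callable, Dict, List


class FpgaPool:
    """Hypothetical pool of FPGAs, identified by integer IDs."""

    def __init__(self, size: int) -> None:
        self.free: List[int] = list(range(size))

    def allocate(self, count: int) -> List[int]:
        if count < 1 or count > len(self.free):
            raise ValueError("invalid FPGA count for this pool")
        taken, self.free = self.free[:count], self.free[count:]
        return taken


class HardwareMicroservice:
    """A named function backed by one or more FPGAs (round-robin dispatch)."""

    def __init__(self, name: str, fn: Callable[[Any], Any], fpga_ids: List[int]) -> None:
        self.name = name
        self.fn = fn
        self.calls_per_fpga: Dict[int, int] = {i: 0 for i in fpga_ids}
        self._next_fpga = cycle(fpga_ids)

    def call(self, request: Any) -> Any:
        fpga_id = next(self._next_fpga)      # choose the backing FPGA
        self.calls_per_fpga[fpga_id] += 1
        return self.fn(request)              # stand-in for the accelerated work


class ServiceRegistry:
    """Lets applications invoke services by name, hiding the hardware."""

    def __init__(self, pool: FpgaPool) -> None:
        self.pool = pool
        self.services: Dict[str, HardwareMicroservice] = {}

    def deploy(self, name: str, fn: Callable[[Any], Any], fpga_count: int) -> None:
        self.services[name] = HardwareMicroservice(name, fn, self.pool.allocate(fpga_count))

    def invoke(self, name: str, request: Any) -> Any:
        return self.services[name].call(request)


registry = ServiceRegistry(FpgaPool(size=8))
registry.deploy("rank", lambda x: x * 2, fpga_count=3)
results = [registry.invoke("rank", i) for i in range(6)]
```

Scaling the service up or down changes only the `fpga_count` passed to `deploy`; the calling code in the last line stays the same.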

Leading datacenter transformation by using programmable hardware

Through Project Catapult, Microsoft is leading the industry’s datacenter transformation by using programmable hardware. We were the first to prove the value of FPGAs for cloud computing, first to deploy them at cloud scale, and, with Bing, first to use them to accelerate enterprise-level applications.

Project Brainwave to enable real-time AI

Our leadership in accelerated networking has delivered the world’s fastest cloud network. Today, Project Brainwave is leveraging Project Catapult to enable real-time AI, with blazing-fast inferencing performance at a remarkably affordable cost. A growing team of MSR researchers and engineers, in very close partnership with engineering groups such as Bing, Azure Machine Learning, Azure Networking, Azure Cloud Server Infrastructure (CSI), and Azure Storage, continues to push the boundaries of accelerated cloud computing.

Milestones

Project Catapult’s waves of innovation will continue.

-

2010

MSR demonstrated the first proof of concept to Bing leadership, with a proposal to use FPGAs at scale to accelerate Web search.

-

2011

MSR researchers and Bing engineers developed the first prototype; identifying and accelerating computationally expensive operations in Bing’s IndexServe engine.

-

2012

Project Catapult’s scale pilot of 1,632 FPGA-enabled servers was deployed to a datacenter, by using an early architecture with a custom secondary network.

-

2013

Results of the pilot demonstrated a dramatic improvement in search latency, running Bing decision-tree algorithms 40 times faster than CPUs alone, and proved the potential to speed up search even while reducing the number of servers. Bing leadership committed to putting Project Catapult in production.

-

2014

The Catapult v2 architecture introduced the breakthrough of placing FPGAs as a “bump in the wire” on the network path. Work began on accelerating software-designed networking for Azure. Project Catapult’s seminal paper was published.

-

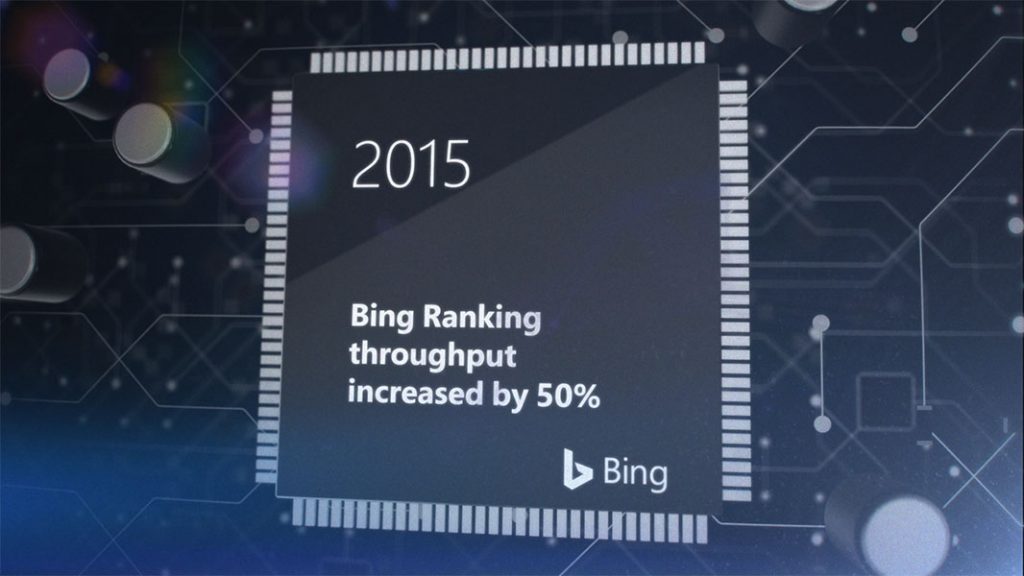

2015

FPGA-enabled servers were deployed at scale in Bing and Azure datacenters, and Bing first used FPGAs in production to accelerate search ranking. This enabled a 50 percent increase in throughput, or a 25 percent reduction in latency.

-

2016

Azure launched Accelerated Networking, using FPGAs to enable the world’s fastest cloud network. FPGAs became a default part of most Azure and Bing server SKUs. MSR began Project Brainwave, focused on accelerating AI and deep learning.

-

2017

MSR and Bing launched hardware microservices, enabling one web-scale service to leverage multiple FPGA-accelerated applications distributed across a datacenter. Bing deployed the first FPGA-accelerated Deep Neural Network (DNN). MSR demonstrated that FPGAs can enable real-time AI, beating GPUs in ultra-low latency, even without batching inference requests.

-

2018

Bing and Azure deployed new multi-FPGA appliances into datacenters, shifting the ratio of computing power between CPUs and FPGAs, with multiple Intel Arria 10 FPGAs in each server. MSR, Bing, and Azure Machine Learning partnered to bring Project Brainwave to production for both Microsoft engineering groups and third-party customers. Azure Machine Learning launched the preview of Hardware Accelerated Models, powered by Project Brainwave, delivering ultra-fast DNN performance with ResNet-50, at remarkably low cost—only 21 cents per million images during preview.
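To put the 2018 preview price in perspective, the arithmetic can be worked through in a short Python sketch. The only figure taken from the text is 21 cents per million images; the function name `preview_cost_usd` is hypothetical, and the assumption that cost scales linearly with image count (no minimums, tiers, or other charges) is ours, not a statement about actual Azure billing.

```python
from decimal import Decimal

# Preview price quoted in the text: 21 cents (USD 0.21) per million images.
PREVIEW_PRICE_PER_MILLION_IMAGES = Decimal("0.21")


def preview_cost_usd(image_count: int) -> Decimal:
    """Estimated cost in USD, assuming purely linear per-image pricing."""
    if image_count < 0:
        raise ValueError("image_count must be non-negative")
    return PREVIEW_PRICE_PER_MILLION_IMAGES * image_count / Decimal(1_000_000)


cost_per_image = preview_cost_usd(1)             # a tiny fraction of a cent
cost_for_50_million = preview_cost_usd(50_000_000)
```

Under that linear assumption, a single image costs 0.000021 cents, and classifying 50 million images costs USD 10.50.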

This is still the beginning. Project Brainwave is gaining traction across the company, with accelerated models in development for text, speech, vision, and other areas. The company-wide Project Catapult virtual team continues to innovate in deep learning, networking, storage, and other areas.