CLAW: A Multifunctional Handheld Haptic Controller for Grasping, Touching, and Triggering in Virtual Reality

- Inrak Choi

- Eyal Ofek

- Hrvoje Benko

- Mike Sinclair

- Christian Holz

CHI 2018

Published by ACM

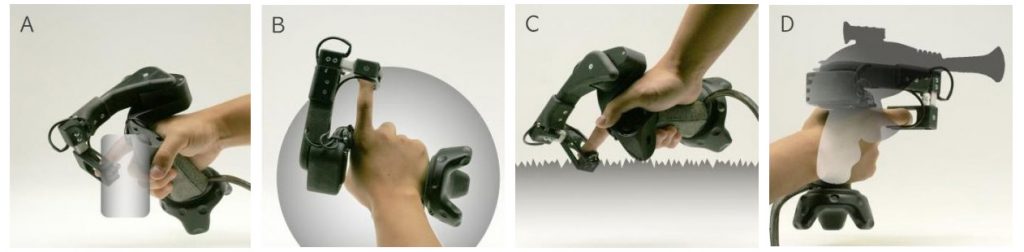

The CLAW VR haptic controller provides articulated movement and force feedback actuation to the user’s index finger, which allows for convincing haptic rendering of: (a) grasping, (b) touching, (c) virtual textures, and (d) triggering.

CLAW is a handheld virtual reality controller that augments the typical controller functionality with force feedback and actuated movement to the index finger. Our controller enables three distinct interactions (grasping virtual objects, touching virtual surfaces, and triggering) and changes its corresponding haptic rendering by sensing the differences in the user’s grasp. A servo motor coupled with a force sensor renders controllable forces to the index finger during grasping and touching. Using position tracking, a voice coil actuator at the index fingertip generates vibrations for various textures synchronized with finger movement. CLAW also supports haptic force feedback in the trigger mode when the user holds a gun. We describe the design considerations for CLAW and evaluate its performance through two user studies. The first study obtained qualitative user feedback on the naturalness, effectiveness, and comfort when using the device. The second study investigated the ease of the transition between grasping and touching when using our device.

CLAW: A Multifunctional Handheld Haptic Controller for Grasping, Touching, and Triggering in VR

CLAW extends the concept of a VR controller to a multifunctional haptic tool, using a single motor. At first glance, it looks very similar to your standard VR controller. A closer look reveals a unique motorized arm that rotates the index finger relative to the palm to simulate force feedback.

CLAW acts as a multi-purpose controller: it provides the expected functionality of VR controllers (thumb buttons and joysticks, 6DOF control, index finger trigger) and adds a variety of haptic renderings for the most commonly expected hand interactions: grasping objects, touching virtual surfaces, and receiving force feedback. A unique characteristic of CLAW is its ability to adapt its haptic rendering by sensing differences in the user’s grasp and the situational context of the virtual scene.

When the user holds the thumb against the tip of the index finger, the device simulates a grasping operation: closing the fingers around a virtual object is met with a resistive force, creating the sense that the object lies between the index finger and the thumb. A force sensor embedded in the index finger rest, combined with changes to the motor’s response profile, lets the device render objects of different materials, from a fully rigid wooden block to an elastic sponge.

If the user holds the thumb away from the grasp pose, for example on the handle, shaping the hand into a pointing gesture, the controller delivers touch sensations. Moving the fingertip toward the surface of a virtual object generates a resistance that pushes the finger back and prevents it from penetrating the virtual surface. Furthermore, a voice coil mounted under the tip of the index finger delivers small vibrations that convey surface texture as the finger slides along a virtual surface. Sensing the force applied by the user can also support interaction with virtual objects: when pushing a slider, the experienced friction can signal preferable states, and pressure can change the attributes of a paintbrush or pen in a drawing program.
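The grasp-dependent behavior described above can be illustrated with a minimal Python sketch. It is not the CLAW firmware: the names `ControllerState`, `select_mode`, and `resistive_force`, the priority given to the trigger mode, and the clamped linear-spring force model are all assumptions made for illustration, as are the stiffness values.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    GRASP = "grasp"
    TOUCH = "touch"
    TRIGGER = "trigger"


@dataclass
class ControllerState:
    # Hypothetical sensor/context flags, not names from the paper.
    thumb_on_fingertip: bool  # thumb rests against the index fingertip
    holding_gun: bool         # scene context: the user holds a virtual gun


def select_mode(state: ControllerState) -> Mode:
    """Pick a haptic mode from grasp pose and scene context.

    Assumption: the trigger mode takes priority whenever a gun is held.
    """
    if state.holding_gun:
        return Mode.TRIGGER
    if state.thumb_on_fingertip:
        return Mode.GRASP
    return Mode.TOUCH


def resistive_force(penetration_m: float,
                    stiffness_n_per_m: float,
                    max_force_n: float) -> float:
    """Assumed spring model: force grows with penetration depth, clamped
    to what the actuator can deliver. A high stiffness feels rigid, a low
    stiffness feels spongy."""
    if penetration_m <= 0.0:
        return 0.0  # finger has not reached the virtual surface
    return min(stiffness_n_per_m * penetration_m, max_force_n)


if __name__ == "__main__":
    state = ControllerState(thumb_on_fingertip=True, holding_gun=False)
    print(select_mode(state))
    # Illustrative stiffness values only: "rigid" vs "soft" object.
    print(resistive_force(0.01, 2000.0, 10.0))
    print(resistive_force(0.01, 100.0, 10.0))
```

Separating mode selection from force computation mirrors the description: the same actuator is reused across interactions, and only the response profile changes with the sensed grasp.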

CLAW was realized with the aid of Inrak Choi, an intern from Stanford University.