Advancing end-to-end automatic speech recognition and beyond

- Jinyu Li | International Symposium on Chinese Spoken Language Processing

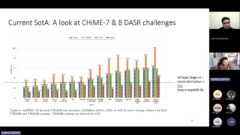

The speech community is transitioning from hybrid modeling to end-to-end (E2E) modeling for automatic speech recognition (ASR). While E2E models achieved state-of-the-art results in most benchmarks in terms of ASR accuracy, there are lots of practical factors that affect the production model deployment decision, including low-latency streaming, leveraging text-only data, and handling overlapped speech etc. Without providing excellent solutions to all these factors, it is hard for E2E models to be widely commercialized.

In this talk, I will overview the recent advances in E2E models with the focus on technologies addressing those challenges from the perspective of industry. To design a high-accuracy low-latency E2E model, a masking strategy was introduced into Transformer Transducer. I will discuss technologies which can leverage text-only data for general model training via pretraining and adaptation to a new domain via augmentation and factorization. Then, I will extend E2E modeling for streaming multi-talker ASR. I will also show how we go beyond ASR by extending the learning in E2E ASR into a new area like speech translation and build high-quality E2E speech translation models even without any human labeled speech translation data. Finally, I will conclude the talk with some new research opportunities we may work on.

Watch Next

-

-

Beyond Swahili: Designing Inclusive AI for Bantu Languages

- Alfred Malengo Kondoro

-

-

-

-

-

Evaluating the Cultural Relevance of AI Models and Products: Learnings on Maternal Health ASR, Data Augmentation and User Testing Methods

- Oche Ankeli,

- Ertony Bashil,

- Dhananjay Balakrishnan

-

-

-

Microsoft Research India - The lab culture

- P. Anandan,

- Indrani Medhi Thies,

- B. Ashok