“If you think about the world that we’re in now, software plays a key role in our lives – in everything we do. And at the core of solving big problems and fundamental challenges is the ability to use software to attack the problem and make it better. I work in the field of programming languages, and we go to work thinking ‘what do we do to make software better?’ Because if we make software better, then we can make all the things that depend on software better.”

Ben Zorn, Partner Researcher, Microsoft Research

Among the key aims of computer science is to advance the tools and systems that software engineers use every day – applying the latest developments in everything from formal methods to artificial intelligence to make software easier to write, understand, test, document and manage. This work can help developers to manage complexity and risk, stay abreast of new developments, and even tackle new paradigms such as quantum computing.

This collection highlights some of the many projects and initiatives across Microsoft Research that aim to advance the field of software development: creating new programming languages, building tools and systems for better software creation, testing, operation and documentation, and advancing program synthesis to automate and streamline programming tasks.

Explore more

Programming Languages

Bosque

Spotlight: AI-POWERED EXPERIENCE

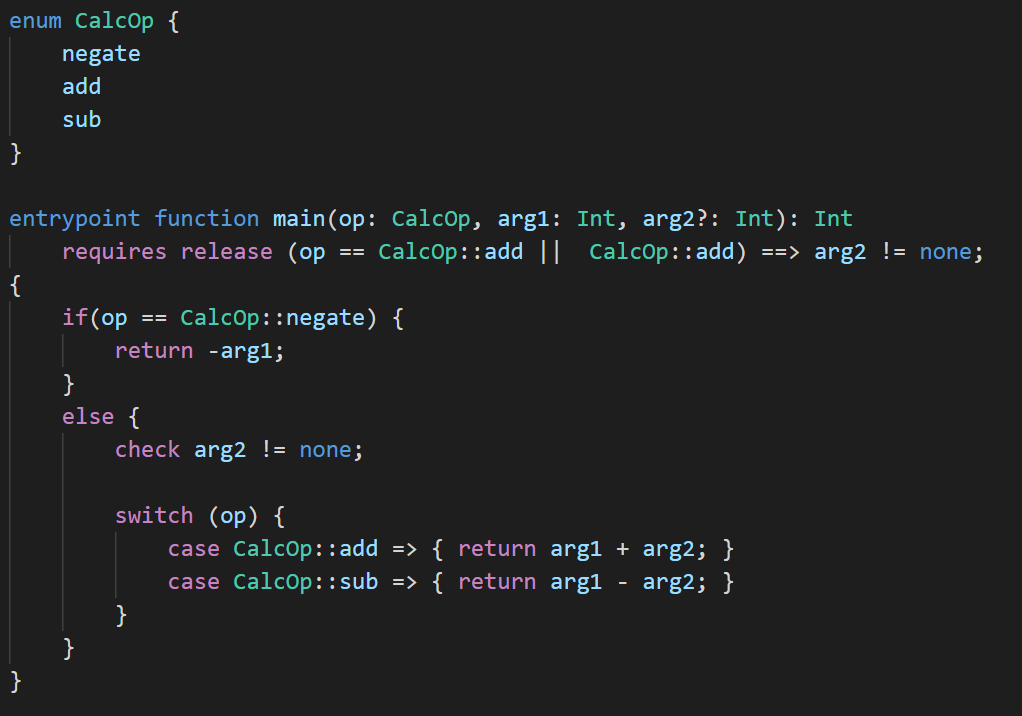

The Bosque programming language is an experiment in regularized programming language design for a machine-assisted rapid and reliable software development lifecycle. It is a ground-up language and tooling co-design effort focused on investigating the theoretical and practical implications of:

- Explicitly designing a code intermediate representation language (or bytecode) that enables deep automated code reasoning and the deployment of next-generation development tools, compilers, and runtime systems.

- Leveraging the power of the intermediate representation to provide a programming language that is both easily accessible to modern developers and that provides a rich set of useful language features for developing high reliability & high performance applications.

- Taking a cloud-development-first perspective on programming to address emerging challenges as we move into a distributed cloud development model based around microservices, serverless, and RESTful architectures.

Explore more

F*

F* is a general-purpose functional programming language with effects aimed at program verification. It puts together the automation of an SMT-backed deductive verification tool with the expressive power of a proof assistant based on dependent types. After verification, F* programs can be extracted to efficient OCaml, F#, C, WASM, or ASM code. This enables verifying the functional correctness and security of realistic applications. The main ongoing use case of F* is building a verified, drop-in replacement for the whole HTTPS stack in Project Everest, a multi-year collaborative effort focused on building a verified, secure communications stack designed to improve the security of HTTPS, a key internet safeguard. This includes verified implementations of TLS 1.2 and 1.3 and of the underlying cryptographic primitives.

Explore more

Excel LAMBDA

LAMBDA allows users to define their own custom functions using Excel’s formula language. Excel already allows users to define custom functions, but only by writing them in an entirely different language such as JavaScript (opens in new tab). In contrast, LAMBDA allows users to define a custom function in Excel’s own formula language. Moreover, one function can call another, so there is no limit to the power they can deploy with a single function call. The introduction of LAMBDA makes the Excel formula language Turing Complete (opens in new tab).

Explore more

P

P is a state machine-based programming language for modeling and specifying complex distributed systems. P allows the programmer to specify the system as a collection of interacting state machines, which communicate with each other using events. P unifies modeling, programming, and testing into one activity for the programmer. Not only can a P program be compiled into executable code, but it can also be systematically tested using model checking. Among other use cases, developers have used P for programming safe robotics systems.

Explore more

Q#

Q# is a domain-specific programming language designed for quantum application development. It is a high-level language that can express the full range of current and future quantum applications. Q# allows users to build hardware agnostic, reusable components intended for execution on a quantum processor. For debugging and development purposes, Q# programs can be executed on a simulator.

Explore more

Development, testing, documentation and operation

CodeBERT

CodeBERT is a bimodal pre-trained model for programming language (PL) and natural language (NL). CodeBERT learns general-purpose representations that support downstream NL-PL applications such as natural language codesearch, code documentation generation, etc. CodeBERT is developed with transformer-based neural architecture, and trained with a hybrid objective function that incorporates the pre-training task of replaced token detection, which is to detect plausible alternatives sampled from generators. This enables us to utilize both bimodal data of NL-PL pairs and unimodal data, where the former provides input tokens for model training while the latter helps to learn better generators.

CodeXGLUE

CodeXGLUE is a benchmark dataset and open challenge for code intelligence. It includes a collection of code intelligence tasks and a platform for model evaluation and comparison. CodeXGLUE stands for General Language Understanding Evaluation benchmark for code. It includes 14 datasets for 10 diversified code intelligence tasks covering the following scenarios:

- code-code (clone detection, defect detection, cloze test, code completion, code refinement, and code-to-code translation)

- text-code (natural language code search, text-to-code generation)

- code-text (code summarization)

- text-text (documentation translation)

Explore more

CoCoGUM

Code summaries are short natural language (NL) descriptions of code snippets that help developers better understand and maintain source code. Due to the pivotal role of code summaries in software development and maintenance, there is a surge of works on automatic code summarization to reduce the heavy burdens of developers. However, contemporary approaches only leverage the information within the boundary of the method being summarized (i.e., local context), and ignore that using broader context could assist with code summarization.

In this paper, researchers explore two global context information, namely intra-class and inter-class context information, and propose the model CoCoGUM: Contextual Code Summarization with Multi-Relational Graph Neural Networks on UMLs. CoCoGUM first incorporates class names as the intra-class context, which is further fed to a Transformer-based sentence embedding model to extract the class lexical embeddings. Then, relevant Unified Modeling Language (UML) class diagrams are extracted as inter-class context and we use a Multi-Relational Graph Neural Network (MR-GNN) to encode the class relational embeddings. Class lexical embeddings and class relational embeddings, together with the outputs from code token encoder and AST encoder, are passed to the decoder armed with a two-level attention mechanism to generate high-quality context-aware code summaries.

Coyote

Mission-critical cloud services require more than 99.9 percent uptime—developers face extreme challenges in this unpredictable, high-stakes environment. Coyote provides developers with a programming library and tools for confidently building reliable asynchronous software on the .NET platform.

Coyote allows developers to express the high-level asynchronous design directly in the code. It automatically finds deep non-deterministic safety and liveness bugs through intelligent systematic testing; and reproduces these non-deterministic bugs, facilitating easier debugging and quick fixes. It also supports both actor- and task-based programming.

Coyote is being used by several teams in Azure (opens in new tab) to build and test their distributed services.

Explore more

Developer Velocity Lab

The Developer Velocity Lab’s mission is to discover, improve and amplify developer work and well-being. It does this through socio-technical investigations in the following focus areas:

- Productivity: Investigating ways to measure and improve developer productivity, so we can help developers do their work better, faster, smarter and more securely. This includes investigations of low-code, no-code and work at the intersection of code, ML and AI.

- Community: Studying the ways that people communicate, collaborate, share knowledge and build communities when they build software. An important aspect of this is making all kinds of software development more accessible, inclusive and sustainable.

- Well-being: Investigating the intersections of happiness, satisfaction and personal value with software development activities, so we can find ways to make development more fun, enjoyable and sustainable for individuals.

EverCrypt

EverCrypt, part of Project Everest, is a no-excuses, industrial-grade, fully verified cryptographic provider. EverCrypt provides the same convenience and performance as popular existing cryptographic libraries. Thanks to formal verification, EverCrypt eliminates many of the bugs that leave protocols and applications vulnerable. Usable by verified and unverified clients alike, EverCrypt emphasizes both multiplatform support and high performance. Parts of EverCrypt have been integrated in Windows, the Linux Kernel, Firefox, the Wireguard VPN and the Tezos blockchain.

Explore more

EverParse

EverParse is a framework that automatically produces high-performance, formally proven C code for parsing binary messages. EverParse is now being used to ensure that certain network virtualization components in the Windows kernel correctly parse attacker-controlled inputs. Network packets are handled first by EverParse code that is proven to correctly parse and accept only well-formed messages, rejecting potentially malicious inputs before they reach the rest of the system.

Explore more

Narya

When a failure occurs in production systems, the highest priority is to quickly mitigate it. Despite its importance, failure mitigation is done in a reactive and ad-hoc way: taking some fixed actions only after a severe symptom is observed. For cloud systems, such a strategy is inadequate. NARYA is a preventive and adaptive failure mitigation service that is integrated in a production cloud, Microsoft Azure’s compute platform. Narya predicts imminent host failures based on multi-layer system signals and then decides smart mitigation actions to avert VM failures. Narya’s decision engine takes a novel online experimentation approach to continually explore the best mitigation action. Narya further enhances the adaptive decision capability through reinforcement learning.

Explore more

Network Verification

Networks need to run reliably, efficiently, and without users noticing any problems, even as they grow. Keeping networks tuned this way requires the development of tools that improve the functioning of large-scale datacenter networks. The Network Verification project investigates a range of network verification tools that help network operators and architects design, operate, maintain, troubleshoot, and report on their large networks.

Specifically, the Network Verification project explores three sub-areas:

- Data-plane verification: Because networks forward packets according to their data plane, verification tools need to have data-plane intelligence built in, for pre- and post-deployment verification and bug detection.

- Control-plane verification: Networks generate routing tables through protocols such as BGP (border gateway protocol). To analyze networks before they go into production, they need to be verified at this, the control-plane level.

- Emulation: With emulation, network designers can find bugs in firmware and models; network operators can be trained before they are working on production systems. The goal of this product is to enable highly scalable, high-fidelity network emulation for those applications.

RESTler

RESTler is the first stateful REST API fuzzing tool for automatically testing and finding security and reliability bugs in cloud/web services through their REST APIs. Given an OpenAPI/Swagger specification of a cloud/web service REST API, RESTler automatically generates and executes tests that exercise the service through its REST API—no prerecorded REST API traffic or preexisting tests are needed. RESTler intelligently infers dependencies among request types from the API specification, and, during testing, it checks for specific classes of bugs and dynamically learns from prior service responses. This intelligence allows RESTler to explore deeper service states reachable only through specific request sequences and to find more bugs.

Explore more

Software Analytics

A huge wealth of various data exists in software lifecycle, including source code, feature specifications, bug reports, test cases, execution traces/logs, and real-world user feedback, etc. Data plays an essential role in modern software development, because hidden in the data is information about the quality of software and services as well as the dynamics of software development. With various analytical and computing technologies, such as pattern recognition, machine learning, data mining, information visualization and large-scale data computing & processing, software analytics is to enable software practitioners to perform effective and efficient data exploration and analysis in order to obtain insightful and actionable information for data-driven tasks in engineering software and services.

The mission of the Software Analytics Group at Microsoft Research Asia is to advance the state of the art in the software analytics area; and utilize our technologies to help improve the quality of software and services as well as the development productivity for both Microsoft and software industry.

Program synthesis

Blue-Pencil

Traditional program synthesis systems have modal UIs (i.e., users enter a special mode to give demonstration/examples), which interrupt users’ workflow and require users to have knowledge about the systems to invoke them.

Blue-Pencil aims at developing modeless program synthesis: systems that do not require users to explicitly enter a special mode to give demonstration or examples. Instead, a modeless program synthesis system observes what task a user is doing, learns how to automate the task from those observations, and subsequently assists the user by automating the remaining part of the task.

Modeless program synthesis is the main technology behind Visual Studio IntelliCode Suggestions (opens in new tab).

Explore more

Deep Program Understanding

The Deep Program Understanding project aims to teach machines to understand complex algorithms, combining methods from the programming languages, software engineering and the machine learning communities.

Learning to Understand Programs: Building “smart” software engineering tools requires learning to analyze and understand existing code and related artefacts such as documentation and online resources (e.g. StackOverflow). One of our primary concerns is the integration of standard static analysis methods with machine learning methods to create learning-based program analyses that can be used within software engineering tools. Such tools can then be used to find bugs, automatically retrieve or produce relevant documentation, or verify programs.

Learning to Generate Programs: A core problem of machine learning is to learn algorithms that explain observed behaviour. This can take several forms, such as program synthesis from examples, in which an interpretable program matching given input/output pairs has to be produced; or alternatively programming by demonstration, in which a system has to learn to mimic sequences of actions.

Advancing the Machine Learning Frontier: Structured data such as programs represent a challenge for machine learning methods. The combination of domain constraints, known semantics and complex structure requires new machine learning methods and techniques. Our focus in this area is the analysis and generation of graphs, for which we have developed novel neural network architectures and generative procedures.

Predictive Program Synthesis

Program synthesis technologies help users easily automate tasks that would otherwise require significant manual effort or programming skills. For instance, programming-by-example or natural language programming approaches allow the user to express intent by giving examples or natural language descriptions of the task, from which the system can synthesize a program in a formal programming language to complete the task. In this project, we are exploring the novel notion of predictive program synthesis, which is based on the idea that, in some scenarios, we can automatically suggest useful programs to the user without any interaction or intent specification from the user, based entirely on the context.

Common examples of such scenarios include extraction of data from unstructured or semi-structured sources such as text files or webpages. We have developed technologies for automatically synthesizing data extraction scripts for such applications and are also exploring other applications of predictive synthesis such as automatically suggesting improvements to code authored by developers in the IDE.

Predictive synthesis is the technology being used for the new web data extraction feature (opens in new tab) in Microsoft Power BI.

PROSE framework

Microsoft PROSE is a framework of technologies for programming by examples: automatic generation of programs from input-output examples.

The core component of the PROSE SDK is its program synthesis framework for custom domain-specific languages (DSLs). It allows users to define a DSL that describes a typical space of tasks in their application domain, and automatically provides parsing, execution, and synthesis technologies for this DSL. Text transformation (opens in new tab) and text extraction (opens in new tab) DSLs are programming-by-example technologies that have been developed on top of the PROSE core synthesis framework.