Member of Technical Staff, AI Safety Post-Training – MAI Super Intelligence Team

As a Member of Technical Staff, AI Safety Post-Training, you will work to develop and implement cutting-edge safety methodologies for post-training large language models with agentic and reasoning capabilities that are served to millions of…

Publicly verifiable elections

Microsoft’s free, open-source ElectionGuard tools enable voters to verify their votes were accurately counted without compromising privacy or trusting election equipment or personnel. New research eliminates the need for cryptographic keys, making the process far…

Research Intern – Cryptography

The researchers and engineers in the Cryptography team pursue challenging research that has an impact at Microsoft and on the world at large. Most recently, we have focused on cryptographic identity, formally verified cryptography, encrypted communications,…

Reducing privacy leaks in AI: Two approaches to contextual integrity

New research explores two ways to give AI agents stronger privacy safeguards grounded in contextual integrity. One adds lightweight, inference-time checks; the other builds contextual awareness directly into models through reasoning and RL.

CHERI-Lite for Memory Safety Exploit Mitigation

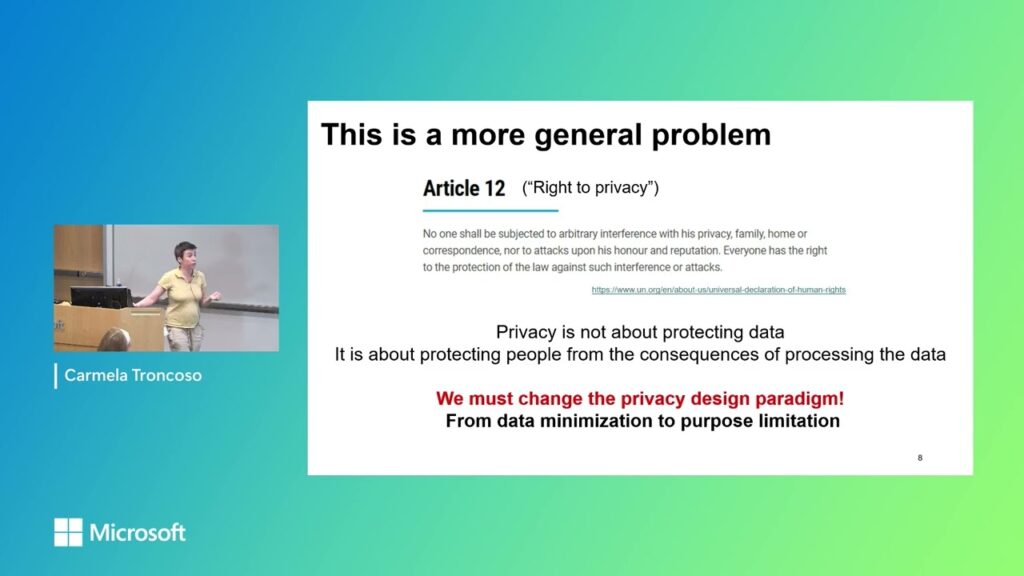

Designing safe digital systems for the humanitarian sector

Speaker: Carmela Troncoso. Host: Betül Durak. In this talk, we give an overview of our collaboration with the International Committee of the Red Cross, in which we help them digitalize their aid distribution process without increasing risks…

BlueCodeAgent: A blue teaming agent enabled by automated red teaming for CodeGen AI

BlueCodeAgent is an end-to-end blue-teaming framework built to boost code security using automated red-teaming processes, data, and safety rules to guide LLMs’ defensive decisions. Dynamic testing reduces false positives in vulnerability detection.